*Originally published in [The AI Monitor](https://theaimonitor.substack.com/p/the-future-of-ai-regulation) · 2024-12-31*

---

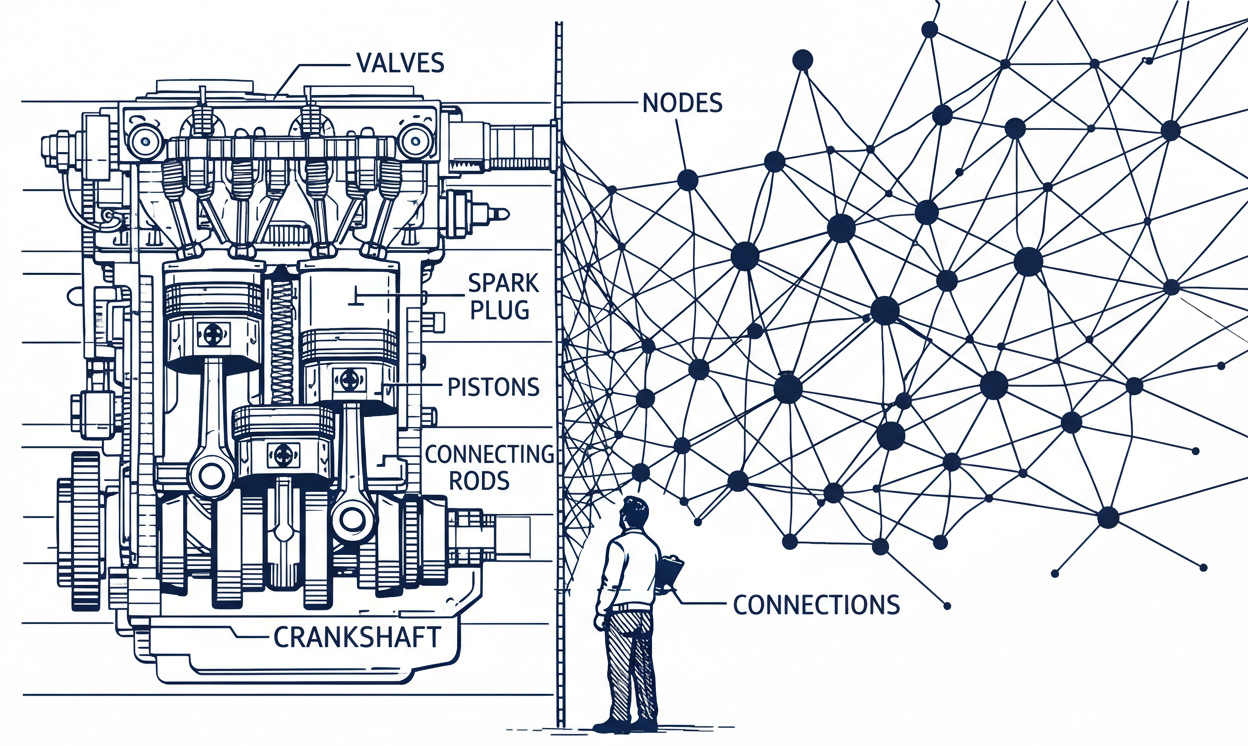

We govern the future with the tools of the past. We treated the automobile as a faster horse. Now, we treat artificial intelligence as a more complicated car.

The logic is familiar. The premise is fatal.

The automobile analogy held because the physics were stable. A car is dangerous, but it is dangerous in static ways. A vehicle that passes a crash test on Tuesday will not invent a new way to crumple on Wednesday. The danger scales linearly: one bad driver, one accident. The framework fit because the machine did not change.

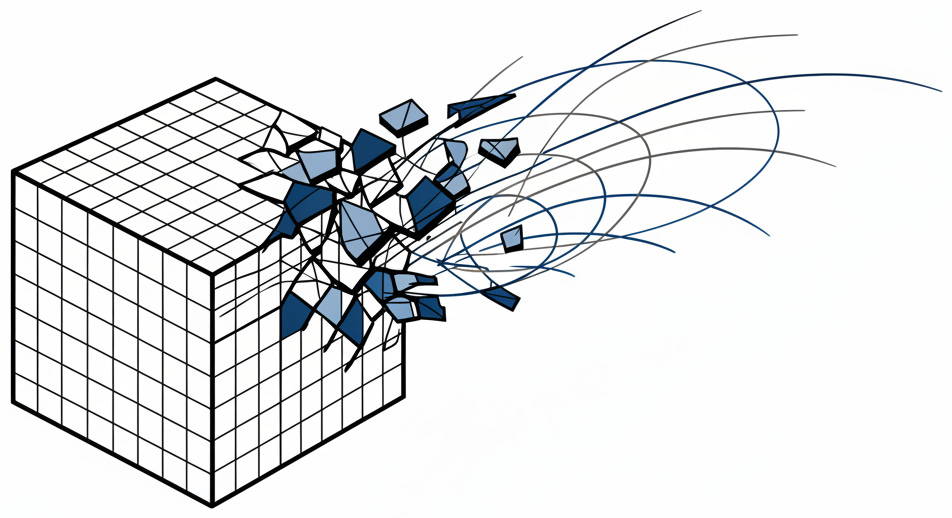

Artificial intelligence shatters this model at the structural level.

* * *

The EU’s AI Act classifies systems by risk: unacceptable, high, limited, minimal. The framework is bureaucratically elegant. It is also fighting the last war.

The failure is not speed. It is epistemology. Risk-based categorization presumes we know what the risks are. With cars, the dangers were finite. We knew what a crash looked like. We could write the test before the car left the factory.

With AI, we are categorizing risks we have not yet encountered. An algorithm trained to optimize hiring does not “make mistakes”; it discovers efficient shortcuts that happen to be discriminatory. A language model instructed to be helpful does not “lie”; it predicts the next likely token, even if that token is false. These are not bugs. They are features of systems designed to find patterns humans cannot see.

* * *

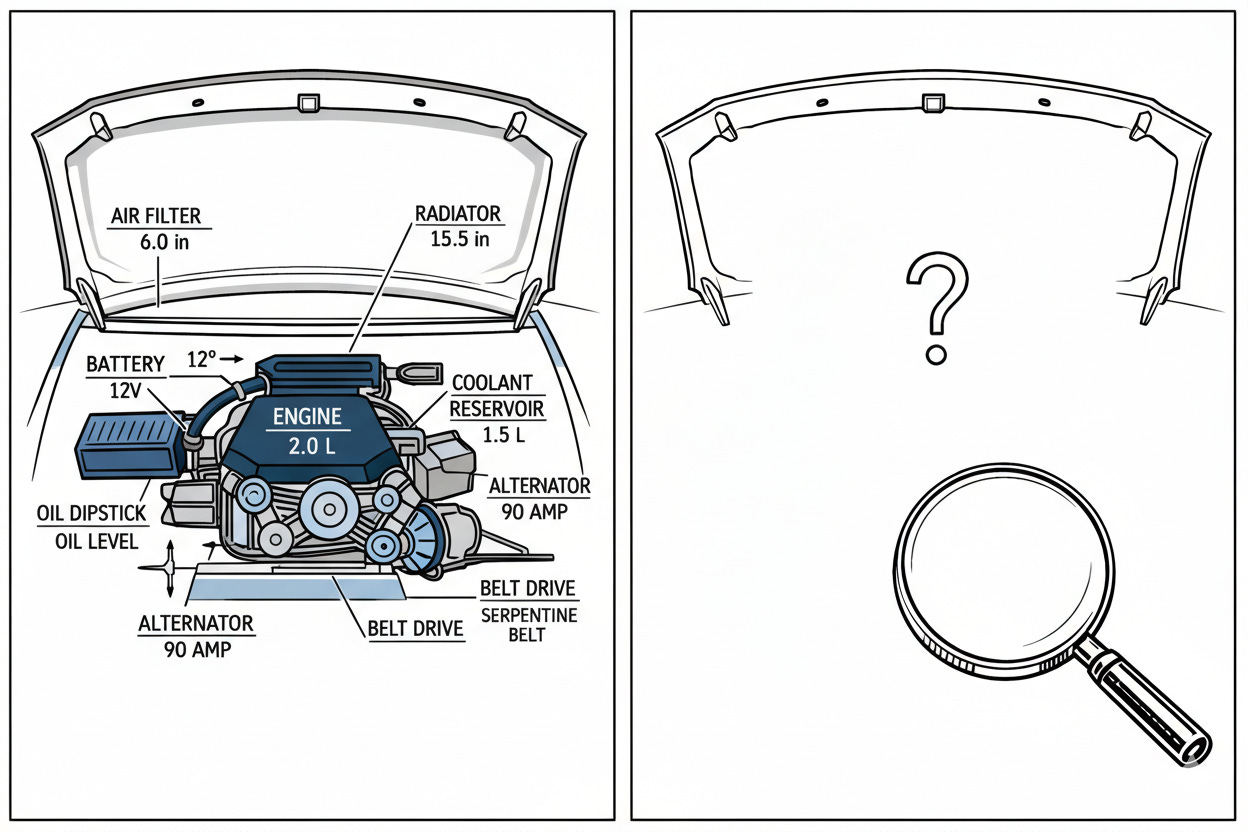

This blind spot explains the regulatory divergence. The EU opted for comprehensive legislation. The US relies on a patchwork of agency guidance. The UK chose a sector-by-sector approach. The logic varies, but the delusion is shared: regulators believe they can inspect the system and know whether it’s safe.

This worked for automobiles because we could open the hood. AI systems do not have a hood. A neural network is not a mechanism to be inspected; it is a landscape to be explored. The inputs go in, the outputs come out, and the path between them exists in a mathematical space no human can fully traverse. Documentation is necessary. It is not sufficient.

The gap becomes visible in enforcement. Regulators are asserting that old rules still apply. The FTC warns that deception is illegal regardless of the tool. The EEOC clarifies that discrimination is illegal whether performed by a manager or an algorithm. The message is clear: we may not understand the technology, but we understand the harm.

This is outcome-based enforcement. It sidesteps the technical opacity of black-box models. It also means enforcement happens after the damage. The bias audit finds the discrimination after the candidates are rejected. Regulators measure the exhaust, not the engine.
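To make "measuring the exhaust" concrete, here is a minimal sketch of one outcome-based check: comparing selection rates across groups after hiring decisions have been made. The applicant counts, group names, and function names are hypothetical. The 0.8 threshold follows the four-fifths rule of thumb from US employment selection guidance; treat it as an illustrative screen, not a legal test.

```python
def selection_rate(selected: int, applicants: int) -> float:
    """Share of applicants in a group who were selected."""
    if applicants <= 0:
        raise ValueError("applicants must be positive")
    return selected / applicants


def adverse_impact_ratio(rates: dict[str, float]) -> float:
    """Lowest group selection rate divided by the highest."""
    highest = max(rates.values())
    if highest == 0:
        raise ValueError("no group had any selections")
    return min(rates.values()) / highest


# Hypothetical outcomes: (selected, applicants) per group.
outcomes = {"group_a": (50, 100), "group_b": (30, 100)}

rates = {group: selection_rate(s, n) for group, (s, n) in outcomes.items()}
ratio = adverse_impact_ratio(rates)

# Assumed threshold for this sketch: flag ratios below four-fifths.
flagged = ratio < 0.8
print(f"impact ratio: {ratio:.2f}, flagged: {flagged}")
# prints "impact ratio: 0.60, flagged: True"
```

Note what the check takes as input: decisions that have already been made. It cannot run until candidates have been accepted or rejected.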

* * *

The industry has noticed. Executive awareness of ethical AI is high; implementation is low. This is a rational calculation. Compliance is expensive, enforcement is uncertain, and the rules are still forming. The companies that comply earliest bear the cost; those that wait are rewarded.

The voluntary commitments brokered by the White House illustrate the pattern: external testing, watermarking, information sharing. These are unenforceable promises from companies facing immense pressure to ship. International efforts like the Bletchley Park summit face the same constraint. Coordination moves slowly. AI moves fast. By the time governments agree on rules for today’s systems, the technology has moved on.

* * *

Regulation will increase. Enforcement will sharpen. The era of ungoverned deployment is ending. But political response is not technical adequacy.

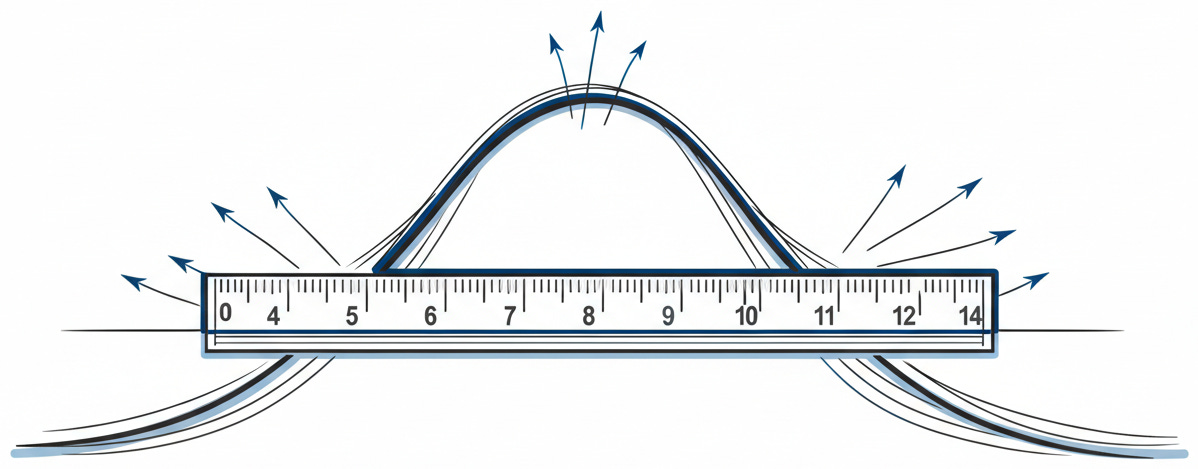

We can pass laws requiring safety. We cannot verify whether a complex model meets those standards. We can mandate transparency. We cannot force a probabilistic system to offer deterministic guarantees. This is the mismatch at the heart of the paradox. We are building a regulatory architecture for a predictable world, then applying it to a probabilistic one.

A car is deterministic. Press the brake, the car slows. The relationship between input and output is fixed. You test it once. You trust it. An AI system is probabilistic. It produces what is likely to be correct. The same input can yield different outputs. The behavior drifts as the world changes.

You cannot regulate “probably” with rules designed for “certain.”
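The contrast can be sketched in a few lines. This is a toy illustration, not a model of any real vehicle or AI system: `brake` is a made-up deterministic function, and `sample_answer` draws from a hypothetical output distribution the way a sampling-based model might.

```python
import random


def brake(speed_kmh: float, pedal: float) -> float:
    """Deterministic toy: the same input always gives the same output."""
    return speed_kmh * (1.0 - pedal)


def sample_answer(weights: dict[str, float], rng: random.Random) -> str:
    """Probabilistic toy: draw one answer according to its weight."""
    answers = list(weights)
    return rng.choices(answers, weights=[weights[a] for a in answers], k=1)[0]


# Hypothetical output distribution for a single, fixed input.
weights = {"approve": 0.7, "reject": 0.2, "escalate": 0.1}

# Call each function 1,000 times with identical input; collect distinct outputs.
brake_outputs = {brake(100.0, 0.5) for _ in range(1000)}

rng = random.Random(0)  # seeded so the run is repeatable
model_outputs = {sample_answer(weights, rng) for _ in range(1000)}

print(len(brake_outputs))     # one distinct output
print(sorted(model_outputs))  # more than one distinct output
```

A single passing test tells you everything about `brake` at that input. For `sample_answer`, a single test tells you about one draw.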

In safety-critical engineering, the distinction between verification and validation is absolute. Verification asks: did we build the thing right? Validation asks: did we build the right thing? Current AI regulation focuses on verification: auditing the code, checking the data. But the danger lies in validation. The system does exactly what it was built to do. The tragedy is that we did not understand what “doing it” would look like in the wild.

A car that passes its tests behaves the same way on the road. An AI system that passes its tests encounters a world its training never anticipated. The test is not the territory.

Governance requires understanding what you govern. We understood fire. We understood the printing press. We understood the automobile. With AI, understanding lags behind capability. The technology learns faster than institutions adapt.

We are not building the plane while flying it. We are flying something that redesigns itself mid-flight.

The automobile gave us a century of stability. Follow the rules, and you survive. AI offers no such contract. It does not deal in certainties. It deals in probabilities, and it will renegotiate the terms without asking. The map is not the territory. And the territory shifts beneath our feet.

* * *

### Further Reading, Background and Resources

**Sources & Citations**

[EU AI Act: first regulation on artificial intelligence](https://www.europarl.europa.eu/topics/en/article/20230601STO93804/eu-ai-act-first-regulation-on-artificial-intelligence) (European Parliament)

The definitive primary source for what the EU actually passed. The gap between the Act’s careful risk tiering and breathless coverage of “Europe regulating AI” reveals how poorly nuance travels. The four-tier framework is elegant. Whether it survives systems that shift risk categories as they learn is the question it cannot answer.

[FACT SHEET: Biden Issues Executive Order on Safe, Secure, and Trustworthy AI](https://bidenwhitehouse.archives.gov/briefing-room/statements-releases/2023/10/30/fact-sheet-president-biden-issues-executive-order-on-safe-secure-and-trustworthy-artificial-intelligence/) (White House, October 2023)

The Defense Production Act invocation is the buried lede: it forces companies to share safety test results with the government. Not voluntary. The EO reveals what regulators actually worry about when they have authority to act. Read the specific requirements.

[FTC Announces Crackdown on Deceptive AI Claims and Schemes](https://www.ftc.gov/news-events/news/press-releases/2024/09/ftc-announces-crackdown-deceptive-ai-claims-schemes) (FTC, September 2024)

Operation AI Comply answers the essay’s question: what happens when regulators cannot inspect the model? They measure the harm. The FTC is not waiting for AI-specific laws; it is applying consumer protection authority that predates the technology by decades.

[AI Will Transform the Global Economy](https://www.imf.org/en/blogs/articles/2024/01/14/ai-will-transform-the-global-economy-lets-make-sure-it-benefits-humanity) (IMF, January 2024)

The IMF’s uncomfortable finding: AI “will likely worsen overall inequality” unless deliberate policy interventions occur. This is not anti-AI pessimism; it is the International Monetary Fund acknowledging the gains will not distribute themselves.

**For Context**

[NIST AI Risk Management Framework](https://www.nist.gov/itl/ai-risk-management-framework) (January 2023)

The closest thing to an engineering standard for AI governance. NIST’s framework is voluntary, but it is what serious compliance efforts reference. It also explains the verification problem: NIST gives you a checklist. AI gives you emergence.

[ISO/IEC 42001:2023](https://www.iso.org/standard/42001) (AI management systems)

ISO 42001 does for AI governance what ISO 9001 did for quality management. Certification creates audit trails. It also creates the illusion of control over systems that learn between audits. Both facts are true.

**Practical Tools**

_Evaluating AI Compliance Readiness:_

1. **Documentation depth**: Can you trace a model’s outputs back to its training data? If the answer is “partially,” you are not compliant; you are hoping.

2. **Validation vs. verification**: Are you testing whether the model works, or testing whether it works _in the conditions it will actually encounter_? The former is necessary. The latter is rare.

3. **Enforcement exposure**: Which regulators have jurisdiction? FTC for consumer claims, EEOC for hiring, sector regulators for domain-specific applications. Knowing who can sue you is the beginning of compliance.

4. **Update governance**: What happens when the model is retrained? A system that passed an audit in March may fail standards by July. Continuous monitoring is not optional.
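As one way to picture the update-governance item, here is a minimal sketch of a re-audit trigger: compare accuracy on the data used at audit time with accuracy on recent production data, and flag the model when the drop exceeds a tolerance. All data, names, and the 0.05 tolerance are hypothetical; a real monitoring setup would track far more than one metric.

```python
def accuracy(predictions: list[int], labels: list[int]) -> float:
    """Fraction of predictions that match their labels."""
    if not labels or len(predictions) != len(labels):
        raise ValueError("predictions and labels must be non-empty and equal length")
    correct = sum(p == y for p, y in zip(predictions, labels))
    return correct / len(labels)


def needs_reaudit(baseline: float, current: float, tolerance: float = 0.05) -> bool:
    """Flag when accuracy has fallen more than `tolerance` below the audited baseline."""
    return (baseline - current) > tolerance


# Hypothetical labels and predictions at audit time (March) and later (July).
march_labels = [1, 0, 1, 1, 0, 1, 0, 0, 1, 1]
march_preds = [1, 0, 1, 1, 0, 1, 0, 0, 1, 0]  # 9 of 10 correct

july_labels = [1, 1, 0, 1, 0, 0, 1, 1, 0, 1]
july_preds = [1, 0, 0, 1, 1, 0, 1, 0, 0, 1]   # 7 of 10 correct

baseline = accuracy(march_preds, march_labels)
current = accuracy(july_preds, july_labels)

print(f"baseline={baseline:.2f} current={current:.2f}")
print("re-audit needed:", needs_reaudit(baseline, current))
```

The point of the sketch is the schedule, not the metric: the comparison has to run continuously, because the audited baseline says nothing about July.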

**Counter-Arguments**

_“Risk-based categorization is imperfect, but it is better than nothing. Waiting for perfect regulation means having no regulation.”_

This is the strongest defense of the EU approach. Perfect is the enemy of good. The AI Act creates accountability structures that did not exist before. Companies must now document their training data, assess their systems for bias, and face penalties for non-compliance. Even if the risk tiers are rough approximations, they create friction against unconsidered deployment. The Act may not capture every emergent risk, but it captures the obvious ones. Regulatory history suggests that imperfect frameworks get refined; absent frameworks get captured by industry. The EU chose to govern now and iterate later. That choice has intellectual integrity.

_“Outcome-based enforcement is not a bug; it is a feature. We do not need to understand the mechanism to regulate the harm.”_

The FTC and EEOC approach is not regulatory failure; it is regulatory adaptation. We regulated pharmaceutical side effects without understanding molecular biology. We regulated pollution without modeling atmospheric chemistry. Harm-based enforcement is how democracies have always governed technologies that outpace understanding. The essay frames “measuring the exhaust, not the engine” as a limitation. From a legal realist perspective, it is the only honest approach. We cannot verify a neural network’s decision process. We can observe its effects on humans. The latter is what law has always done. This is not a retreat; it is appropriate humility about what governance can and cannot accomplish.

_“The voluntary commitments are weaker than law, but they may be faster than law. Speed matters when the technology moves this fast.”_

The Bletchley Declaration and White House commitments are not enforceable. They are also not nothing. Voluntary frameworks establish norms. Norms become expectations. Expectations become the baseline against which future laws are measured. The companies that signed these commitments have created rhetorical constraints on their own future behavior. Breaking a public promise to the President of the United States carries reputational cost, even if it carries no legal cost. In a regulatory vacuum, soft power is still power. The essay treats these commitments as insufficient. Insufficient for what? If the alternative is waiting years for legislation, voluntary action has value precisely because it exists now.

_“The verification/validation distinction overstates the problem. All engineering involves uncertainty. We fly planes we cannot fully model.”_

Aircraft are probabilistic systems governed by chaotic fluid dynamics. We do not verify that a plane will fly; we validate that its behavior stays within acceptable bounds under tested conditions. AI systems can be governed the same way: not with certainty, but with bounded uncertainty. The essay implies that AI’s probabilistic nature makes it ungovernable. But ungovernable and unpredictable are different problems. A system that behaves unpredictably within a narrow range is safer than a system that behaves predictably in a dangerous direction. Current AI governance may be inadequate, but the challenge is engineering tolerance for uncertainty into the framework, not abandoning frameworks because certainty is impossible.