*Originally published in [The AI Monitor](https://theaimonitor.substack.com/p/the-exponential-growth-of-ai) · 2024-07-26*


---


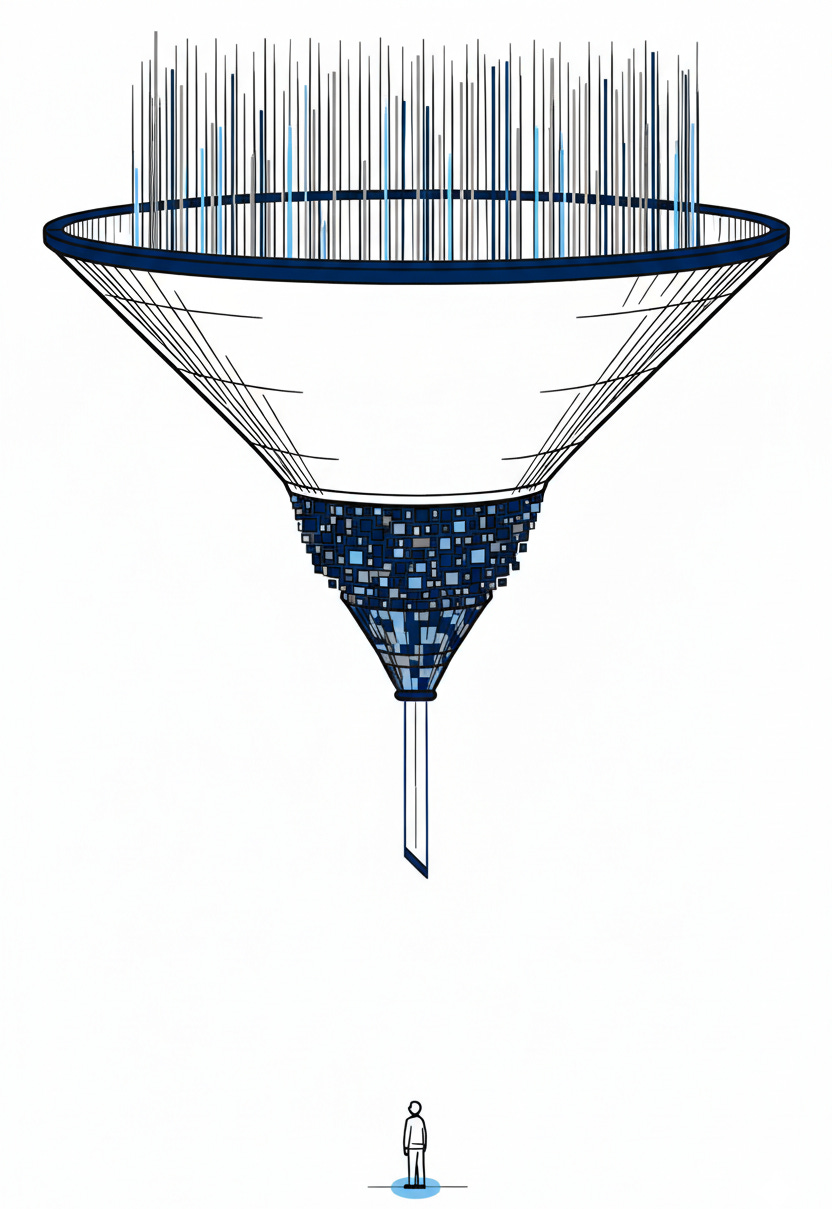

We are spending like we have already solved AI. We are deploying like we are still not sure it works. That gap, between the capital pouring in and the capability being put to use, is the most important number in technology right now. Almost nobody is measuring it.

Venture funding into generative AI surged from roughly $3 billion in 2022 to $25.2 billion in 2023, nearly an eightfold increase in a single year. Venture capital flows at rates that dwarf the dot-com era’s peak years. Corporate R&D budgets have been restructured around a single thesis: that artificial intelligence is a general-purpose technology on the order of electricity, and that being late to it is fatal. The money is betting on transformation. The money is probably right.

But money is not adoption. And adoption is not impact.

Across the economy, the pattern is the same. Companies are buying AI. They are not yet using it, not at the depth the investment implies. In McKinsey’s 2022 survey, roughly half of organisations reported AI deployments in at least one business function, which sounds impressive until we examine what “deployment” means: a pilot project in customer service, a proof-of-concept in marketing analytics, a chatbot bolted onto a help desk that still routes most queries to humans. The gap between purchasing a capability and integrating it into how an organisation actually makes decisions, serves customers, and builds products remains vast.

We have seen this pattern before. Understanding it is more useful than ignoring it.

In 1987, the economist Robert Solow observed that “you can see the computer age everywhere but in the productivity statistics.” It took nearly fifteen years from the introduction of the personal computer for the productivity gains to appear in macroeconomic data. Not because computers did not work. Because organisations had not yet reorganised themselves around what computers made possible.

The same dynamic is unfolding now, faster in some ways, slower in others. The bottleneck has shifted. PCs required new literacy. AI requires new judgment about when to trust the output. AI is not a tool that plugs into existing workflows. It is a capability that demands new workflows be built around it. The company that hands GPT-4 to its employees and waits for productivity to rise is making the same mistake as the company that bought PCs in 1985 and expected them to replace typewriters without changing how anyone worked.

The real gains from general-purpose technologies arrive when companies restructure around them. Processes, incentives, training, and management layers must all shift to accommodate what the technology makes newly possible. That restructuring is slow. It is organisational, not technical. It requires changing how people work, which means rewriting what people are rewarded for, which means rethinking what managers measure, which means changing what executives prioritise. Each of those layers adds years.

This is why the current investment surge and the current deployment reality can both be rational. The capital is pricing in a transformation that will take a decade to fully materialise. The deployment lag reflects something other than hype. It reflects the well-documented pattern of adoption, and we are in the early, expensive, messy phase of that pattern.

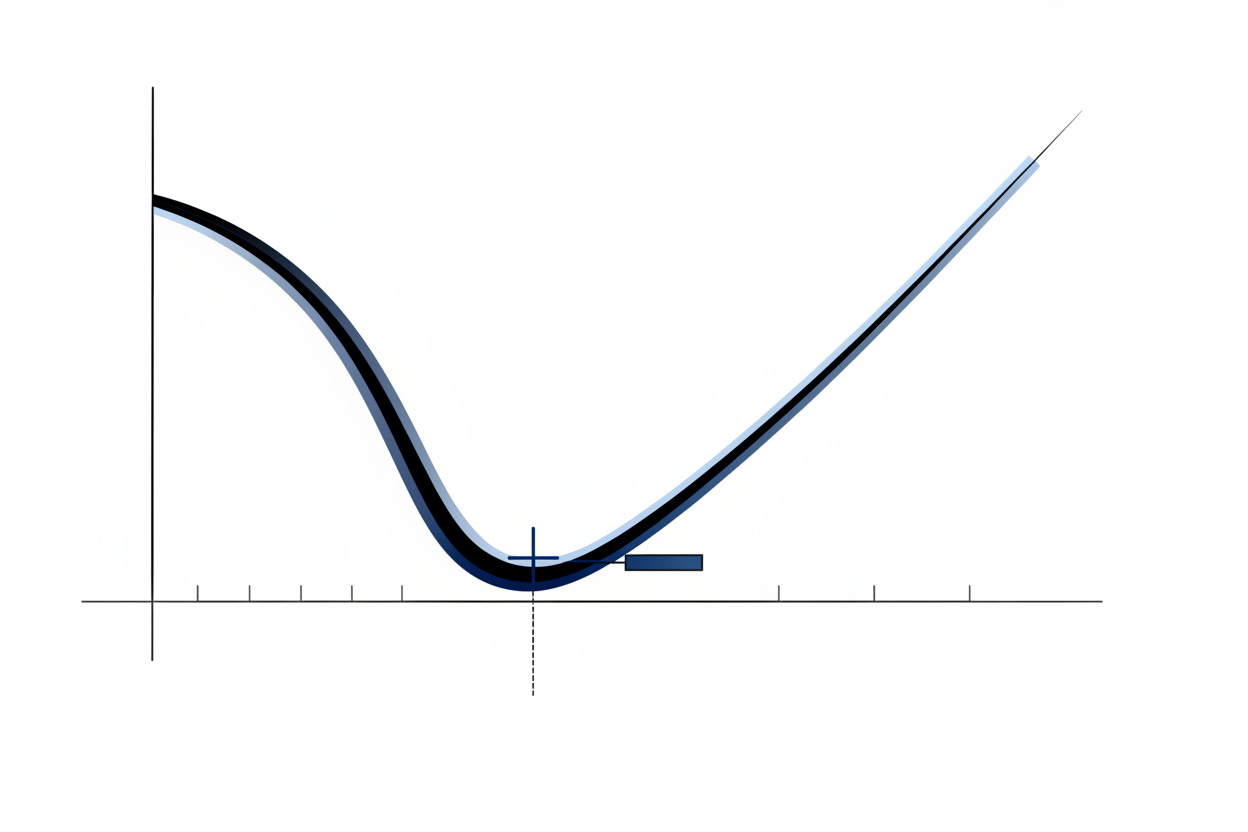

The pattern resembles what economists call the J-curve. Productivity dips as companies invest heavily while still learning to use the technology. The costs are immediate. The returns are deferred. Only after enough organisational learning accumulates does the curve bend upward. The steeper the initial investment, the deeper the dip, and the more alarming it looks to anyone measuring returns on a quarterly basis. This is where we are. The spending is visible. The reorganisation is barely underway. The productivity gains are still largely theoretical.
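The shape of that curve can be sketched with a toy calculation. This is only an illustration under invented assumptions: a flat annual cost, a benefit that ramps linearly to a plateau, and made-up numbers. It is not the model from the J-curve literature, and the function and parameter names are hypothetical.

```python
def cumulative_net_return(years, annual_cost, peak_benefit, ramp_years):
    """Cumulative net return per year for a toy J-curve.

    Assumptions (all illustrative): spending is constant every year, and the
    benefit grows linearly until it reaches peak_benefit after ramp_years.
    """
    totals = []
    running = 0.0
    for year in range(1, years + 1):
        # Benefit ramps up as organisational learning accumulates, then plateaus.
        benefit = peak_benefit * min(year / ramp_years, 1.0)
        running += benefit - annual_cost
        totals.append(running)
    return totals


# Made-up numbers: costs of 10 per year, benefits reaching 20 per year by year 8.
curve = cumulative_net_return(years=10, annual_cost=10.0, peak_benefit=20.0, ramp_years=8)

# Year with the deepest cumulative loss (first occurrence of the minimum).
trough_year = curve.index(min(curve)) + 1
# First year in which cumulative returns are no longer negative.
breakeven_year = next(i + 1 for i, v in enumerate(curve) if v >= 0)

print(curve)
print("trough year:", trough_year, "break-even year:", breakeven_year)
```

With these invented inputs the cumulative position bottoms out in year 3 and only returns to zero in year 7. Raising `annual_cost` while holding the ramp fixed deepens the trough, which is the dip a quarterly view finds so alarming.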


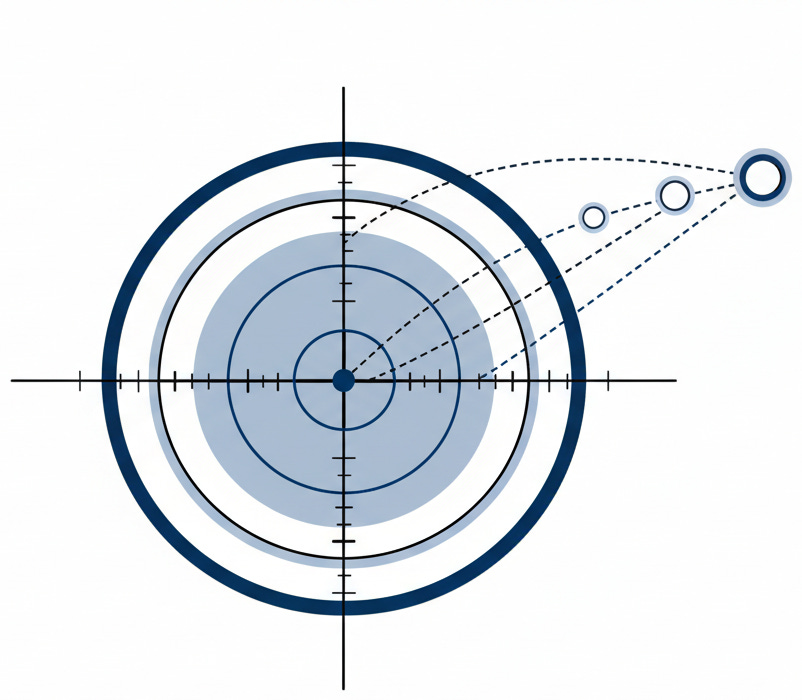

What makes this moment structurally different from the PC era is the speed at which the underlying capability is advancing. In the 1980s, the technology stabilised long enough for organisations to catch up. Today, the models improve faster than most companies can finish evaluating the last generation. Companies are trying to integrate GPT-3.5 while GPT-4 arrives, and by the time their GPT-4 pilots are complete, the next generation is already reshaping what is possible. The target is moving while they aim.


This creates a paradox specific to AI adoption: waiting is rational because the technology keeps improving, and waiting is dangerous because competitors who tolerate imperfect early deployments are building the organisational muscle that will let them absorb each successive generation faster. The workflows, the data pipelines, the institutional knowledge. The advantage accrues not to whoever has the best model, but to whoever has the best integration capability. Models are commoditising: the cost per token has fallen by orders of magnitude in eighteen months, and open-source alternatives now match proprietary performance on many benchmarks. The ability to actually use those models is not commoditising.

The forces pushing toward rapid integration are substantial. Labour costs are rising across developed economies. Knowledge work is increasingly bottlenecked by human processing speed. Competitive pressure in every industry is real and accelerating. The CEO who tells the board “we are taking a cautious, wait-and-see approach to AI” is making the same speech as the CEO who said the same thing about the internet in 1998. But the forces resisting integration are equally substantial, and far less discussed. Middle management has rational reasons to resist AI adoption when the technology threatens to make their coordination role, their headcount, and their budgets less necessary. And the most honest obstacle of all: most companies do not actually know what their processes are, not at the level of detail required to identify where AI fits.

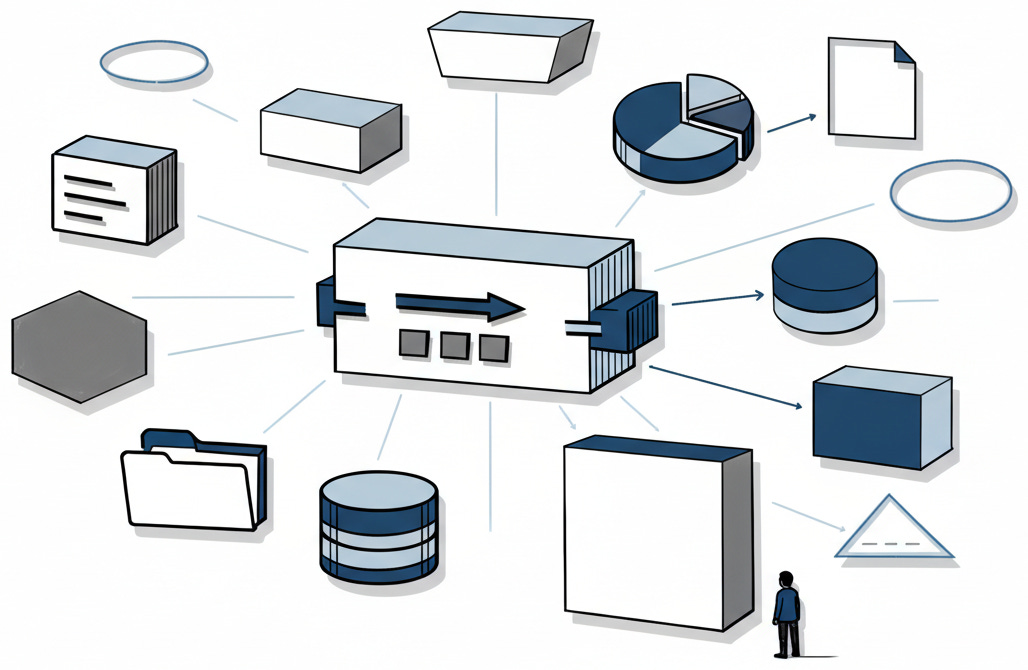

We cannot automate what we have not mapped. And most companies have never had to map their cognitive workflows with the precision that AI integration demands. They know what their people do in broad terms. They do not know, step by step, which decisions involve judgment, which involve pattern-matching, and which are just habit wearing the mask of expertise.


This mapping problem is where the real work of the AI era lives. The breakthroughs in model architecture and prompt engineering get the attention, but the slow, unglamorous work of understanding how an organisation actually functions is what separates the early movers from the rest. Redesigning that organisation for a world where some of its functions can be handled by systems that are fast, cheap, tireless, and occasionally wrong is how the gap begins to close.

The “occasionally wrong” part deserves more attention than it receives. Every previous general-purpose technology, once functioning, produced deterministic output. A light illuminates or it does not. A spreadsheet returns the correct sum or it does not. AI, even when functioning as designed, produces probabilistic output. It introduces probability into processes built on certainty.

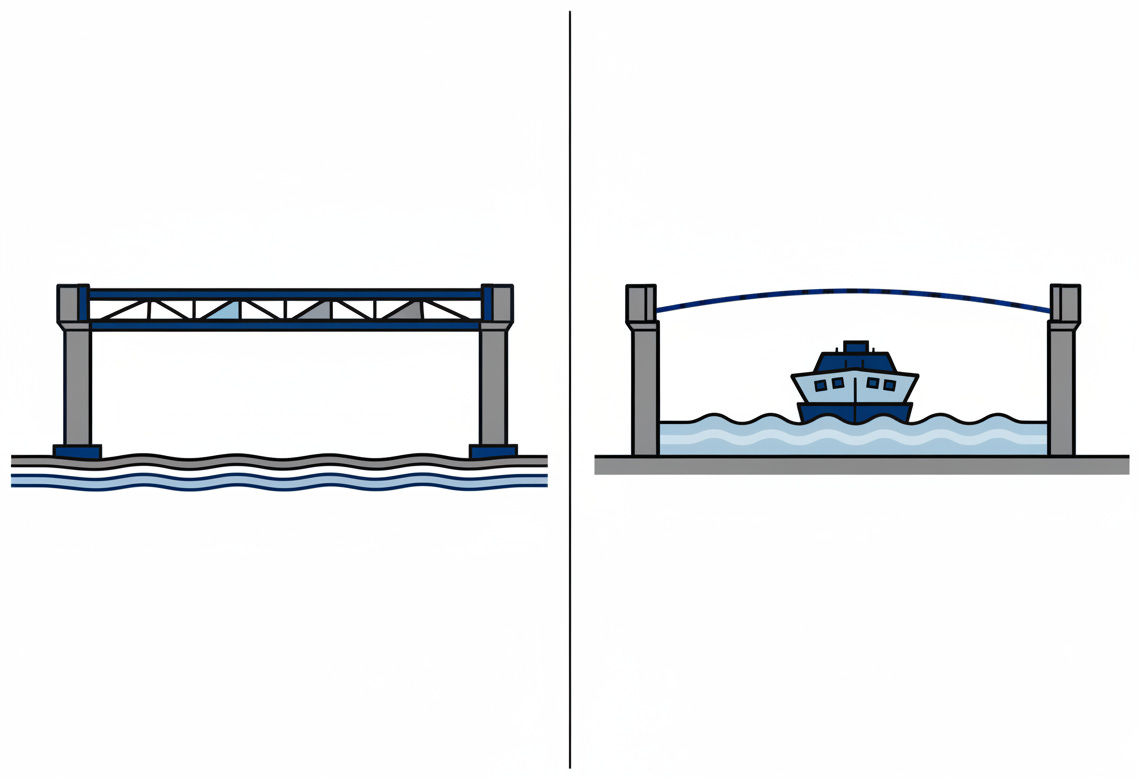

Suppose we replaced a bridge with a ferry that runs ninety-seven percent of the time. The route still exists, but now every downstream workflow must account for the days the ferry does not run. In safety-critical engineering, tolerance for failure is determined by consequence. In business, we are only beginning to learn that distinction. A system that is right ninety-seven percent of the time is transformative in marketing and catastrophic in aerospace, and the same system can be both depending on whether it is drafting copy or screening medical images. Most organisations do not even have a vocabulary for this conversation, let alone a policy. The companies that figure out where to trust probabilistic systems, and where to insist on human judgment, will define the next decade of competitive advantage.
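One way to begin that conversation is to put the consequence next to the error rate. The sketch below is a deliberately crude rule of thumb, not an established policy: it flags a task for human review whenever the expected cost of an unchecked mistake exceeds the cost of checking. The task names, the cost figures, and the `needs_human_review` helper are all hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    cost_per_error: float  # hypothetical cost of one uncaught mistake
    review_cost: float     # hypothetical cost of a human checking one output


def needs_human_review(task: Task, error_rate: float = 0.03) -> bool:
    """True when the expected cost of an unchecked error exceeds the review cost.

    error_rate=0.03 mirrors the "right ninety-seven percent of the time" system.
    """
    return error_rate * task.cost_per_error > task.review_cost


# Illustrative figures only; real costs would come from the organisation itself.
tasks = [
    Task("marketing copy draft", cost_per_error=50.0, review_cost=5.0),
    Task("medical image screen", cost_per_error=1_000_000.0, review_cost=40.0),
]

decisions = {task.name: needs_human_review(task) for task in tasks}
print(decisions)
```

Under these made-up costs the same three percent error rate leaves the marketing draft unreviewed and sends every medical image to a human. The model is the same in both cases; only the consequence differs.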


The organisations that develop this vocabulary fastest will be the first to close the gap between investment and impact.

The investment numbers will continue to climb. They should. The underlying capability is real, the trajectory of improvement is steep, and the potential for economic transformation is genuine. But the productivity gains will continue to lag the investment by years. The PC lag ran roughly fifteen years; with digital infrastructure already in place, the AI lag may be shorter, but organisational change moves at its own pace. There is no reason to believe AI will be the exception to the rule that general-purpose technologies take time to absorb.

Closing the lag requires three things. Organisations must invest as heavily in integration capability as they invest in the technology itself. Management structures must reward experimentation with AI rather than punishing its inevitable early failures. And the technology must stabilise long enough for deployment to catch up, which, given the current pace of advancement, is the least likely of the three.

The most probable trajectory is the one we are on: massive investment, real capability, and a long, uneven, frustrating period where the returns trail the spending. This is not a bubble. Bubbles are built on undifferentiated capital chasing a real trend past the point of rational valuation. This is a lag. It is built on things that work but have not yet been absorbed. Meanwhile, AI-native startups that build processes around the technology from day one face no integration lag at all, and their existence is what makes the lag existential for everyone else.

The distinction matters.

Bubbles pop. Lags close.

We are building the most powerful cognitive technology in a generation and discovering that the hard part was never making it work. The hard part is making ourselves work differently. That has always been the hard part.

The technology changes in months. The organisations, the incentive structures, the habits of mind. Those change in years. And it is in that gap, between what the machine can do and what we have learned to let it do, that the actual story of AI is being written. Not in the research labs. Not in the investment rounds. In the slow, difficult, necessary work of becoming the kind of enterprises that can absorb what we have built.

* * *

### Further Reading, Background and Resources

**Sources & Citations**

[Stanford HAI AI Index Report 2024](https://aiindex.stanford.edu/report/) (April 2024). The source for the $3 billion to $25.2 billion generative AI funding surge. The report’s deeper finding: overall AI private investment actually declined from its 2021 peak. The surge is generative AI specifically, not AI broadly. That distinction matters.

[Brynjolfsson, Rock, Syverson: “The Productivity J-Curve”](https://www.nber.org/papers/w25148) (NBER, 2018; _AEJ: Macroeconomics_, 2021). The theoretical foundation for why massive investment and negligible productivity gains can coexist. General-purpose technologies produce a characteristic J-curve: productivity stalls as firms invest in intangible complements, then rises sharply once that invisible capital accumulates. They trace the pattern through electricity and computing, where the lag ran fifteen to twenty-five years.

[McKinsey: “The State of AI in 2022”](https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai-in-2022-and-a-half-decade-in-review) (December 2022). Source of the roughly-half adoption figure. Read with one caveat: McKinsey sells the consulting that makes AI adoption happen, so their incentive is to report a landscape that is growing but complex enough to need help.

[Sequoia Capital: “AI’s $600B Question”](https://sequoiacap.com/article/ais-600b-question/) (June 2024). David Cahn calculates a $600 billion gap between AI infrastructure spending and the revenue required to justify it. When one of Silicon Valley’s most aggressive AI investors publishes an analysis showing the economics do not yet work, the intellectual honesty is worth noting.

**For Context**

_Robert Solow’s Productivity Paradox (1987)_. The original line appeared in a [_New York Times_ book review](https://www.standupeconomist.com/pdf/misc/solow-computer-productivity.pdf) on July 12, 1987. One sentence, in a book review, by a Nobel laureate. It launched an entire subfield of economics. The reason it endures is that it keeps being true for every general-purpose technology.

_[CFA Institute: “Venture Capital: Lessons from the Dot-Com Days”](https://blogs.cfainstitute.org/investor/2024/03/01/venture-capital-lessons-from-the-dot-com-days/) (March 2024)_. Notes that 44% of 2023 unicorns were concentrated in AI and machine learning, the kind of sectoral crowding that characterised the late 1990s internet boom. Worth reading alongside the Sequoia piece.

**Counter-Arguments**

_The lag may close faster than historical precedent suggests._ Unlike electricity or the PC, AI arrives into organisations that are already digitised. The complementary infrastructure exists. The deployment interface is natural language, which means adoption does not require the technical retraining that delayed PC integration. The historical parallels are instructive, but the boundary conditions have changed.

_AI is already delivering measurable returns._ Brynjolfsson, Li, and Raymond (2023) documented a 14% productivity increase among 5,179 customer support agents, with 34% gains for novices. Noy and Zhang (2023) found 40% faster task completion among professionals. The aggregate statistics have not moved, but the micro-level evidence is substantial. The J-curve may be real, but we may be closer to the inflection than the essay implies.

_The ceiling may be lower than bulls expect._ Daron Acemoglu estimates only 4.6% of tasks will be meaningfully affected by AI in the near term, producing roughly 1% GDP gain over a decade. If he is right, the investment-adoption gap is not just a timing problem. It is, in part, an expectations problem.