*Originally published in [The AI Monitor](https://theaimonitor.substack.com/p/the-august-problem) · 2026-01-14*

---

Somewhere in Europe right now, a certification engineer is staring at a compliance matrix that is mostly red.

She has spent fifteen years certifying automotive software under ISO 26262. She knows exactly how to demonstrate that a braking algorithm will engage within 47 milliseconds, every time, under every specified condition. She has built safety cases so rigorous that a regulator can trace a single line of code back through the architecture, through the safety requirements, all the way to the hazard analysis that justified its existence. This is what she was trained to do, what her entire professional identity is built on: the elimination of uncertainty.

Now she is looking at a neural network that identifies pedestrians stepping off a kerb. Correctly 99.7% of the time. The EU AI Act says she must certify this system as safe by August 2026. She does not know what happens in the 0.3%. Nobody does. That is the nature of the technology. And no amount of deadline extension changes what the 0.3% actually is.

The matrix in front of her has more red cells than green. She is not incompetent. She is facing a category error.

* * *

On 2 August 2026, the EU AI Act’s requirements for high-risk AI systems become enforceable. Safety-critical industries have until August 2027 for AI embedded in regulated products, including automotive, aerospace, medical devices, and rail. The assumption is that these industries need more time because AI compliance is technically complex. That assumption misdiagnoses the disease.

Safety-critical industries have the most mature assurance frameworks on earth. Aerospace has DO-178C and ARP4754A. Automotive has ISO 26262 and ISO 21448 (first issued as ISO/PAS 21448), known as SOTIF. Medical devices have IEC 62304 and ISO 14971. Rail has EN 50128. These frameworks encode decades of accumulated wisdom about how to build systems that do not kill people. Rigorous to the point of reverence, they have saved countless lives. And they rest on a foundational assumption that artificial intelligence violates at every level: that a system’s behaviour is deterministic and can be exhaustively specified, tested, and verified.

This is the August Problem. Not a compliance gap. Not a resource constraint. Not a matter of insufficient time. It is the collision between an entire epistemology of safety and a technology that operates by fundamentally different rules.

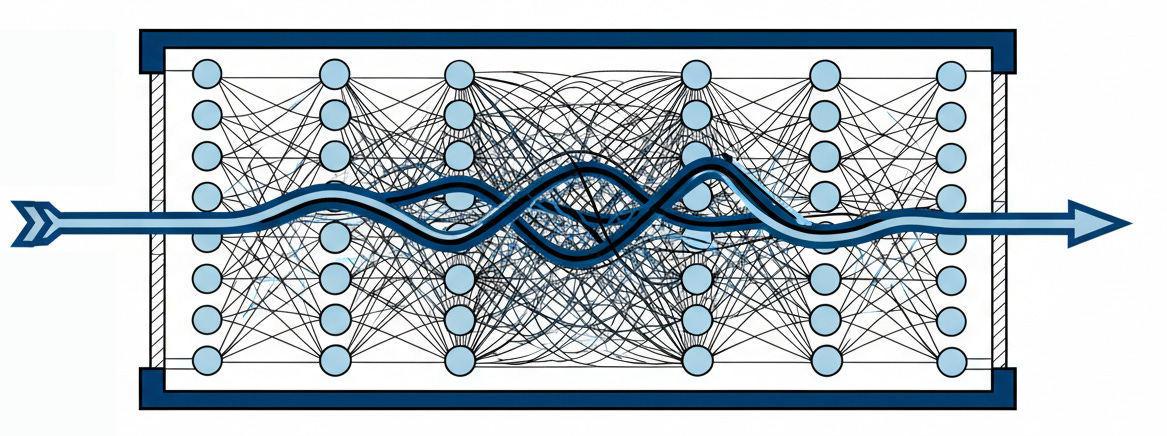

DO-178C at its highest assurance level, DAL-A, is reserved for software whose failure could be catastrophic. The standard demands Modified Condition/Decision Coverage: each Boolean condition in each decision must be shown to independently affect the outcome. For conventional avionics, this is demanding but coherent. For a neural network with millions of learned parameters, the concept does not become difficult. It becomes meaningless. There are no Boolean conditions to cover. There is no decision logic to trace. The “code” is a matrix of floating-point weights produced by training, and the relationship between any individual weight and any safety-relevant output is not merely obscure. It is, in any engineering sense of the word, unknowable.
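
To make the contrast concrete, here is a minimal Python sketch of what MC/DC asks for on a conventional decision. The `brake_command` function and its three conditions are hypothetical, invented for illustration and not taken from any standard or product. The helper `independence_pairs` is also an assumption of this sketch: it searches the truth table for pairs of inputs that differ in exactly one condition and flip the outcome, which is the "unique cause" reading of MC/DC.

```python
from itertools import product


def brake_command(obstacle_detected, speed_above_threshold, driver_override):
    # Hypothetical decision logic, invented purely for illustration.
    return obstacle_detected and speed_above_threshold and not driver_override


def independence_pairs(decision, n_conditions):
    """For each condition index, find one pair of input vectors that differ
    only in that condition and produce different decision outcomes."""
    pairs = {}
    for vector in product([False, True], repeat=n_conditions):
        for i in range(n_conditions):
            if i in pairs:
                continue  # already have a pair for this condition
            flipped = list(vector)
            flipped[i] = not flipped[i]
            flipped = tuple(flipped)
            if decision(*vector) != decision(*flipped):
                pairs[i] = (vector, flipped)
    return pairs


pairs = independence_pairs(brake_command, 3)
for index, (a, b) in sorted(pairs.items()):
    print(f"condition {index}: {a} vs {b}")
```

Every condition in this decision has such a pair, so a handful of test vectors is enough to show independent effect. A trained network offers no comparable list of conditions to hand to a function like `independence_pairs`: its behaviour lives in weights, not in Boolean decisions.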

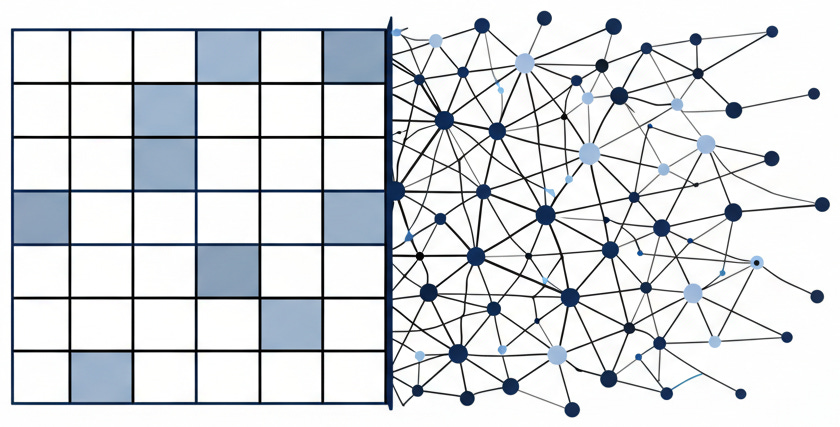

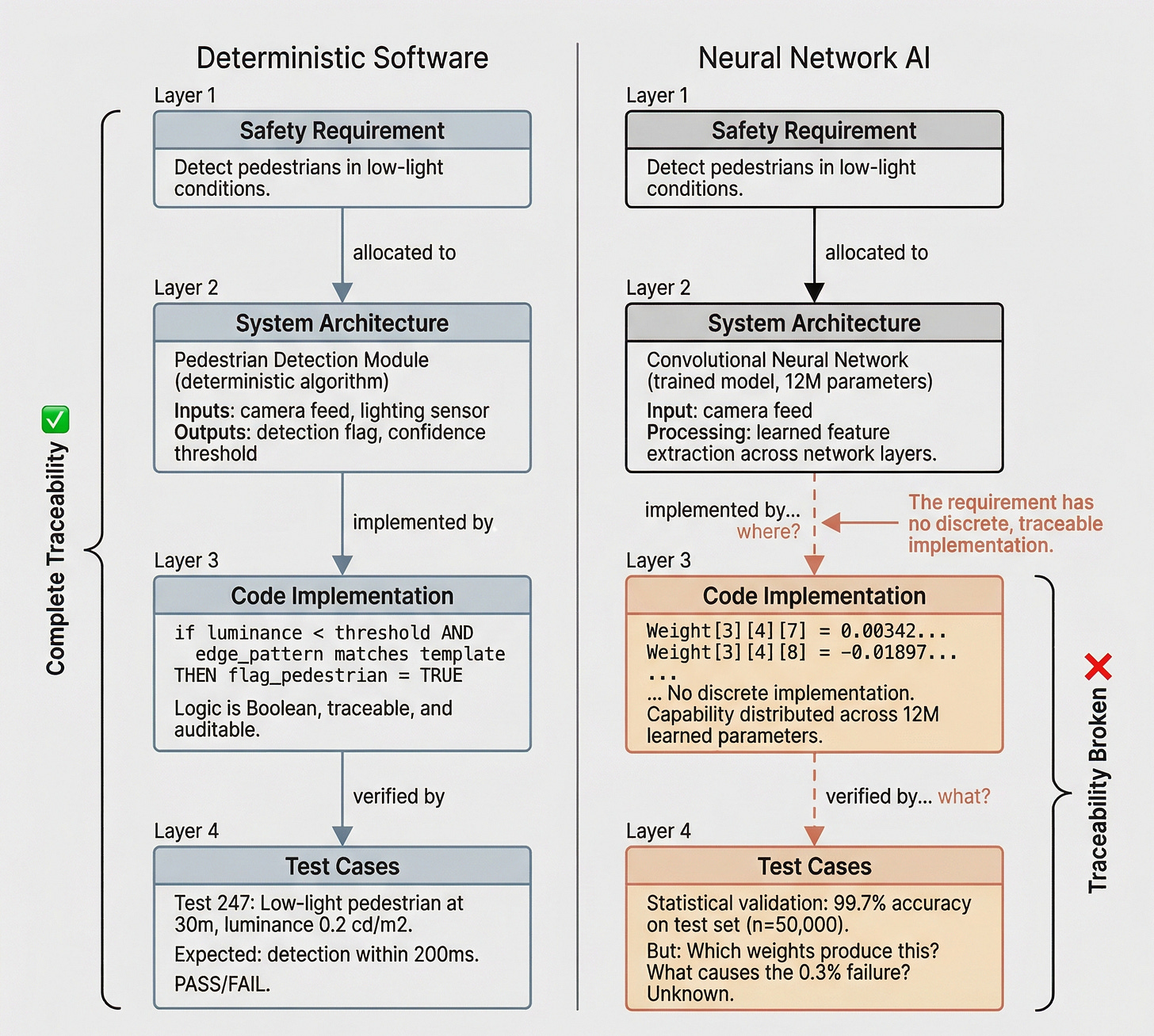

The same structural problem appears everywhere we look. ISO 26262 requires full traceability from safety requirements through architecture through code through test cases. In a neural network, there is no meaningful trace from a safety requirement like “detect pedestrians in low-light conditions” to the weight configuration that produces that capability. The requirement has no discrete, traceable implementation. It emerges from training data interacting with network architecture over millions of iterations.

[](https://substackcdn.com/image/fetch/$s_!Y32q!,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2Fb3a8e827-544b-49c2-85c3-4109f01138b8_2048x1839.png)

> _The breakdown of safety traceability when applied to AI systems. Traditional deterministic software (left) supports complete requirement-to-test traceability. Neural network-based AI systems (right) break the chain at the implementation layer, where safety requirements have no discrete, traceable realisation._
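
The broken chain can be sketched as a data structure. The following is an illustrative Python model, not a representation of any real traceability tool: the `TraceLink` class, the requirement IDs, the component names, and the test-case IDs are all hypothetical. It checks whether each safety requirement traces through every layer and reports where the chain has no entry.

```python
from dataclasses import dataclass, field


@dataclass
class TraceLink:
    """One requirement and the artefacts it traces to (all names hypothetical)."""
    requirement: str
    architecture_elements: list = field(default_factory=list)
    code_units: list = field(default_factory=list)
    test_cases: list = field(default_factory=list)


def broken_links(links):
    """Return {requirement: [layers with no traced artefact]}."""
    gaps = {}
    for link in links:
        missing = [
            layer
            for layer in ("architecture_elements", "code_units", "test_cases")
            if not getattr(link, layer)
        ]
        if missing:
            gaps[link.requirement] = missing
    return gaps


links = [
    # Conventional software: a discrete function implements the requirement.
    TraceLink("SR-1 brake engages within 47 ms",
              ["BrakeController"], ["brake_ctrl.c:engage()"], ["TC-101"]),
    # Neural network: the capability is spread across learned weights,
    # so there is no discrete code unit to record.
    TraceLink("SR-2 detect pedestrians in low-light conditions",
              ["PerceptionNet"], [], ["TC-202"]),
]

gaps = broken_links(links)
print(gaps)
```

The second requirement still has an architecture element and tests. What it lacks is the middle of the chain, and writing "the weights file" into `code_units` would fill the cell without restoring the trace.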

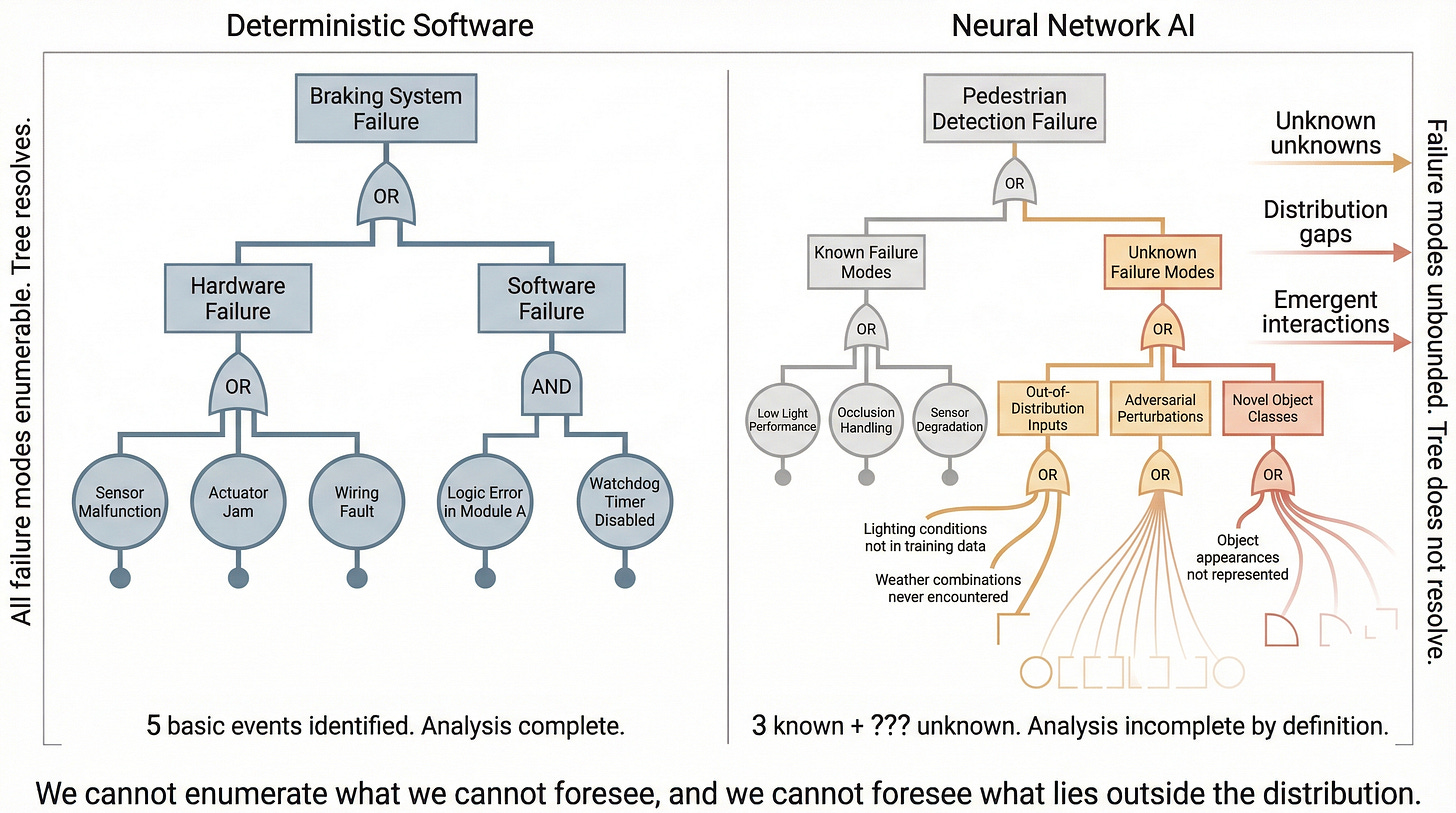

Traditional safety analysis techniques, including FMEA, fault tree analysis, and HAZOP, work by enumerating failure modes. For conventional software, failure modes are bounded by the logic of the code. For AI systems, they include everything the training data did not cover: unknown by definition, potentially unbounded. We cannot enumerate what we cannot foresee, and we cannot foresee what lies outside the distribution.

[](https://substackcdn.com/image/fetch/$s_!fMbN!,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F225bad0f-aa02-4c8e-a89f-d7c8484e5f7e_2752x1536.png)

> _A fault tree analysis applied to deterministic software (left) versus an AI system (right). Traditional fault trees resolve to enumerable basic events. For neural networks, the tree expands into unbounded, unenumerable failure modes that extend beyond any analysis frame._
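
A small worked example shows why enumeration matters. This Python sketch quantifies a toy fault tree with AND and OR gates, assuming independent basic events. The events and their probabilities are made-up illustrative numbers, not data from any real system.

```python
from math import prod


def or_gate(probabilities):
    """P(at least one input event occurs), assuming independent events."""
    return 1.0 - prod(1.0 - p for p in probabilities)


def and_gate(probabilities):
    """P(all input events occur), assuming independent events."""
    return prod(probabilities)


# Illustrative, made-up basic-event probabilities per operating hour.
sensor_fault = 1e-4
wiring_fault = 2e-4
backup_channel_fails = 5e-3

# The primary channel fails if either of its basic events occurs;
# the top event requires both channels to fail.
primary_channel_fails = or_gate([sensor_fault, wiring_fault])
top_event = and_gate([primary_channel_fails, backup_channel_fails])

print(f"P(top event) = {top_event:.3e}")
```

The arithmetic is trivial once the list of basic events is complete. For a neural network, the difficulty sits upstream of the arithmetic: there is no finite list to pass to `or_gate`, because the failure modes include every input the training data did not cover.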

Even SOTIF, the most AI-aware standard in the safety-critical canon, was designed for advanced driver assistance systems, not fully autonomous ones. Its own documentation acknowledges that “as a standard for a complex and constantly developing technology such as automated driving, SOTIF itself has many limitations.” That is the standards community stating reality. The tools are not ready, and they know it.

The Commission knows it. Eighteen months before enforcement, the regulatory architecture has admitted it is not ready. On 19 November 2025, the European Commission published the Digital Omnibus proposal, making the application date for high-risk AI requirements conditional on the readiness of standards that do not yet exist. The proposal explicitly acknowledges “the absence of harmonised standards for AI Act’s high-risk requirements.” It cites delays in appointing conformity assessment bodies and national competent authorities, and proposes a “moveable start date” with a ceiling of December 2027.

This is not bureaucratic housekeeping. This is an admission that the August Problem was baked into the regulatory architecture from the start. CEN-CENELEC’s Joint Technical Committee 21 is developing the necessary standards, but the first of these, prEN 18286, only entered public enquiry in October 2025, six months past the original April 2025 deadline. Even under an accelerated process that bypasses the formal vote, enforcement will begin without mature, implementable standards.

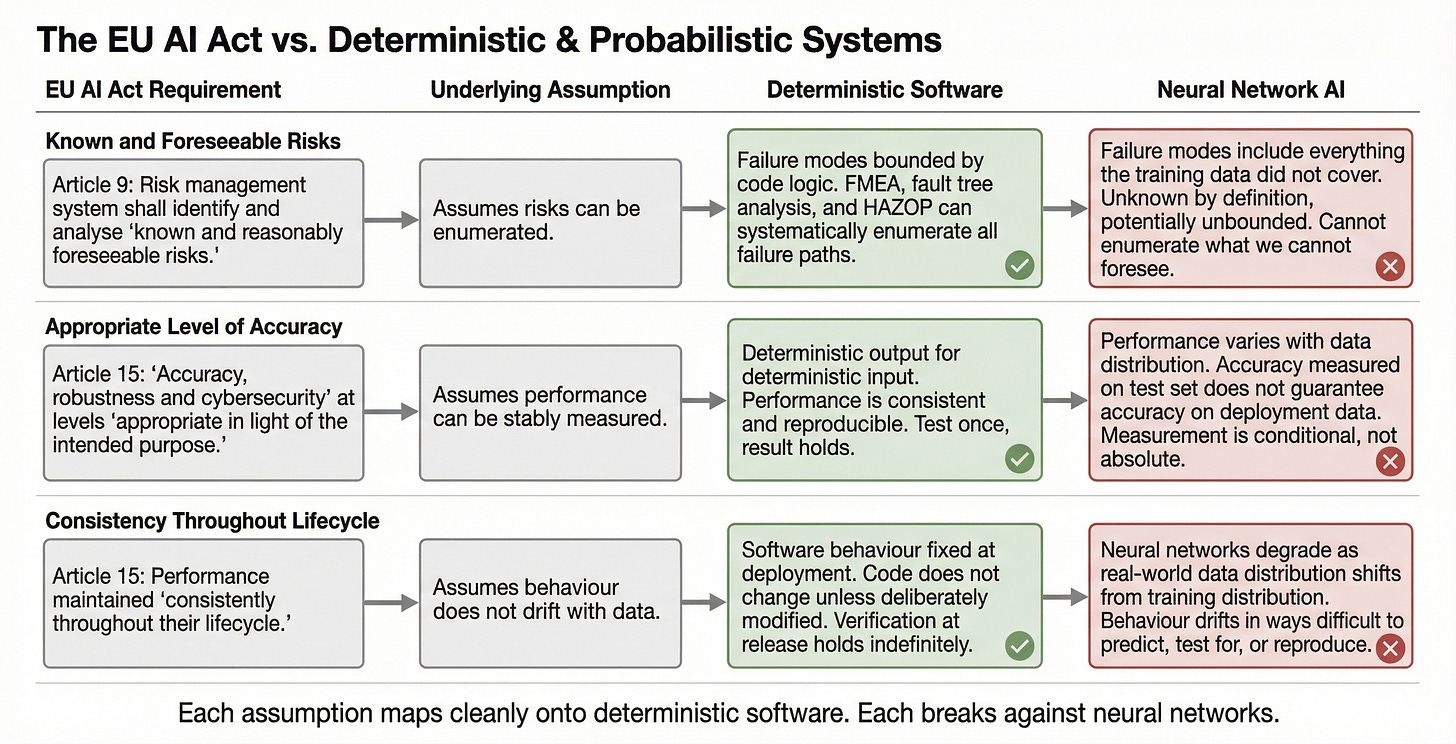

The delay reveals something deeper than a scheduling problem. The EU AI Act’s requirements are, in themselves, sensible. Article 9 requires a risk management system that identifies and analyses “known and foreseeable risks.” Article 15 requires “accuracy, robustness and cybersecurity” at levels “appropriate in light of the intended purpose,” with performance maintained consistently throughout the system’s lifecycle. Read in isolation, these are reasonable demands. Read against the reality of probabilistic AI systems, they expose assumptions about the nature of risk. “Known and foreseeable” assumes we can enumerate risks. “Appropriate level of accuracy” assumes we can stably measure performance. “Consistently throughout their lifecycle” assumes behaviour does not drift with data. Each assumption maps cleanly onto deterministic software. Each breaks against neural networks that degrade in ways that are difficult to predict, test for, or reproduce.

[](https://substackcdn.com/image/fetch/$s_!Th9n!,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F7e19b6c5-0371-4eb4-82c0-943f8a604fab_2751x1405.png)

> _How three core assumptions embedded in the EU AI Act's requirements map onto deterministic software and break against probabilistic AI systems. Each regulatory requirement presupposes properties that neural networks structurally cannot guarantee._
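
The measurement assumption can be illustrated with a little statistics. The sketch below computes a Wilson score interval for an observed accuracy of 99.7%, the figure used earlier in this essay. The test-set sizes are hypothetical, and the calculation assumes the test samples are independent and drawn from the same distribution the system will meet in service, which is precisely the assumption that data drift undermines.

```python
from math import sqrt


def wilson_interval(successes, trials, z=1.96):
    """Wilson score interval for a binomial proportion.
    z = 1.96 corresponds to roughly 95% confidence."""
    if trials <= 0:
        raise ValueError("trials must be positive")
    p_hat = successes / trials
    denom = 1.0 + z * z / trials
    centre = (p_hat + z * z / (2 * trials)) / denom
    half_width = (
        z * sqrt(p_hat * (1 - p_hat) / trials + z * z / (4 * trials * trials))
    ) / denom
    return centre - half_width, centre + half_width


# Hypothetical test-set sizes, each with 99.7% of samples classified correctly.
for trials in (1_000, 100_000):
    successes = round(0.997 * trials)
    low, high = wilson_interval(successes, trials)
    print(f"n={trials}: observed 99.7%, interval [{low:.4%}, {high:.4%}]")
```

A larger test set narrows the interval, but only for the distribution that was sampled. The interval says nothing about inputs from conditions the test set never contained.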

The technical challenge is structural. The institutional challenge is existential. Safety-critical industries select, train, and promote engineers for their ability to eliminate uncertainty. The entire professional culture is built on a premise that has served civilisation well: “probably safe” is not safe. A bridge that probably will not collapse is not a bridge we build. An aircraft system that probably will execute the right manoeuvre is not a system we certify.

Now these same engineers and institutions are being asked to certify systems where “probably safe” is the best anyone can offer. It is a confrontation with professional identity itself. Two institutional responses are already forming. Some organisations will avoid AI in safety-critical applications entirely, preserving the certainty their culture demands while ceding competitive ground to those willing to engage. Others will apply deterministic frameworks to probabilistic systems, producing compliance documentation that is formally rigorous and epistemically hollow. The result: thick binders full of traceability matrices that trace nothing meaningful, coverage reports that cover the wrong kind of ground.

Both responses are dangerous. Avoidance delays the integration of technology that, with proper assurance, could save lives. Hollow compliance creates the appearance of safety without its substance. The gap between a certification stamp and genuine assurance becomes the space where failures incubate.

What the August Problem demands is something harder than either avoidance or theatre. It demands genuinely new assurance methodologies: frameworks native to the technology under review rather than deterministic scaffolding with AI-shaped patches. This work has begun in fragments. SOTIF points in the right direction. The FDA’s Predetermined Change Control Plans for AI-based medical devices acknowledge that models will change after deployment. EASA is developing guidance for machine learning in aviation. But none of these efforts have yet produced what safety-critical AI actually requires: a coherent, mature framework for assuring probabilistic systems that is as rigorous on its own terms as ISO 26262 and DO-178C are on theirs.

The paradox at the heart of the August Problem is that the industries best equipped to build these new frameworks are the ones least culturally prepared to do so. They understand safety assurance better than anyone. They have the deepest expertise in identifying failure modes, designing redundancy, and building cases that regulators trust. They also have the deepest attachment to the epistemological foundations AI requires them to rethink.

* * *

The question in front of us is not whether the August deadline will slip. The Digital Omnibus has already signalled that it will. The question is whether we use the borrowed time to do the actual work. Every safety-critical standard we rely on today was once a response to a new technology that the existing frameworks could not contain. The pattern is not new. What is new is the scale of the epistemological shift being demanded. We are not moving from one kind of deterministic assurance to another. We must assure systems whose fundamental operating principle is statistical approximation, in domains where failure costs human lives.

The organisations that will navigate this best are those willing to sit with the discomfort of not yet knowing how, rejecting both the pretence that old tools still work and the temptation to walk away entirely. They are the ones who recognise that the August Problem is not a compliance exercise to be managed. It is an epistemological reckoning to be faced. The certification engineer staring at her red-celled matrix sees something larger than a deadline. She is looking at the future of safety assurance itself. The question is whether we will build something worthy of what it protects.

* * *

### Further Reading, Background and Resources

**Sources & Citations**

* **[Regulation (EU) 2024/1689](https://eur-lex.europa.eu/eli/reg/2024/1689/oj/eng)** (European Parliament and Council, July 2024). The full legislative text. Articles 9 and 15 encode assumptions about deterministic systems that the drafters may not have consciously intended.

* **[Digital Omnibus on AI Regulation Proposal](https://digital-strategy.ec.europa.eu/en/library/digital-omnibus-ai-regulation-proposal)** (European Commission, November 2025). The document in which the Commission quietly acknowledges that the tools needed for compliance do not yet exist. The [OneTrust analysis](https://www.onetrust.com/blog/eu-digital-omnibus-proposes-delay-of-ai-compliance-deadlines/) provides a readable summary, but the Commission’s own framing of “absence of harmonised standards” is more revealing than any commentary.

* **[An Analysis of ISO 26262: Using Machine Learning Safely in Automotive Software](https://arxiv.org/pdf/1709.02435)** (Salay, Queiroz, Czarnecki, 2017). Published seven years before the AI Act entered into force, this paper identified the five distinct ways neural networks violate ISO 26262’s foundational assumptions. Worth reading for the methodology alone.

**For Context**

* **[Deterministic or Probabilistic Analysis?](https://risktec.tuv.com/knowledge-bank/deterministic-or-probabilistic-analysis/)** (Risktec/TUV). A concise primer on the conceptual distinction that underpins this essay. Probabilistic methods have historically been applied to hardware with statistically characterisable failure rates, not to software whose behaviour emerges from training data distributions.

* **[Standardisation of the AI Act](https://digital-strategy.ec.europa.eu/en/policies/ai-act-standardisation)** (European Commission). The official tracker for CEN-CENELEC’s Joint Technical Committee 21. The timeline tells its own story: a standardisation request issued in May 2023 with an April 2025 deadline, pushed to end of 2025, with the first standard only reaching public enquiry in October 2025.

**Practical Tools**

For organisations assessing their position on the August Problem, three questions can structure the evaluation:

* **Traceability audit.** Can you trace from each safety requirement through your architecture to a specific, discrete implementation? If the answer involves “distributed across weights,” your traceability framework is not fit for purpose.

* **Coverage methodology.** What does “test coverage” mean for your AI components? If you are applying MC/DC or branch coverage metrics to neural networks, you are measuring the wrong thing.

* **Cultural readiness.** Is your engineering organisation prepared to certify systems where “probably safe” is the best available assurance? The hardest part of the August Problem is not technical. It is institutional willingness to engage with probabilistic assurance without retreating to false certainty or abandoning rigour entirely.
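
As one hedged illustration of what engaging with probabilistic assurance can look like in practice, the Python sketch below uses a standard binomial argument: if a system completes n independent, representative trials with zero failures, how large must n be before we can claim, at a given confidence, that its true failure probability is below a stated bound? The function name and the example bounds are assumptions of this sketch, not figures from the AI Act or any standard.

```python
from math import ceil, log


def failure_free_trials_needed(max_failure_rate, confidence=0.95):
    """Smallest n such that n consecutive failure-free independent trials
    support the claim 'failure probability < max_failure_rate' at the
    given confidence, i.e. (1 - max_failure_rate) ** n <= 1 - confidence."""
    if not 0 < max_failure_rate < 1:
        raise ValueError("max_failure_rate must be between 0 and 1")
    if not 0 < confidence < 1:
        raise ValueError("confidence must be between 0 and 1")
    return ceil(log(1 - confidence) / log(1 - max_failure_rate))


# 0.003 matches the 0.3% discussed in this essay; 1e-6 is an arbitrary,
# much stricter bound chosen only to show how the evidence burden scales.
for bound in (3e-3, 1e-6):
    n = failure_free_trials_needed(bound)
    print(f"bound {bound:g}: {n} failure-free trials needed at 95% confidence")
```

The required evidence grows roughly in inverse proportion to the bound, and the argument holds only if the trials are independent and representative of operation. That caveat is a statement about data, not code, which is why coverage metrics borrowed from deterministic software do not answer it.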

**Counter-Arguments**

* **The regulatory framework is intentionally flexible.** Article 15 requires an “appropriate level” of accuracy, not a deterministic guarantee. The Digital Omnibus is evidence that the system is functioning as designed. These industries have adapted before: when software first entered safety-critical systems in the 1970s, existing frameworks were equally unprepared. DO-178 and its successors emerged from that recognition.

* **The essay underweights the safety cost of inaction.** If a neural network pedestrian detection system is correct 99.7% of the time but the human driver it assists is correct only 95% of the time, the system with higher accuracy is the safer one. Demanding deterministic certification for probabilistic systems may prevent deployment of technology that would save lives. The most dangerous version of the August Problem may be that deterministic purity delays systems that are measurably safer than what they would replace.