*Originally published in [The AI Monitor](https://theaimonitor.substack.com/p/shifting-gears-the-uks-evolving-ai) · 2024-09-05*

---

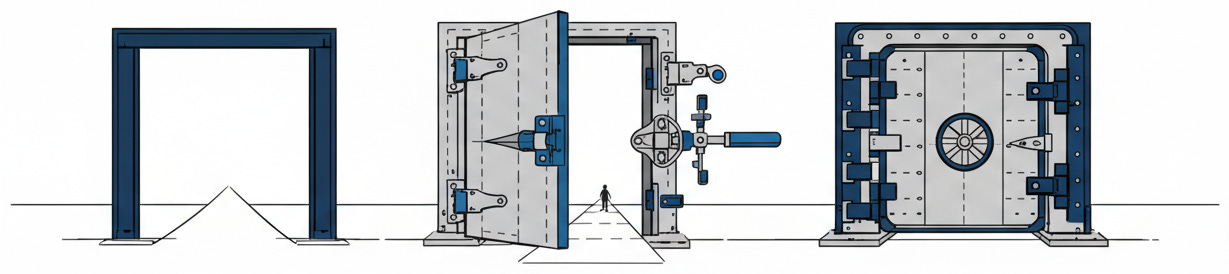

Every democracy that encounters a transformative technology passes through the same three gates. Denial. Principled hand-wringing. Finally, the law. The United Kingdom has compressed a decade’s worth of that cycle into three years. This speed is not indecision. It is a hard problem being understood in real time.

Between 2021 and mid-2024, the UK moved from voluntary ideals to toothless principles and finally to statutory commitments targeting frontier AI. That arc is the closest thing we have to a natural experiment in how democracies learn to regulate technology they barely understand.

## The Voluntary Era: 2021 to 2023

The National AI Strategy of September 2021 set the tone. The UK would be “pro-innovation,” welcoming AI companies with the promise that regulation would be light, contextual, and friendly. The government would not create a new regulator or pass new legislation. Instead, existing bodies like the ICO, the FCA, Ofcom, and the CMA would handle AI within their own sectors, guided by broad principles.

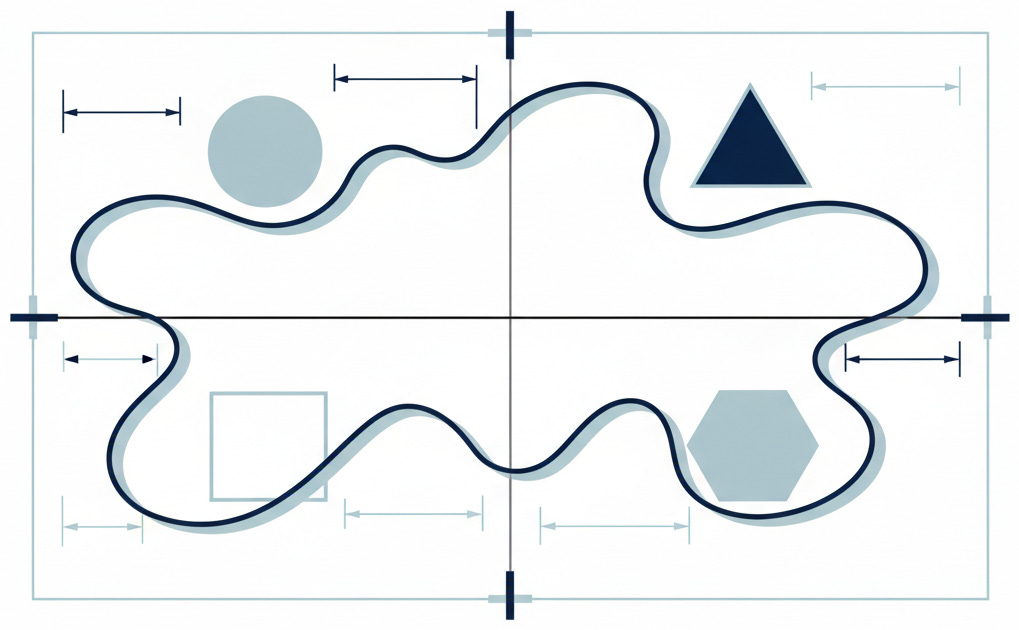

In March 2023, the white paper “A pro-innovation approach to AI regulation” gave those principles formal shape. It articulated five cross-sectoral standards: safety, security, and robustness; appropriate transparency and explainability; fairness; accountability and governance; and contestability and redress. None had the force of law. It also proposed a central function to coordinate regulators, maintain a cross-economy AI risk register, and align guidance across sectors.

On paper, the architecture looked reasonable. In practice, it had a structural flaw that no amount of coordination could fix. The principles were non-statutory. The government asked regulators to implement the principles but gave those regulators no legal power to enforce them. In safety-critical engineering, that would count as a design flaw: a component expected to work only when it chooses to.

The Ada Lovelace Institute warned in July 2023 that without a statutory duty, regulators would be “obliged to deprioritise or even ignore the AI principles” whenever those principles conflicted with existing legal mandates. The House of Lords Communications and Digital Committee went further in February 2024, calling reliance on voluntary commitments “naive.”

The assessment was polite. The reality was structural. A voluntary framework assumes that the incentives of regulators and the goals of the government are perfectly aligned. They are not. When a principle conflicts with a legal mandate, the law wins every time. The architecture of the voluntary era guaranteed its own failure. Principles change what people say. Laws change what people do.

By April 2024, when the regulators had published their own AI strategies, the evidence was clear. Different regulators were interpreting the same five principles in different ways, with different levels of urgency and resource. The design itself had baked in the coordination problem.

## The White Paper’s Contribution and Its Limits

The 2023 white paper performed a necessary function. It named the problems, defined a shared vocabulary, and forced a national conversation about what AI governance should look like. The five principles, though unenforceable, gave regulators and companies a common reference point. The proposed central function acknowledged that AI does not respect sector boundaries.

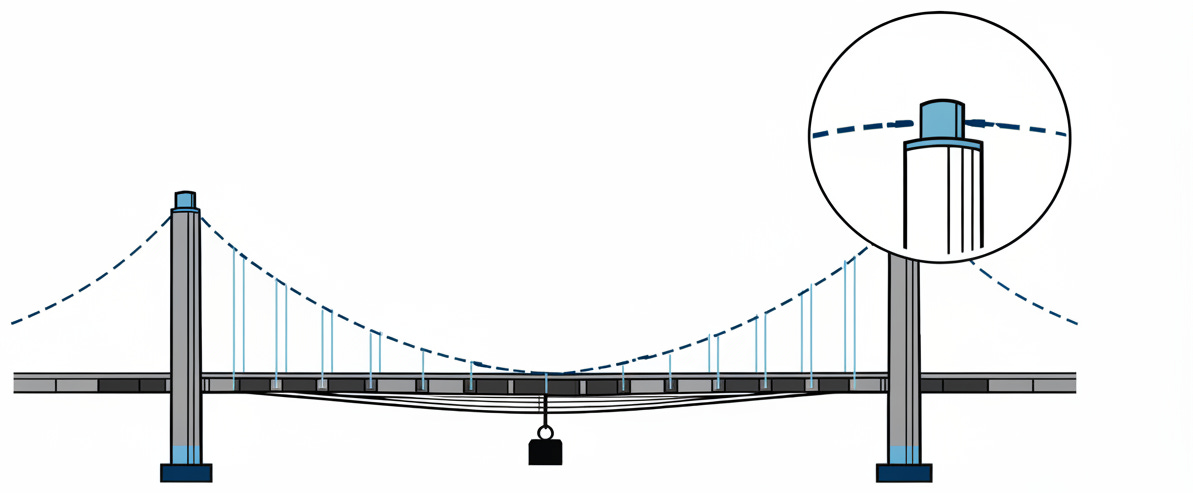

The white paper had a fatal blind spot. It assumed a world of neat industry silos where a bank regulator handles banking and a health regulator handles medicine. General-purpose AI does not respect these boundaries. A large language model deployed in healthcare, finance, and education simultaneously lives in every jurisdiction and none of them. The proposed coordination mechanism was too modest to bridge gaps that were widening faster than the government could measure.

The white paper looked good on the presentation stage. Its architecture was precise, its vocabulary careful. But what works in principle rarely survives contact with the real regulatory landscape. Its greatest contribution was making the limitations of voluntary governance impossible to ignore.

## Labour’s Statutory Shift: Mid-2024

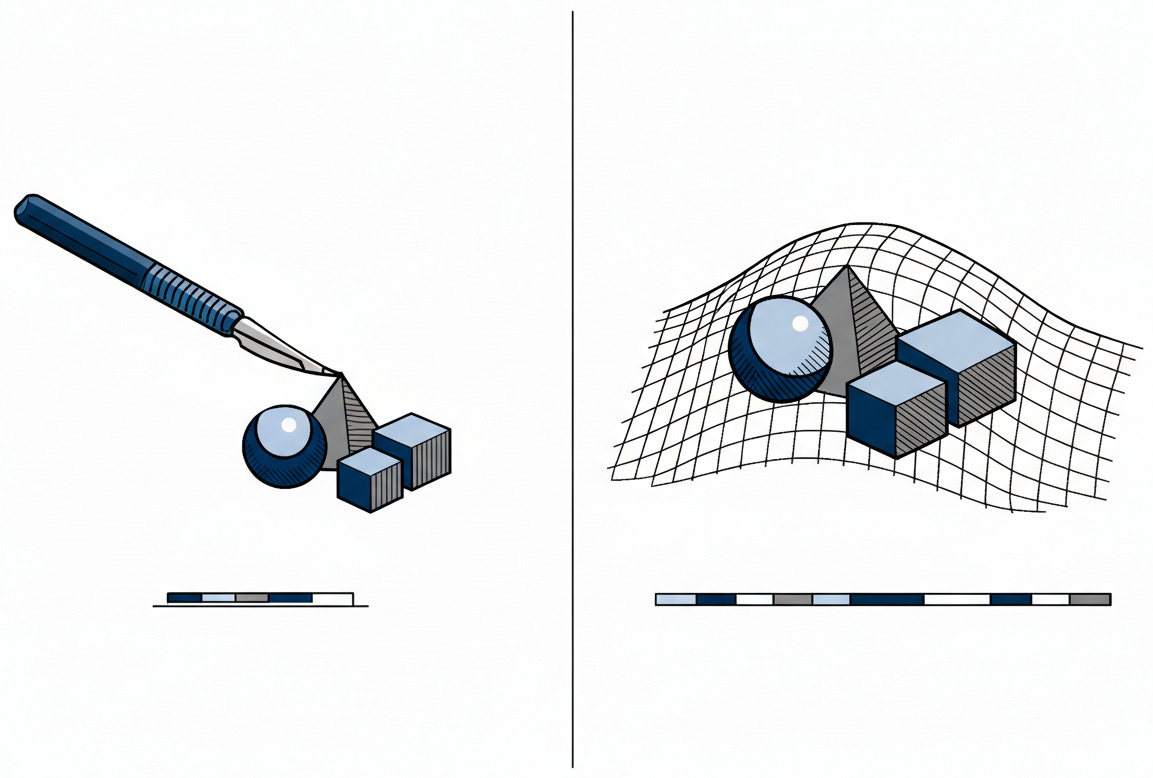

When Labour took office in July 2024, the era of asking nicely ended. The party’s manifesto committed to “introducing binding regulation on the handful of companies developing the most powerful AI models.” Peter Kyle, the new Technology Secretary, was blunt: “We will move from a voluntary code to a statutory code, so that those companies engaging in that kind of research and development have to release all of the test data and tell us what they are testing for.”

The King’s Speech on July 17, 2024 confirmed the direction. The government would “seek to establish the appropriate legislation to place requirements on those working to develop the most powerful artificial intelligence models.” The language was careful. The intent, unmistakable. It also announced a Regulatory Innovation Office to coordinate and support existing regulators.

The proposal was targeted: mandatory safety testing with independent oversight for frontier models, binding codes of practice on a legal footing, and a coordination office to help regulators act together. The scope was narrow by design. This was not a new super-regulator with sweeping enforcement powers.

This is a surgical strike, not a blanket ban. Unlike the EU’s AI Act, which classifies risk across the entire ecosystem, the UK targets only the frontier. Legal analyses, including Steptoe’s, noted that Labour’s framework would “not go as far as the EU’s AI Act.” The ambition is precision, not comprehensive coverage. The approach acknowledges that regulating the application of AI is a different problem than regulating the engine itself.

Labour’s shift acknowledged what the voluntary era had proved: that in the governance of powerful technology, the distance between “should” and “must” is the distance between aspiration and accountability. The government stopped trying to negotiate with the technology and started trying to constrain it.

## The International Frame

The UK’s trajectory becomes clearer against the backdrop of how other powers approach the same problem.

The EU’s AI Act, which entered into force on August 1, 2024, is the most comprehensive attempt at AI legislation anywhere. It classifies all AI systems by risk tier, from outright prohibitions through high-risk compliance obligations down to minimal-risk systems with no specific requirements. The UK targets only frontier models. The difference is architectural: the EU governs the technology wherever it appears; the UK governs only the most powerful models, wherever they are built.

The United States has channelled roughly $6.4 billion in federal AI activities through the National AI Initiative while leaving regulation largely to executive orders and sector-specific agencies. The strategy is familiar: invest heavily, regulate cautiously, trust the market to self-correct. The gap between that investment enthusiasm and the calls for oversight mirrors the UK’s own voluntary era. The pattern is the same; the inertia is just larger.

The UK now occupies a deliberate middle ground. It has moved beyond voluntarism but stopped well short of the EU’s comprehensive classification system. For companies operating across borders, this creates a layered reality: EU compliance as the floor, UK statutory codes for frontier models, and American rules that vary by sector and administration.

The Bletchley Park AI Safety Summit in November 2023, where 28 countries and the EU signed voluntary safety testing commitments, revealed the ambition. Labour’s statutory shift revealed the recognition that ambition without legal backing was not enough.

Post-Brexit, the UK’s positioning carries a distinct tension, and the stakes are not abstract. The EU’s data adequacy decision for the UK, the only one ever issued with a built-in expiry clause, means regulatory divergence has a concrete cost. Drift too far from European standards and UK companies lose frictionless data flows with their largest trading partner. Align too closely and the “Brexit dividend” of regulatory flexibility evaporates. Every company operating across the Channel faces a live commercial calculation.

## What the Trajectory Reveals

The UK’s journey from voluntary principles through articulated frameworks to statutory commitments compresses a pattern that usually takes democracies a decade or more.

The first instinct is always to trust the innovators, set broad principles, and avoid stifling growth. This is not foolish. It reflects genuine uncertainty about what regulation should look like when the technology changes faster than any legislative process can track.

The second gate is recognition. Principles exist, language is precise, but nothing compels compliance. Regulators produce strategies. Companies publish ethics charters. Everyone uses the right vocabulary. The gap between the architecture and its enforcement becomes visible only when the principles are tested and no one has the statutory power to act.

The third gate is law. Not because policymakers suddenly become wiser, but because the evidence accumulates. Voluntary commitments do not change the behaviour of actors whose incentives point elsewhere. Designing rules that hold despite those incentives has a name: mechanism design. Statutory footing is not overreach. It is learning.

The UK has passed through all three. The remaining question is not whether regulation is coming, but whether the momentum of law, once started, will carry governance further than anyone planned.

We trust that progress is linear, that we can negotiate with technology as equals. But the distinction between “should” and “must,” between a principle and a law, is the distinction between a wish and a constraint. Constraints are not the enemy of innovation. They are the condition for its survival. Every democracy building its relationship with AI will pass through these same three gates. The UK simply got there first.

* * *

### Further Reading, Background and Resources

**Sources & Citations**

**[A Pro-Innovation Approach to AI Regulation (UK Government White Paper, March 2023)](https://www.gov.uk/government/publications/ai-regulation-a-pro-innovation-approach/white-paper)**

The document that launched a thousand strategies and enforced none of them. Read it not for what it proposed, but for the architectural assumption it embedded: that AI governance could be delegated to sector-specific regulators without statutory compulsion. That assumption is what Labour spent mid-2024 dismantling.

**[Regulating AI in the UK (Ada Lovelace Institute, July 2023)](https://www.adalovelaceinstitute.org/report/regulating-ai-in-the-uk/)**

The most important critical analysis of the white paper era. The Institute’s 18 recommendations identified the structural flaw at the heart of the voluntary approach: without a statutory duty, regulators would be “obliged to deprioritise or even ignore the AI principles” when they conflicted with existing legal mandates. This report reads, in retrospect, like a blueprint for everything Labour announced twelve months later.

**[Large Language Models and Generative AI (House of Lords Communications and Digital Committee, February 2024)](https://lordslibrary.parliament.uk/large-language-models-and-generative-ai-house-of-lords-communications-and-digital-committee-report/)**

When the House of Lords calls your governance framework “naive,” you have a credibility problem. This committee report recommended mandatory safety tests for high-risk models months before Labour adopted that exact position. The Lords’ analysis sharpened the case that voluntary commitments were structurally misaligned with how frontier AI companies operate.

**[EU AI Act -- Regulation (EU) 2024/1689 (European Parliament, June 2024)](https://www.europarl.europa.eu/topics/en/article/20230601STO93804/eu-ai-act-first-regulation-on-artificial-intelligence)**

The comprehensive counterpoint. The EU chose to classify every AI system by risk tier; the UK chose to regulate only the frontier. Reading the EU framework alongside Labour’s proposals clarifies what the UK deliberately chose not to do, and why that restraint is both the strategy’s greatest strength and its most obvious vulnerability.

* * *

**For Context**

**[The Bletchley Declaration (AI Safety Summit, November 2023)](https://www.gov.uk/government/publications/ai-safety-summit-2023-the-bletchley-declaration/the-bletchley-declaration-by-countries-attending-the-ai-safety-summit-1-2-november-2023)**

Bletchley matters not for what it achieved but for what it revealed. Twenty-eight countries and the EU signed up to safety testing commitments that were non-binding. Labour explicitly cited these as insufficient when making the case for statutory codes. This was the high-water mark of the voluntary era and the evidence base for moving beyond it.

**[UK Government Response to the AI White Paper Consultation (February 2024)](https://assets.publishing.service.gov.uk/media/65c1e399c43191000d1a45f4/a-pro-innovation-approach-to-ai-regulation-amended-governement-response-web-ready.pdf)**

The last major policy document of the Conservative approach. It confirmed the non-statutory framework and announced the AI and Digital Hub pilot. Read this as the fullest expression of what the voluntary architecture was trying to become before the political ground shifted.

* * *

**Practical Tools**

**Companies operating across the UK and EU** face a dual-compliance reality. Use the EU AI Act’s risk classification as the compliance baseline, then layer UK-specific frontier model obligations on top. The [White & Case AI Watch Global Regulatory Tracker](https://www.whitecase.com/insight-our-thinking/ai-watch-global-regulatory-tracker-united-kingdom) maintains a useful running comparison.

**Sector-specific AI deployers** should engage directly with their relevant UK regulator’s published AI strategy (available via [GOV.UK’s consolidated regulator updates, April 2024](https://www.gov.uk/government/publications/regulators-strategic-approaches-to-ai/regulators-strategic-approaches-to-ai)). Each regulator is interpreting the five principles differently; knowing your regulator’s specific interpretation is more useful than knowing the principles in the abstract.

* * *

**Counter-Arguments**

**The voluntary era was not a failure -- it was a necessary calibration period.**

Voluntary principles allowed regulators to develop domain-specific expertise in AI governance before being handed statutory tools they might not have known how to wield. The ICO, FCA, and CMA each published substantive AI strategies by April 2024 precisely because the principles gave them a framework to learn within. The voluntary era built the institutional knowledge that statutory regulation now relies on.

**Labour’s narrow targeting of frontier models may create a false sense of security.**

By focusing binding regulation on “the handful of companies developing the most powerful AI models,” Labour’s framework leaves the vast majority of AI systems -- including those deployed at scale in hiring, credit scoring, and benefits allocation -- in the same voluntary regime the essay criticises. The most immediate harms from AI in the UK are not coming from frontier models. A regulatory pivot that addresses GPT-scale models while leaving automated benefits decisions unregulated is solving tomorrow’s problem while ignoring today’s.

**The EU’s comprehensive approach may prove more coherent.**

The UK’s layered system -- voluntary principles for most AI, statutory codes for frontier models, sector regulators interpreting guidance differently -- creates a patchwork where obligations depend on which regulator you fall under and which country you serve. For multinational companies, a single comprehensive framework may be easier to comply with than overlapping regimes.

**The “three gates” narrative may not generalise.**

The UK’s path was shaped by factors that are not universal: a post-Brexit imperative to demonstrate regulatory sovereignty, a change of government that made the policy pivot politically costless, and the Bletchley Summit as a high-profile test case. Japan has maintained a voluntary approach without the same political pressure. India is building sector-specific governance without a white paper phase. The UK’s arc is instructive, but treating it as a universal template risks mistaking one country’s political circumstances for a law of regulatory physics.