*Originally published in [The AI Monitor](https://theaimonitor.substack.com/p/shadow-ai-on-the-rise) · 2025-06-06*

---

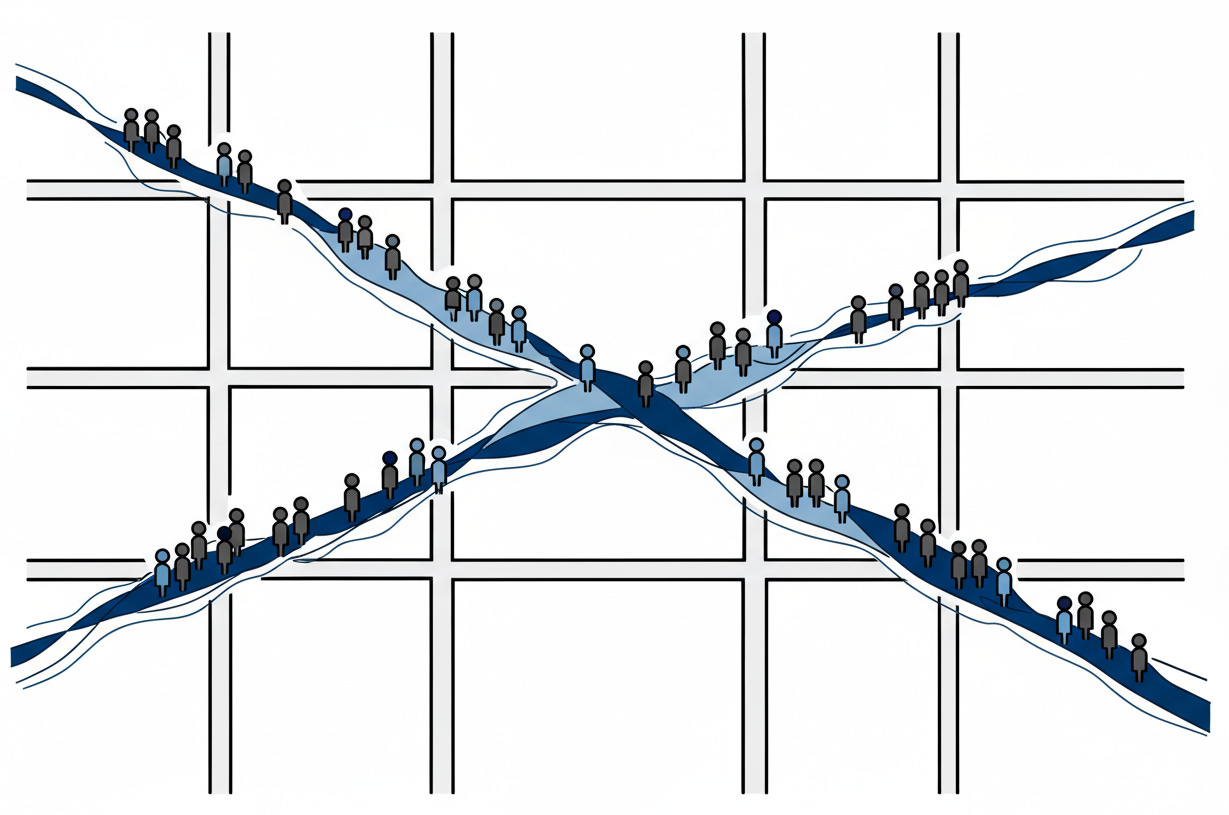

Capability does not wait for permission. It creates a reality of its own.

We assume that organization requires authorization. We believe that if the policy does not permit the tool, the work simply halts. But this is a delusion. Every prohibition creates the behavior it forbids. Shadow AI is not a risk to be managed. It is a vote that has been cast. It is the gap between what organizations provide and what their people actually need, made visible.

## The Governance Vacuum

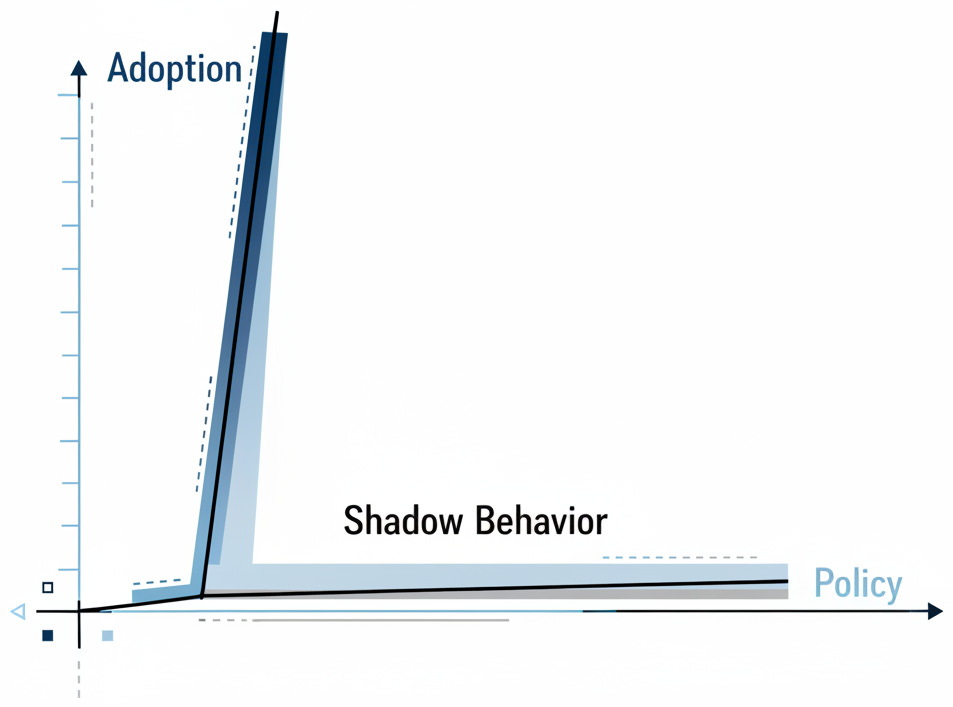

Capability always precedes governance. Within months of release, generative AI permeated the Fortune 500. Usage was highest among upper managers, the very people tasked with writing the policies. This reveals the disconnect: the prohibition of AI was often a theoretical exercise performed by people who were already using it.

The adoption curve was not gradual. It was vertical.

By mid-2023, 57% of American workers had tried ChatGPT. Fifteen months later, McKinsey found that 91% of surveyed employees were using generative AI for work. For most organizations, it happened before anyone in leadership noticed.

Within nine months of ChatGPT’s public release, 80% of Fortune 500 companies had employees using generative AI. Upper managers were three times more likely to use it than junior staff. More than half operated without formal approval. Yet only 17% of U.S. workers reported clear AI policies from employers. Nearly 70% never received training on safe AI use. This policy vacuum created an underground economy where employees hid their usage from management, not because they were doing something wrong, but because there was no sanctioned way to do it right.

Faced with this reality, many companies reached for the familiar tool: the ban. In 2023, major corporations from Accenture to Samsung moved to restrict ChatGPT access. Two-thirds of top pharmaceutical companies joined in.

The bans worked about as well as Prohibition itself.

Shadow AI flourishes when organizations prohibit without providing alternatives. Employees find workarounds. They use personal devices. They ignore policies. Visible risk is manageable. Underground risk metastasizes.

## The Real Costs of Invisibility

The fear is rational because the risk is concrete.

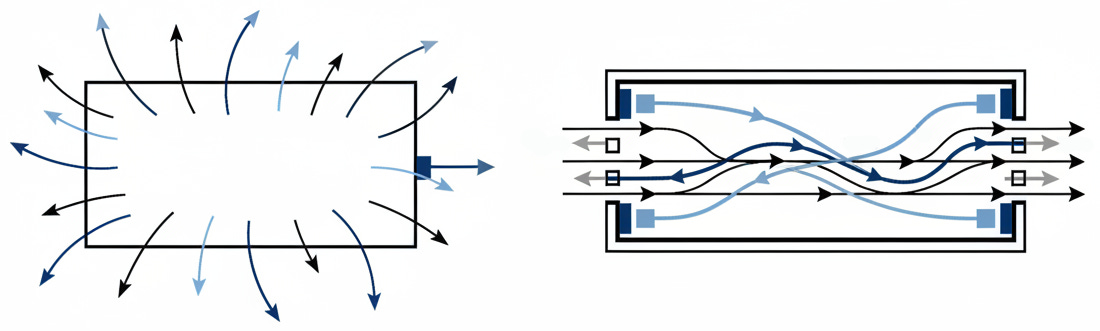

In safety-critical systems, we distinguish between a fault and a failure. A fault is a bug; a failure is when the system crashes. Here, the fault is the lack of secure tools. The failure is the data leak. When Samsung engineers pasted proprietary code into a public model, they were not acting maliciously. They were optimizing for efficiency in an environment where efficiency was not officially provided. The risk comes from lack of containment, not malice.

A UK survey found that one in five organizations had experienced this kind of exposure: sensitive data leaked through employee use of generative AI. Employees do not want to leak data. They simply do not understand where data goes once it leaves their clipboard.

Beyond data leaks lurk compliance nightmares. Healthcare workers who enter patient information into consumer AI tools risk violating HIPAA. FINRA reminds financial firms that AI does not exempt them from recordkeeping rules. Under GDPR, sending EU personal data to an external AI service could be unlawful. The EU AI Act, now in force, mandates transparency for high-risk uses. The regulatory landscape is already active.

Yet the costs of prohibition compound too. Organizations that ban AI watch their competitors accelerate. Employees who cannot use sanctioned tools find unsanctioned ones. The risk goes underground.

## What the Adopters Learned

Smart organizations discovered the alternative to prohibition by treating shadow usage as product research. When Morgan Stanley discovered wealth advisors using ChatGPT, they did not ban it. They built a secure internal proxy. They met the demand where it lived. Morgan Stanley did not invent the use case; their employees did. The bank simply provided a safe container for behavior that was already occurring. By late 2023, 98% of advisor teams had adopted the tool. Shadow AI disappeared because the official solution was superior to the underground alternative.

PwC applied the same logic at scale, deploying ChatGPT Enterprise to over 100,000 users. In both cases, the organization stopped fighting the user’s judgment and started securing it. With clear governance, training, and controls in place, PwC turned employee enthusiasm into measurable gains: 20-40% productivity increases among users of its internally developed ChatPwC tool.

A pattern emerges. The organizations that succeeded did not fight their employees’ judgment about which tools made them productive. They built secure channels for that judgment to operate. They acknowledged the underlying truth: their people knew something they did not.

## Building Governance That Works

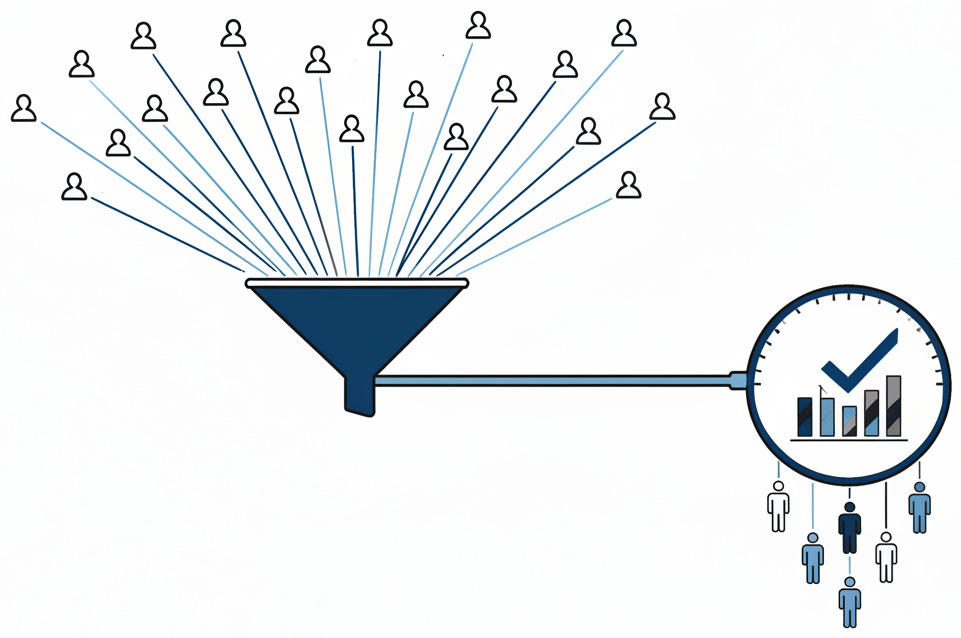

Governance that works focuses on enablement rather than prohibition. Clear policies are less important than practical ones: specifically, which tools are approved, how data is handled, and when outputs require verification. Successful governance brings IT, security, legal, and business units into the same room. McKinsey found 91% of early adopters had implemented governance structures for gen AI.

But policy without infrastructure is theater. Enterprise platforms that keep data inside the perimeter are available now. The barrier is not technical capability; it is the decision to prioritize safety over speed. The 70% of workers who never received AI training represent both a risk and an opportunity. Teach data classification. Teach output verification. Teach approved workflows. The investment returns compound.

## The Strategic Reframe

This pattern is not unique to AI. It applies to any technology your employees adopt before you do, from spreadsheets to smartphones to generative AI.

Shadow usage is not defiance. It is a vote. When employees route around policy, they are correcting the map. They are demonstrating that the official process is too slow for the reality of the work.

In a controlled experiment, developers using GitHub Copilot completed a coding task about 56% faster. Deloitte found 83% of generative AI users report productivity boosts. The ROI changes the conversation from cost to competitive necessity.

The past two years established a clear trajectory. Organizations that provide secure, monitored AI access see productivity gains while maintaining security. Those that rely on bans watch shadow AI proliferate until an incident forces a reckoning.

The winners will not be those with the strictest policies. They will be those who learn to read the signal.

We assume the organization draws the map. But our people are already drawing it for us. We do not get to choose whether the paths exist. We only get to choose whether to secure them.

* * *

### Further Reading, Background and Resources

## Sources & Citations

* **[Salesforce Generative AI Snapshot Research Series](https://www.salesforce.com/news/stories/ai-at-work-research/)** (October 2023). A double-anonymous survey of 14,000 employees across 14 countries. The methodology matters: double-anonymous design eliminates the social desirability bias that plagues self-reported usage surveys. When employees admit to shadow behavior under these conditions, the numbers mean something.

* **[Samsung ChatGPT Data Leak Coverage](https://www.darkreading.com/vulnerabilities-threats/samsung-engineers-sensitive-data-chatgpt-warnings-ai-use-workplace)** (April 2023). The canonical case study. Three incidents within three weeks of lifting an internal ban: source code, optimization algorithms, and meeting transcripts all entered the public model. The lesson is not that bans work, but that lifting bans without governance fails catastrophically. Also documented in the [AI Incident Database](https://incidentdatabase.ai/cite/768/).

* **[Business.com ChatGPT Workplace Usage Study](https://www.business.com/technology/chatgpt-usage-workplace-study/)** (2023). The source for the “upper managers are 3x more likely to use ChatGPT” finding. When nearly half of upper management uses AI professionally while only 17% of workers report clear policies, the policy hypocrisy becomes visible.

* **[PwC ChatGPT Enterprise Deployment Announcement](https://www.pwc.com/us/en/about-us/newsroom/press-releases/pwc-us-uk-accelerating-ai-chatgpt-enterprise-adoption.html)** (May 2024). The counter-example to Samsung. PwC deployed ChatGPT Enterprise to 100,000+ employees, becoming OpenAI’s largest enterprise customer. This is what “providing alternatives” looks like at scale.

* **[FINRA Regulatory Notice 24-09](https://www.finra.org/rules-guidance/notices/24-09)** (June 2024). The regulatory reality for financial services. FINRA explicitly states that existing rules on supervision, communications, and recordkeeping apply to AI technologies.

## For Context

* **[McKinsey: Gen AI’s Next Inflection Point](https://www.mckinsey.com/capabilities/people-and-organizational-performance/our-insights/gen-ais-next-inflection-point-from-employee-experimentation-to-organizational-transformation)** (August 2024). The 91% adoption figure is 91% of survey respondents, not all employees. The real insight: only 13% of companies have implemented multiple AI use cases despite near-universal employee experimentation. The governance vacuum in statistical form.

* **[HIPAA Journal: Is ChatGPT HIPAA Compliant?](https://www.hipaajournal.com/is-chatgpt-hipaa-compliant/)** (Updated 2024). Standard ChatGPT is not HIPAA-compliant because OpenAI will not enter Business Associate Agreements for consumer tiers. When healthcare workers input patient information, this is the legal framework they are violating.

* **Fault vs. Failure (Safety Engineering).** The essay borrows from safety-critical systems terminology (ISO 26262, DO-178C). A fault is an abnormal condition (a latent defect), while a failure is the observable malfunction. Faults cause errors; errors cause failures. The analogy: the fault is organizational (no secure tools), the failure is operational (data leak).

## Practical Tools

**Shadow AI Risk Assessment Framework:**

* **Policy Clarity:** What percentage of employees can articulate which AI tools are approved and what data categories are prohibited?

* **Alternative Availability:** For every AI capability employees might seek externally, does an approved internal alternative exist?

* **Training Coverage:** What percentage of employees have received guidance on data classification for AI inputs and output verification protocols?

* **Detection Capability:** Can the organization identify when employees access external AI tools?
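
The four questions above can be recorded as a simple scorecard. What follows is a minimal sketch, not a validated instrument: the field names, the `find_gaps` helper, and the 80% target are all assumptions made for illustration, and an organization would set its own thresholds.

```python
from dataclasses import dataclass


@dataclass
class ShadowAIAssessment:
    """Answers to the four framework questions (field names are illustrative)."""
    policy_clarity_pct: float        # % of employees who can name approved tools and prohibited data
    alternative_coverage_pct: float  # % of sought-after AI capabilities with an approved internal option
    training_coverage_pct: float     # % of employees trained on data classification and output verification
    can_detect_external_use: bool    # whether external AI tool access is visible to the organization


def find_gaps(a: ShadowAIAssessment, threshold_pct: float = 80.0) -> list[str]:
    """Return the framework dimensions that fall short of an assumed target."""
    gaps = []
    if a.policy_clarity_pct < threshold_pct:
        gaps.append("Policy Clarity")
    if a.alternative_coverage_pct < threshold_pct:
        gaps.append("Alternative Availability")
    if a.training_coverage_pct < threshold_pct:
        gaps.append("Training Coverage")
    if not a.can_detect_external_use:
        gaps.append("Detection Capability")
    return gaps


# Example: 17% policy clarity and 30% training coverage loosely echo the
# essay's survey figures; the other two values are invented for illustration.
example = ShadowAIAssessment(17.0, 50.0, 30.0, False)
print(find_gaps(example))
```

With these example inputs every dimension is flagged, which is roughly the position the essay describes for organizations that banned tools without providing alternatives.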

**AI Governance Checklist:**

* Cross-functional oversight including AI-specific security review

* Documented data classification rules for AI inputs

* Output verification requirements for AI-generated work product

* Feedback mechanisms for employees to request new tool approvals

* Regular policy review cycles as AI capabilities evolve
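
To make the "documented data classification rules for AI inputs" item concrete, here is one possible sketch of a pre-submission check. The rule names and regular expressions are hypothetical placeholders, not a recommended rule set; real rules would come from the organization's own classification policy, and pattern matching of this kind will miss a great deal.

```python
import re

# Hypothetical classification rules: label -> pattern. These are assumptions
# for illustration only, not a complete or authoritative rule set.
RULES = {
    "email_address": re.compile(r"[\w.+-]+@[\w-]+\.[\w.-]+"),
    "us_ssn_like": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "confidential_marker": re.compile(r"\b(confidential|internal only)\b", re.IGNORECASE),
}


def classify_input(text: str) -> set[str]:
    """Return the labels of every rule that matches the proposed AI input."""
    return {label for label, pattern in RULES.items() if pattern.search(text)}


def is_allowed(text: str) -> bool:
    """Allow the input only if no classification rule matches."""
    return not classify_input(text)


print(classify_input("Summarize this CONFIDENTIAL memo for jane.doe@example.com"))
print(is_allowed("Draft a polite out-of-office reply"))
```

A check like this is better suited to prompting the employee ("this looks like restricted data; use the approved tool") than to silently blocking, in keeping with the essay's emphasis on enablement over prohibition.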

## Counter-Arguments

**“The productivity gains are overstated and the risks understated.”** The GitHub Copilot “56% faster” finding measures time-to-completion without fully accounting for bugs introduced by AI-generated code. Meanwhile, data exposure incidents like Samsung’s are likely underreported. The true cost-benefit analysis may be far less favorable than the essay suggests.

**“Prohibition works when properly enforced.”** Well-resourced organizations with strong security cultures can effectively prohibit shadow AI through network-level blocking, endpoint monitoring, and meaningful consequences. The pharmaceutical industry’s 65% ban rate reflects genuine concern about competitive intelligence. Some organizations legitimately cannot accept any AI-related data exposure risk.

**“Enterprise AI tools create their own governance problems.”** Enterprise deployment introduces vendor lock-in, API dependency, and concentrated risk. A ChatGPT Enterprise outage affects 100,000 PwC employees simultaneously. Moving from shadow AI to sanctioned AI transforms distributed, individual risk into concentrated, organizational risk.

**“The essay underweights regulatory uncertainty.”** The EU AI Act’s enforcement timeline means any governance structure built today will require substantial revision multiple times before stabilizing.