*Originally published in [The AI Monitor](https://theaimonitor.substack.com/p/next-generation-cybersecurity) · 2024-10-29*


---


Security is not a race. It is a pursuit.

We build higher walls. We hire smarter analysts. We deploy better AI. We assume that matching the enemy tool for tool will let the defender’s weight become decisive. It won’t. The tools work. The institutions cannot move at the speed the tools demand.

The AI revolution has exposed the anatomy of the breach. The asymmetry between attackers and defenders has become structural, not technical.

Attackers operate without compliance, change management, or procurement. They iterate in hours; enterprises iterate in quarters. AI does not bridge this gap. It widens it.

Every organization celebrating its new AI-powered threat detection should consider a simple fact: its patch deployment is measured in weeks. The attacker’s response time is measured in minutes.


* * *

The conventional claim that AI finally gives defenders the edge misses the point. Machine learning spots patterns humans miss. Behavioral analytics catch anomalies in real time. Automated systems quarantine threats before they spread. These are capabilities. They are not solutions.

In 2019, criminals used AI-generated voice cloning to impersonate a CEO and extract $243,000. The employee on the other end heard his boss’s German accent, his speech patterns, his particular way of issuing urgent requests. He followed instructions and transferred the funds to a Hungarian bank account. Only when a later call came demanding more did suspicion finally stir. The attack took three minutes. The security team discovered it days later.

This is not an aberration. WormGPT, an unrestricted language model marketed explicitly to cybercriminals, generates phishing emails that are grammatically flawless and contextually appropriate. A single operator can now produce thousands of distinct attacks in the time it once took to craft one. The barrier to entry has collapsed. The sophistication floor has risen. The economics of attack have shifted permanently.

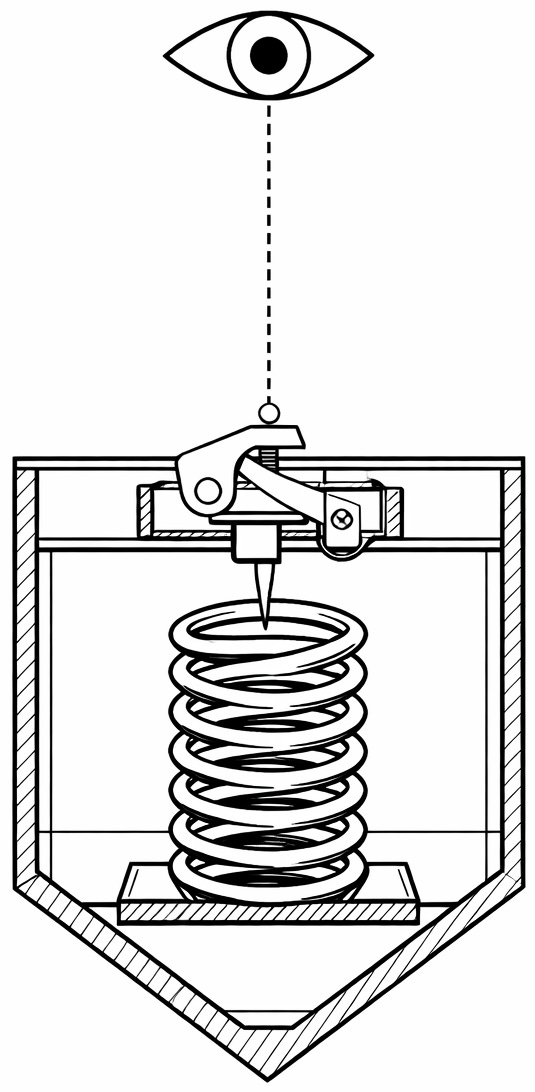

In 2018, IBM researchers demonstrated DeepLocker, a proof-of-concept malware that uses AI to remain dormant until it identifies a specific target through facial recognition. The payload hides inside legitimate applications. It waits. When a webcam captures the right face, it activates.

The trigger conditions are practically impossible to reverse-engineer because they exist as weights in a neural network, not as readable code. There is no signature to detect. No behavior to flag. Just patient waiting until the conditions align.


The technology is not hypothetical. It exists. The question is not whether attackers will use it, but how swiftly enterprises can respond when they do.

Both sides have access to AI. Both sides will use it. The advantage goes to whoever can observe, orient, decide, and act faster. On this dimension, the attacker wins decisively.

A cybercriminal can test a phishing variant, fail, adjust, and try again within an hour. No approval process. No change advisory board. The feedback loop is immediate.

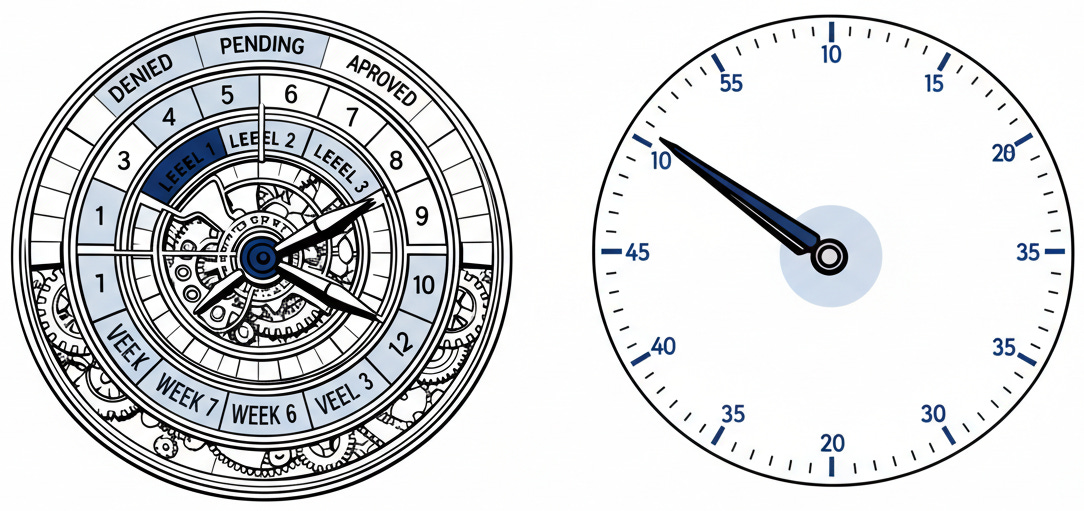

An enterprise facing a novel attack faces a different reality. The patch requires staging. The deployment requires scheduling. The change requires documentation. Multiple stakeholders must sign off. Five to seven approval gates stand between detection and deployment. Weeks pass. Sometimes months.

AI makes both sides quicker, but “quicker” means different things in these two contexts. Attackers get better at attacking. Defenders get better at detecting what they already understand. The gap between detection and response remains a chasm. The problem is architectural, not technical.
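The arithmetic behind that chasm can be sketched in a few lines. Every number below is an illustrative assumption rather than a measurement: six approval gates (the midpoint of the five to seven mentioned above), a hypothetical three days of waiting per gate, and an attacker who completes one test-fail-adjust cycle per hour.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Loop:
    """One side's feedback loop, reduced to a single cycle time."""
    name: str
    hours_per_iteration: float


def defender_latency_hours(gates: int, hours_per_gate: float) -> float:
    """Time from detection to deployment if approval gates run one after another."""
    return gates * hours_per_gate


def iterations_within(loop: Loop, window_hours: float) -> int:
    """Whole iterations of a loop that fit inside a time window."""
    return int(window_hours // loop.hours_per_iteration)


# Hypothetical values, chosen only to illustrate the shape of the problem.
attacker = Loop("attacker", hours_per_iteration=1.0)  # assumed: one variant per hour
gates = 6                                             # assumed: midpoint of five to seven
hours_per_gate = 3 * 24                               # assumed: three days per gate

latency_hours = defender_latency_hours(gates, hours_per_gate)
attacker_iterations = iterations_within(attacker, latency_hours)

print(f"Defender latency: {latency_hours / 24:.0f} days")
print(f"Attacker iterations in that window: {attacker_iterations}")
```

Under these assumed values, a single defensive response takes 18 days, and the attacker completes 432 iterations in the same window. Faster detection changes neither number. Only fewer or shorter gates do.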

This explains an otherwise puzzling observation: enterprises spend more on security than ever before, deploy more sophisticated tools than ever before, hire more analysts than ever before, and suffer more breaches than ever. The tools work. The institutions cannot move at the speed the tools demand.


The speed gap extends beyond technical response. Capabilities advance faster than the policies governing them. A security team that wants to implement an autonomous response system faces questions its legal department cannot answer. The liability for an automated system blocking a legitimate transaction is undefined. There is no settled way to audit a neural network’s reasoning. The frameworks do not exist.

While enterprises deliberate, attackers push forward. They face no such constraints. The ethical boundaries that slow legitimate AI adoption do not exist in criminal operations. Every safeguard built into commercial AI tools gets stripped away in the underground versions. Every friction that protects companies from mistakes also protects attackers from consequences. The asymmetry runs deeper than technology. It is existential.

The trajectory here is clear. AI is not making security better or worse in absolute terms. It is amplifying existing advantages. Attackers who moved quickly before AI will move more quickly with it. Institutions that moved slowly will find their slowness exposed more brutally than before. The technology is neutral. The structural advantages it confers are not.

* * *

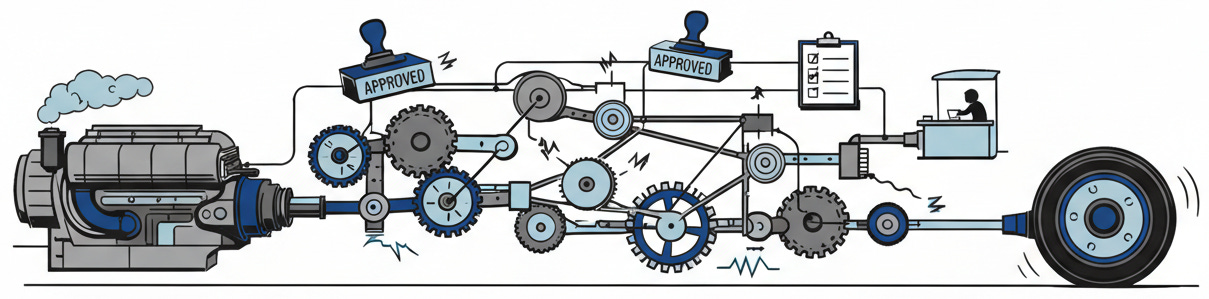

The security industry sells AI as armor. It is actually acceleration. And acceleration favors whoever was already moving fastest. Defense at machine speed requires decision-making at machine speed. Most organizations are not built for that. They were built for predictability, accountability, and control.

The uncomfortable truth is that most companies cannot outrun their own bureaucracy. They cannot implement defenses faster than they approve them. They cannot adapt quicker than they document. The attacker’s advantage is not superior technology. It is freedom from the very structures that make enterprises enterprises.

Solving this requires something more difficult than purchasing better tools. It requires examining why the gap between detection and response exists in the first place, and whether the processes creating that gap are still worth their cost. The question that matters is not “how do we detect threats faster?” but “how much response latency are we willing to trade for control?” For most institutions, the answer will be painful. The controls that slow attackers also slow defenders. The oversight that prevents mistakes also prevents adaptation. The governance that satisfies regulators also satisfies adversaries who count on response times measured in weeks.

We trust that process protects us. We trust that documentation shields us. We trust that the structures designed to manage risk will guard us against failure. In an age of AI, process is the vulnerability.


The very systems we built to keep us safe have become the weakness our adversaries exploit. The attackers are betting that our safeguards will remain static while the tools accelerate.

It is a safe bet.

* * *

### Further Reading, Background and Resources

**Sources & Citations**

**[World Economic Forum: Global Cybersecurity Outlook 2024](https://www3.weforum.org/docs/WEF_Global_Cybersecurity_Outlook_2024.pdf)** (January 2024)

The WEF’s annual report, released at Davos and developed in collaboration with Accenture, surveyed cybersecurity leaders from June to November 2023. The headline finding: 55.9% believe generative AI will give the overall advantage to attackers over the next two years. Fewer than one in ten (8.9%) believe defenders will hold the advantage. This is not speculation from journalists or vendors with products to sell; it is the assessed judgment of practitioners. When the people responsible for defending networks believe they are structurally disadvantaged, that belief becomes self-reinforcing.

**[FBI San Francisco Field Office: AI Cybercrime Warning](https://www.fbi.gov/contact-us/field-offices/sanfrancisco/news/fbi-warns-of-increasing-threat-of-cyber-criminals-utilizing-artificial-intelligence)** (May 2024)

The FBI’s official warning, announced at RSA Conference, is worth reading not for its specific recommendations but for what it signals about institutional awareness. When the FBI publicly acknowledges that “attackers are leveraging AI to craft highly convincing voice or video messages and emails,” it is admitting the game has changed. Government agencies rarely issue warnings this direct unless the threat has already materialized.

**[Brian Krebs: Meet the Brains Behind WormGPT](https://krebsonsecurity.com/2023/08/meet-the-brains-behind-the-malware-friendly-ai-chat-service-wormgpt/)** (August 2023)

Krebs identifies the actual human behind the tool. This investigation matters because it demonstrates that malicious AI services are not shadowy nation-state operations but often the work of individual entrepreneurs. Rafael Morais, the 23-year-old Portuguese creator, shut down WormGPT within days of being exposed. Attribution still works, even in the AI era.

**[IBM DeepLocker: Concealing Targeted Attacks with AI Locksmithing](https://i.blackhat.com/us-18/Thu-August-9/us-18-Kirat-DeepLocker-Concealing-Targeted-Attacks-with-AI-Locksmithing.pdf)** (Black Hat USA, August 2018)

The original presentation slides remain worth studying. DeepLocker predates the current generative AI boom by roughly four years, yet it anticipated the core problem: AI enables malware to hide in plain sight until it recognizes its specific target. Note the ethics of the disclosure itself: IBM chose to publish offensive research as a warning.

**Counter-Arguments**

_The asymmetry may favor defenders, not attackers._ Defenders have access to vastly more compute resources, larger datasets of historical attacks, and institutional continuity that most threat actors lack. The “asymmetry of speed” might be real, but the asymmetry of resources favors the other side.

_The structural explanation ignores economic incentives._ The simpler explanation may be that organizations underinvest in security because the costs of breaches are externalized and insurance markets are immature. If consequences fell directly on decision-makers, iteration speed would increase overnight.

_AI amplifies noise, not just signal._ Every AI-generated phishing campaign produces patterns. The same AI capabilities that help attackers scale their operations also give defenders more data to train their models.

_Human judgment remains the binding constraint._ No matter how fast AI systems iterate, the ultimate decisions in both attack and defense remain human. The bottleneck is not machine speed but human attention and judgment.