*Originally published in [The AI Monitor](https://theaimonitor.substack.com/p/long-term-societal-impacts-of-ai) · 2025-02-08*

---

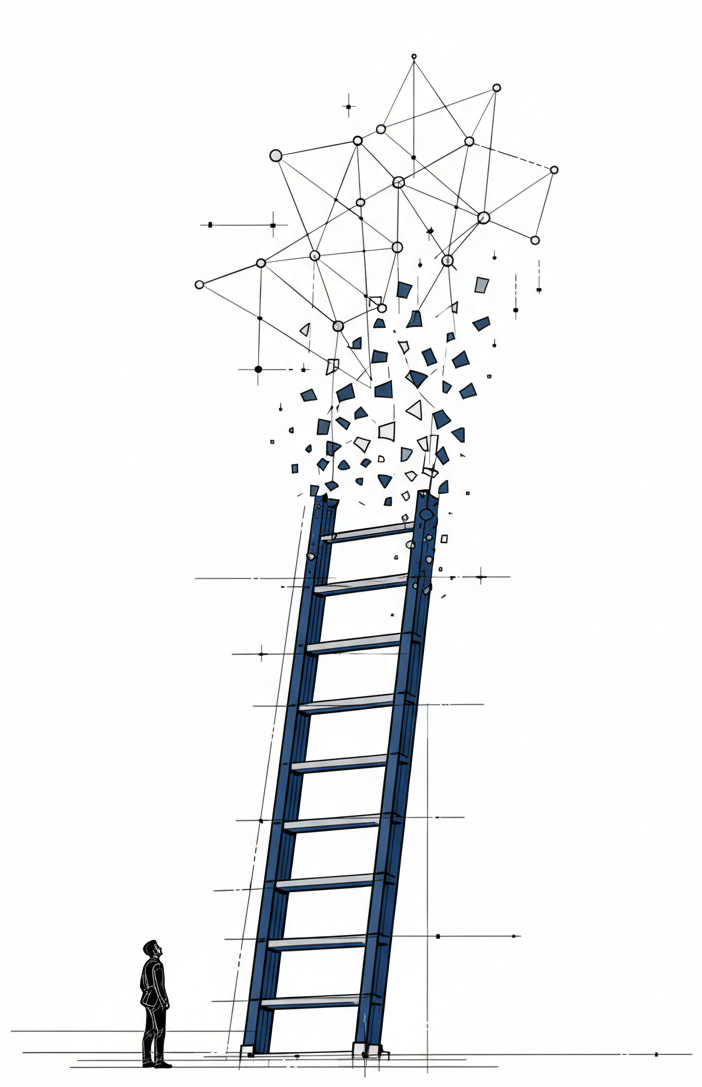

For centuries, expertise was a function of accumulation. The senior partner held the leverage because they held the information. That assumption has dissolved. The machines are not coming for the physical tasks of the past. They are coming for the cognitive scaffolding of our present. The question is no longer how we adapt our work. It is how we adapt our identity when the ladder we climbed has been removed.

Previous revolutions displaced those who worked with their hands. This one displaces those who were paid for their minds.

The data is no longer preliminary. The occupations most exposed to generative AI are high-wage professional roles: mortgage brokers, lawyers, investment bankers. Blue-collar workers may be the least harmed.

The pattern should have been obvious.

In high-leverage fields like law and finance, adoption shifted from experimental to infrastructure. Task completion times dropped by nearly half while output quality rose. These are not gradual efficiency gains. They represent a step-function change in the cost of cognition. The transformation is not arriving. It is installed.

Amplification is the visible story. Physicians paired with AI systems now outperform specialists working alone. New roles commanding high salaries suggest a market hungry for oversight. Global GDP projections are revised upward. The system works. It produces more.

But efficiency does not distribute itself evenly.

The most dangerous finding is not a capability, but a compression. AI assists the novice more than the expert. It closes the gap between the capable and the exceptional. This sounds like equality, but it is actually a collapse of market value. When a junior employee with an AI assistant produces output indistinguishable from a senior partner, the return on experience evaporates. The career ladder, the structure that justified years of low-paid apprenticeship in exchange for future leverage, breaks.

We are flattening the skill curve just as we automate the work that sits on top of it.
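The compression argument is ultimately arithmetic, so a small sketch may help. The baseline outputs below are hypothetical, chosen only for illustration; the 34% novice gain is the headline figure from the Brynjolfsson, Li, and Raymond study cited under Sources, and treating the expert gain as exactly zero is an assumption standing in for "barely moved."

```python
# Hypothetical baseline output (tasks per hour). These numbers are
# illustrative assumptions, not data from any study.
baseline = {"novice": 2.0, "expert": 3.0}

# Fractional productivity gain from AI assistance. The 0.34 figure is the
# novice gain reported in "Generative AI at Work"; 0.0 for experts is an
# assumption approximating "barely moved".
gain = {"novice": 0.34, "expert": 0.0}


def with_ai(output, uplift):
    """Apply each group's fractional gain to its baseline output."""
    return {group: output[group] * (1 + uplift[group]) for group in output}


def expert_premium(output):
    """How much more an expert produces than a novice, as a fraction."""
    return output["expert"] / output["novice"] - 1


assisted = with_ai(baseline, gain)
premium_before = expert_premium(baseline)
premium_after = expert_premium(assisted)

print(f"Expert premium without AI: {premium_before:.0%}")
print(f"Expert premium with AI:    {premium_after:.0%}")
```

Under these assumed numbers, the expert's output advantage falls from 50% to roughly 12%. The exact figures depend entirely on the hypothetical baselines; the point is only that an uneven gain shrinks the premium that experience used to command.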

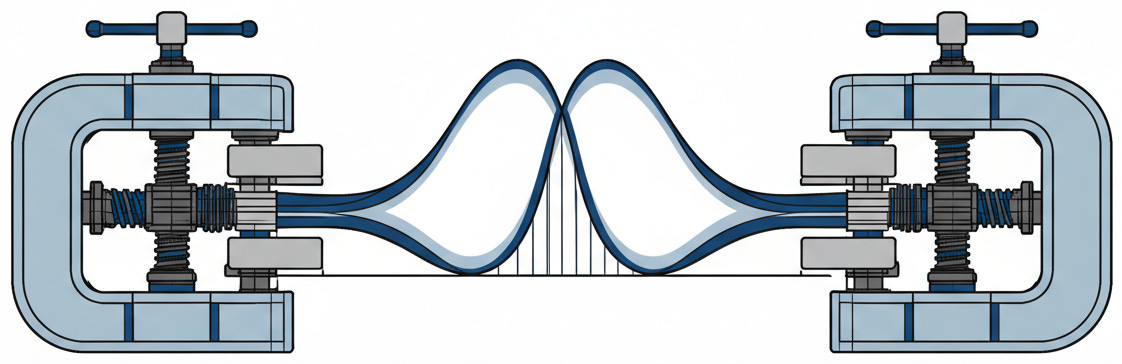

About half of Americans believe AI will worsen inequality. The polling reflects a structural intuition: acceleration benefits those who control the accelerator. One mechanism of that control is already visible.

Systems execute the history they are trained on. A recruiting tool learns to penalize resumes containing “women’s” not because it is evil, but because it reflects a decade of hiring data. Risk assessment systems mislabel Black defendants as high-risk at nearly twice the rate of white defendants not because the code is malfunctioning, but because it reflects the patterns of past sentencing. These are not bugs to be patched. They are structural features of probabilistic machines that lack the capacity for moral reflection.

They execute the past. And executing the past means encoding who historically held power and who did not.
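What "mislabeled at nearly twice the rate" means can be made concrete. The sketch below compares false positive rates between two groups: among people who did not reoffend, the share who were nonetheless labeled high-risk. All counts are hypothetical and the names `GroupCounts` and `false_positive_rate` are illustrative; this is not the methodology or the data of any specific audit.

```python
from dataclasses import dataclass


@dataclass
class GroupCounts:
    """Outcomes for people in one group who did NOT reoffend."""
    false_positives: int  # labeled high-risk, did not reoffend
    true_negatives: int   # labeled low-risk, did not reoffend


def false_positive_rate(counts: GroupCounts) -> float:
    """Share of non-reoffenders who were wrongly labeled high-risk."""
    total = counts.false_positives + counts.true_negatives
    return counts.false_positives / total


# Hypothetical counts, chosen only to illustrate a two-to-one disparity.
group_a = GroupCounts(false_positives=40, true_negatives=60)
group_b = GroupCounts(false_positives=20, true_negatives=80)

fpr_a = false_positive_rate(group_a)
fpr_b = false_positive_rate(group_b)
disparity = fpr_a / fpr_b

print(f"Group A false positive rate: {fpr_a:.0%}")
print(f"Group B false positive rate: {fpr_b:.0%}")
print(f"Disparity ratio: {disparity:.1f}x")
```

A system can look accurate overall and still distribute its errors this unevenly, which is why the disparity only shows up when the error rate is measured separately for each group.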

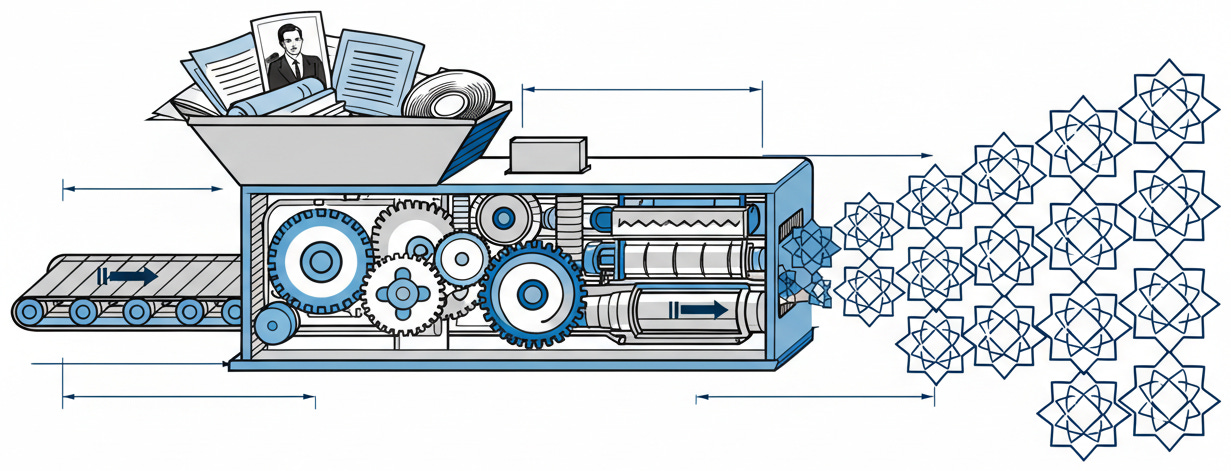

This creates a governance problem with no clean solution. The EU’s AI Act, four years in the drafting, governs a landscape that has already shifted. High-risk applications face mandatory audits and human oversight obligations, but by the time these regulations take effect, the systems they target will likely be obsolete. Democratic deliberation moves slowly by design. Technology deployment moves fast by incentive. The gap is not an accident. It is structural. And structure determines who controls what.

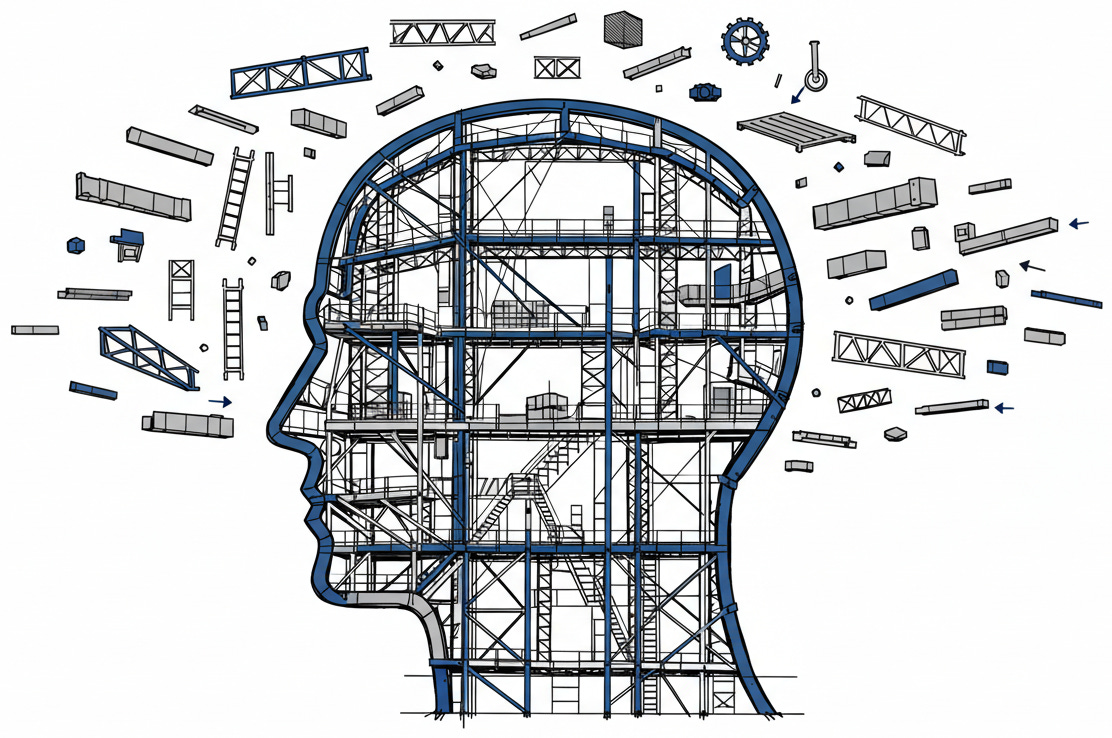

The harder question reaches beyond policy to meaning. Work has provided purpose, identity, and structure for centuries. If AI can perform most cognitive tasks more efficiently than humans, we are left with a void.

Professional identity is not merely about income. It is about mastery, about the satisfaction of doing something well that others cannot easily replicate. The lawyer who spent a decade learning to read case law. The analyst who developed intuition for market patterns. The writer who cultivated a distinctive voice. These investments of time and attention created differentiation, and differentiation created meaning.

Now differentiation is cheap.

When expertise becomes abundant, when anyone with AI access can approximate what once required years of training, the foundation of professional identity shifts. The question of good or bad is the wrong frame. The question is whether we have any framework at all. We are experiencing, in real time, a transformation of what it means to be skilled. And the trajectory is one-way. When law firms discover that three associates with AI can do the work of twelve, they do not quietly maintain the larger team. When consulting firms find that AI-assisted analysts outperform unassisted ones, they do not choose the slower path. The institutions that created experts are already adapting to a world that needs fewer of them.

Some say we are freed for creativity, caregiving, and connection, the activities AI cannot replicate. Others say we are freed into a crisis of purpose that no policy can address. The honest position is that we do not know. We are building systems we cannot fully predict, deploying them at a speed we cannot fully control, and trusting that the benefits will be distributed fairly. The evidence suggests otherwise.

The deeper story is about authority. AI is now embedded directly into the tools we use. It is ambient rather than optional. That design choice is making AI the default state of work. When assistance is invisible, workers lose awareness of where their judgment ends and the system’s begins. The boundary blurs. The locus of control shifts.

Every organization is making a choice about where the human ends and the system begins. These are structural decisions about authority. Each one forecloses some futures while enabling others. The window for shaping these decisions is closing. The trajectory is being set now.

We trust that efficiency is an unalloyed good. That making cognition cheaper is equivalent to making it better. But value depends on direction, not speed. The machines are learning to execute our history faster than we are learning to understand it. We are optimizing for output while the inputs to our identity are being rewritten.

The real story of AI is not about what it can create. It is about what it can control.

* * *

### Further Reading, Background and Resources

**Sources & Citations**

[Brynjolfsson, Li, Raymond: “Generative AI at Work”](https://www.nber.org/papers/w31161) (NBER Working Paper 31161, April 2023) - The study that made skill compression measurable. Tracking 5,179 customer support agents, the researchers found AI assistance produced a 14% average productivity increase, but the distribution matters more than the average. Novice workers improved 34%. Experienced workers barely moved. When the paper states that “AI assistance compresses the productivity distribution,” it is documenting the mechanism that makes accumulated expertise less scarce.

[MIT Study: “Experimental Evidence on the Productivity Effects of Generative Artificial Intelligence”](https://news.mit.edu/2023/study-finds-chatgpt-boosts-worker-productivity-writing-0714) (Noy & Zhang, Science, July 2023) - The headline finding (40% faster task completion, 18% quality improvement) matters less than what is buried in the data: AI compressed the productivity distribution, helping lower-skilled workers more than experts. The detail that 68% of workers simply copied ChatGPT output without editing should concern anyone thinking about skill atrophy.

[Clio 2024 Legal Trends Report](https://www.clio.com/resources/legal-trends/2024-report/) (October 2024) - Legal AI adoption jumped from 19% to 79% in a single year. A profession built on precedent is adopting technology faster than it can establish norms for its use.

**For Context**

[ProPublica: “Machine Bias”](https://www.propublica.org/article/machine-bias-risk-assessments-in-criminal-sentencing) (May 2016) - The investigation that launched algorithmic accountability as a discipline. Black defendants were mislabeled as high-risk at nearly twice the rate of white defendants. Required reading for understanding why bias is structural rather than incidental.

**Counter-Arguments**

_The Productivity Paradox Suggests Slower Transformation Than Headlines Imply_ - History offers a consistent pattern: transformative technologies take decades to restructure economies, not years. Robert Solow’s 1987 observation that “you can see the computer age everywhere but in the productivity statistics” applied for nearly fifteen years before productivity growth materialized. The current 28% workplace adoption rate suggests we are in early innings of a multi-decade transformation. Predictions of imminent displacement may be confusing capability with implementation.

_Skill Compression May Democratize Rather Than Devalue Expertise_ - If AI helps novices reach higher performance levels faster, it lowers barriers to entry in fields historically gatekept by expensive credentialing. Legal services, financial advice, and medical consultation have been inaccessible to millions. AI-assisted professionals serving clients at lower price points could expand access to services that were luxuries of the affluent. The question is distributional: who captures the efficiency gains?