*Originally published in [The AI Monitor](https://theaimonitor.substack.com/p/geopolitics-of-ai) · 2024-12-10*


---


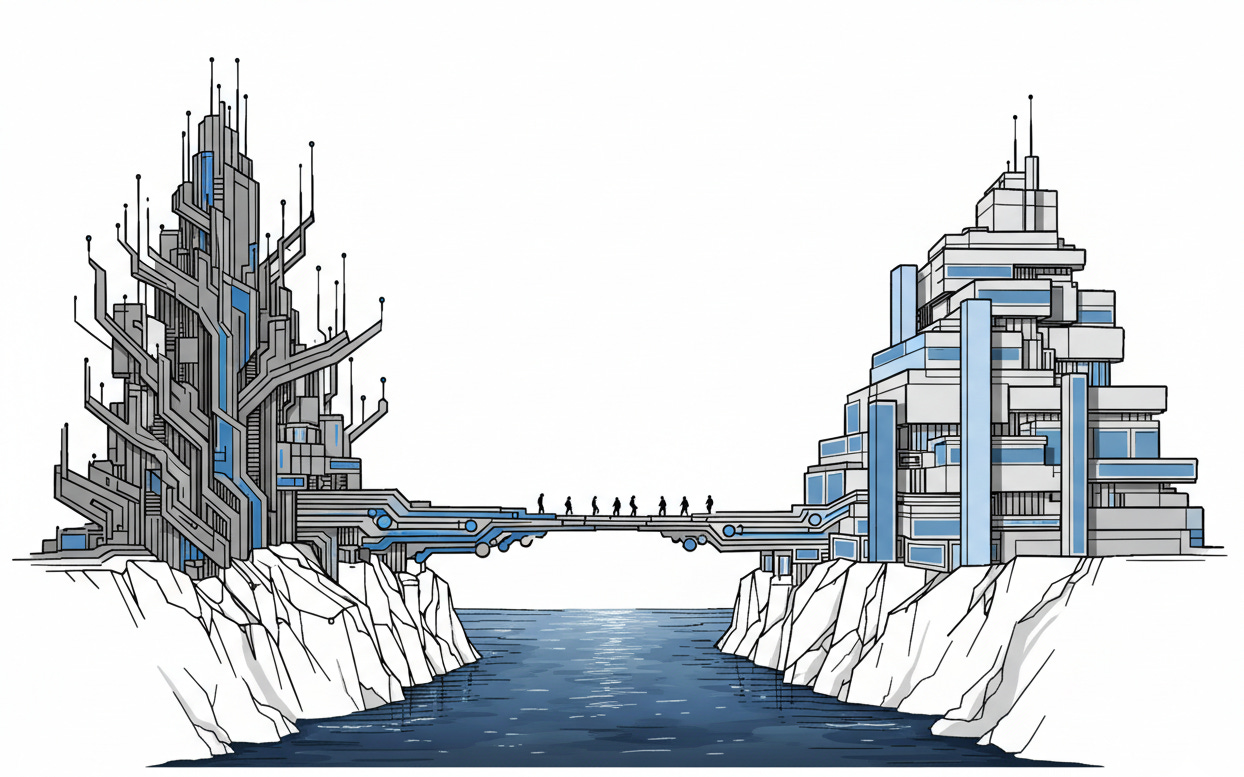

We treat intelligence as a natural resource. We assume it sits in the ground, waiting to be mined. But intelligence is not discovered. It is built. The means of production define power.

The race for AI is not a race for technology. It is a race for control.

Nations have always fought for land, for oil, for access to trade routes. Now they compete for the capacity to build intelligence itself. Commercial convenience and strategic capability have merged. The code that recommends your next movie is the same technology guiding autonomous drones and optimizing power grids. The United States and China are the primary contestants. Everything else is an audience.

China’s strategy is state-directed and built on timescales that democracies struggle to comprehend. Beijing published a national plan to lead the world in AI by 2030, backed by coordinated infrastructure spending and directed research. When the Chinese state sets a priority, resources mobilize with a speed that market economies cannot match.

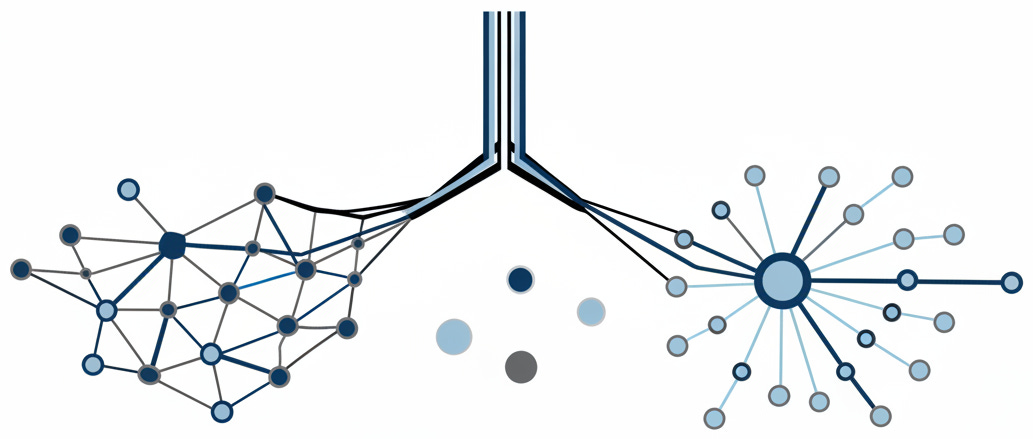

The American strength comes from a different engine. The breakthrough technologies emerged largely from Silicon Valley companies, funded by venture capital and driven by commercial incentives rather than government edict. The result is a lead in raw capability. American companies develop 73% of large language models, compared to China’s 15%. Private AI investment in the U.S. reached $67 billion in 2023, dwarfing China’s $8 billion.

But the snapshot of today is misleading. China now files more than double the AI patents of the U.S. annually. In specific domains such as facial recognition and mass surveillance, China already leads in deployment. The question is which variable matters more when the system scales.

Neither shows any sign of stepping back from the race. The United States holds the cards that matter right now: talent attraction, semiconductor access, and the depth of a commercial ecosystem that innovates faster than any state apparatus. China holds the cards that matter later: the scale of data, a speed of state coordination that democracies cannot replicate, and a willingness to deploy AI in ways Western societies would not accept. The American model generates better algorithms; the Chinese model generates more of everything else.

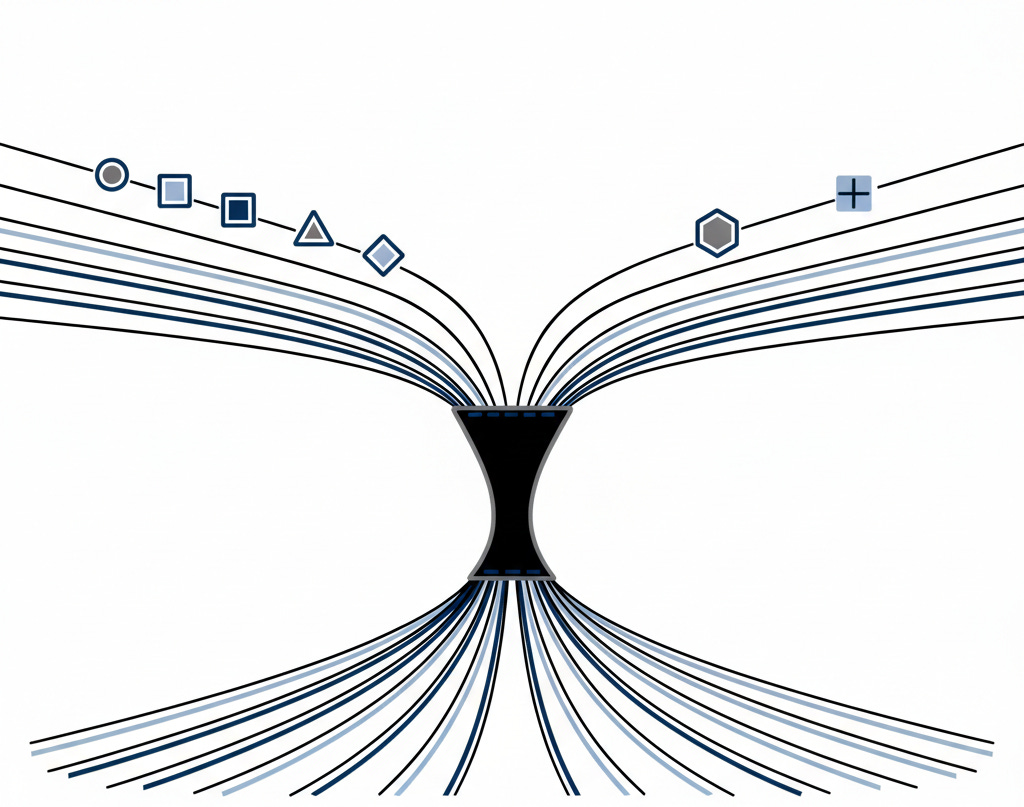

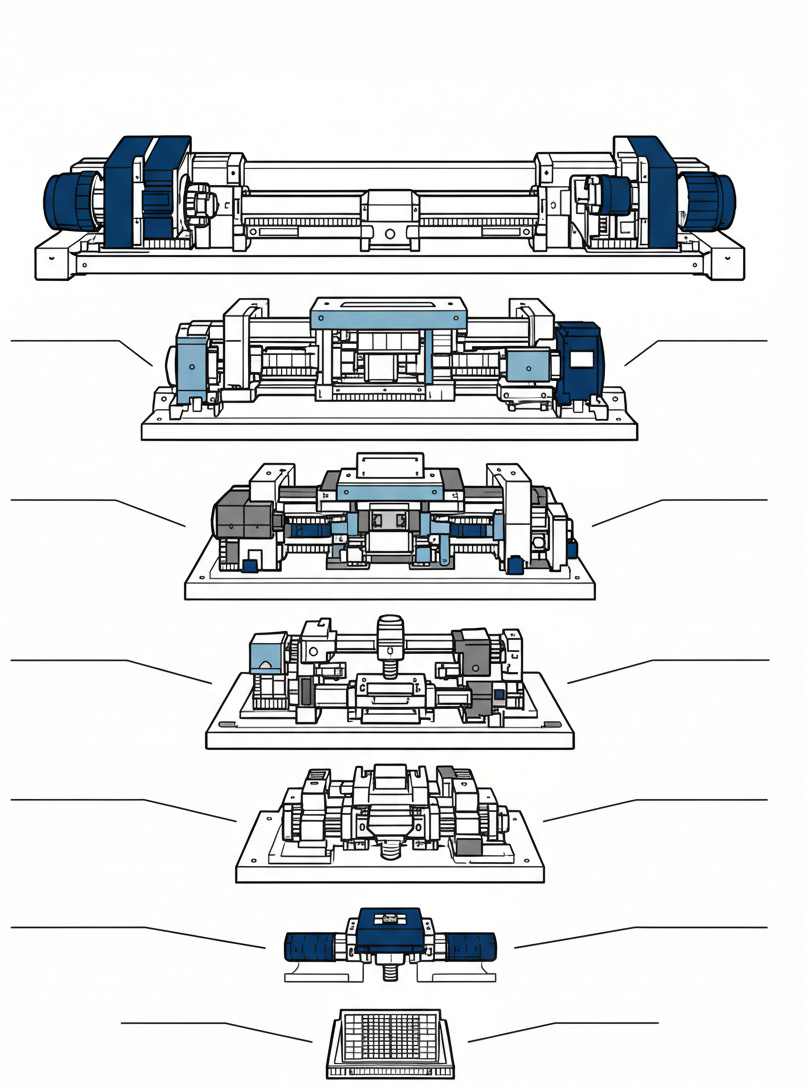

Washington has bet that the hardware matters more than the software. Training advanced AI is an energy and silicon problem first, a code problem second. The most capable models demand millions of dollars’ worth of processors. The supply chain for those chips is the most potent strategic chokepoint in existence. NVIDIA designs the GPUs in America; Taiwan manufactures them; the Netherlands produces the machinery that etches the silicon.


Washington understood something crucial: if you cannot control the algorithms, you can control the fuel they burn.

The export restrictions imposed by the U.S. in 2022 and 2023 attempted to freeze an adversary’s capability by starving it of fuel. Every month that passes with restricted chip access is a month China cannot train the largest models at the frontier. China is racing to build domestic semiconductor capacity, but the gap spans years, possibly a decade. Whoever controls the chips controls the pace of progress.

What does that timeline actually look like? China's SMIC has produced 7-nanometer-class chips, but the most advanced chips require extreme ultraviolet (EUV) lithography machines, which only ASML makes, and ASML cannot sell them to China. Huawei's Ascend processors are improving, and the gap with NVIDIA may be narrower than commonly assumed, but efficiency and scale remain American advantages. China is investing tens of billions in domestic fab capacity, but building a semiconductor ecosystem is not a spending problem. It is an accumulated knowledge problem. The machines that make the machines took decades to develop. That timeline cannot be purchased away.


While the U.S. and China race to build, Europe has chosen to regulate. The EU AI Act, which came into force in 2024, bans certain uses outright and heavily regulates high-risk applications. European officials frame this as "human-centric AI," betting that the rules matter as much as who wins. There is power in this approach: companies seeking European market access must comply, and compliance shapes product design globally. But regulation manages the consequences of AI; it does not produce capability. Europe may influence how AI is deployed, but Washington and Beijing will determine what AI exists to deploy.

The forces shaping this struggle point in a clear direction, and only a few developments seem capable of diverting it. Perhaps a major AI accident triggers a global pause. Perhaps the economic costs of decoupling become too high. Perhaps breakthrough technologies emerge that reset the playing field. None of these seems imminent.

What seems more likely is deepening bifurcation. A Western AI sphere governed by democratic constraints and commercial incentives, and a Chinese sphere operating under state direction with fewer limits on deployment. The rest of the world will not get to choose which reality they inhabit. They will simply choose which supplier to buy from.


This sounds abstract until you map it onto decisions being made now. Brazil considering Huawei 5G infrastructure. Saudi Arabia negotiating AI partnerships with both Washington and Beijing. India trying to build domestic capability while balancing relationships with both. Infrastructure, not ideology, determines which sphere a nation enters. Once your telecommunications run on Chinese equipment, once your smart cities use Chinese AI, once your surveillance systems train on Chinese models, you have chosen a technological dependency that shapes what is possible for a generation. The supplier becomes the architecture.

The Space Race was expensive theatre. Nuclear weapons created deterrence through the threat of mutual destruction. AI offers something different: compounding advantage in every domain simultaneously. Economic productivity, military capability, surveillance capacity, information control. The nation that leads in AI does not merely gain prestige. It gains the ability to shape the future faster than anyone else can react.

The twentieth century was defined by the control of industrial production. The twenty-first century will be defined by the control of intelligence production. We think of this as a software competition. It is a supply chain war.

The trajectories are set. The choices that determine which path we follow are being made now, in export control decisions and research funding priorities. These choices will compound. By the time their consequences are obvious, they will be locked in.

The architecture of the next century is not being debated. It is being assembled.


* * *

### Further Reading, Background and Resources

**Sources & Citations**

[Stanford HAI AI Index Report 2024](https://hai.stanford.edu/ai-index/2024-ai-index-report) \- The definitive source for understanding the U.S.-China investment gap. Stanford’s data reveals the $67 billion versus $8 billion disparity in private AI investment, and it does so with methodology transparent enough to interrogate. Worth reading not just for the headline numbers but for the granular breakdowns: which categories of AI receive funding, where the talent concentrates, how model development distributes geographically. The 73% U.S. share of large language model development comes from EU analysis cited here. This is where exaggeration carries legal consequences.

[Brookings Institution: “The Global AI Race”](https://www.brookings.edu/articles/the-global-ai-race-will-us-innovation-lead-or-lag/) \- Brookings provides the clearest synthesis of why the snapshot numbers are misleading. Yes, the U.S. leads in investment and frontier models. But China’s patent volume, surveillance deployment, and state coordination represent a different kind of lead. The analysis is sober about American vulnerabilities, which makes it useful. Think tanks that only flatter their home country’s position are not worth reading.

[European Commission: EU AI Act Official Framework](https://digital-strategy.ec.europa.eu/en/policies/regulatory-framework-ai) \- Primary documentation for understanding Europe’s bet that governance can substitute for production. The risk-based classification system, the prohibited practices, the compliance timelines are all here. Read this to understand what European officials believe they are accomplishing. Whether they are right is a separate question, but you cannot evaluate the strategy without understanding it first. Watch specifically for how the “high-risk” category expands over time, as this is where regulatory creep will manifest.

[CSIS: Chokepoints in the Semiconductor Supply Chain](https://www.csis.org/analysis/chokepoints-semiconductor-supply-chain) \- Essential reading for anyone who wants to understand why chips are the oil of the AI era. CSIS maps the concentration points: ASML’s monopoly on EUV lithography, TSMC’s dominance in advanced manufacturing, the limited number of facilities capable of producing AI-grade chips. The analysis reveals why the U.S. export controls are both powerful and fragile. A single earthquake in Taiwan would reshape global AI development more than any policy decision.

**For Context**

[UK Government: The Bletchley Declaration](https://www.gov.uk/government/publications/ai-safety-summit-2023-the-bletchley-declaration) \- The November 2023 summit marked the first time 28 nations, including both the U.S. and China, signed a joint statement on AI safety. The declaration itself is diplomatically vague, but the fact of joint signature matters. This is the baseline for understanding what minimal international consensus currently exists, and how far we are from anything resembling meaningful coordination.

[Georgetown CSET: China’s Military-Civil Fusion](https://cset.georgetown.edu/publication/pulling-back-the-curtain-on-chinas-military-civil-fusion/) \- Essential background for understanding why “commercial AI” and “military AI” are meaningless categories in China’s strategic framework. The MCF strategy explicitly targets AI for defense applications, with Xi Jinping personally chairing the coordination commission. Western analysts who treat Chinese tech companies as purely commercial entities are missing the architecture.

[Government of India: IndiaAI Mission](https://www.pib.gov.in/PressReleaseIframePage.aspx?PRID=2012355) \- The clearest example of a major non-aligned power charting its own course. India’s $1.25 billion IndiaAI Mission, approved in March 2024, aims to build sovereign AI capability through indigenous large language models, 10,000+ GPU compute infrastructure, and domestic talent development. Read alongside the iCET partnership with the U.S. to see how India balances domestic capability-building with strategic alignment.

**Practical Tools**

_Strategic Dependency Assessment Framework_

When evaluating AI-related strategic dependencies, consider these dimensions:

* **Hardware access**: Does your nation or organization depend on chips manufactured outside allied territory? What is the timeline to alternative sources if supply is disrupted?

* **Talent concentration**: Where are your AI researchers trained, and where would they go if incentives shifted? Immigration policy is AI policy.

* **Data sovereignty**: Who controls the infrastructure where your training data resides? The cloud provider's nationality matters more than the server's location.

* **Model supply chain**: For commercial AI applications, can you trace the model's provenance? Fine-tuned models inherit the strategic dependencies of their base models.

* **Regulatory arbitrage**: How do your compliance obligations differ from competitors in less regulated jurisdictions? The EU AI Act creates asymmetric constraints.

_Decision Criteria:_ If you identify 0-1 dependencies, monitor but do not restructure. If you identify 2-3 dependencies, develop contingency plans and document model provenance now. If you identify 4-5 dependencies, strategic vulnerability is material; begin active mitigation or accept the risk explicitly at board level.
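The decision criteria reduce to a count-and-threshold rule over the five dimensions. A minimal sketch in Python, assuming each dimension is recorded as a simple yes/no flag; the class name, field names, and action labels are hypothetical and only illustrate the tiers described above.

```python
from dataclasses import dataclass, fields


@dataclass
class DependencyAssessment:
    # Hypothetical yes/no flags, one per dimension of the framework.
    hardware_access: bool
    talent_concentration: bool
    data_sovereignty: bool
    model_supply_chain: bool
    regulatory_arbitrage: bool

    def count(self) -> int:
        # Number of dimensions flagged as a dependency (0 to 5).
        return sum(getattr(self, f.name) for f in fields(self))


def recommended_action(assessment: DependencyAssessment) -> str:
    """Map the dependency count onto the three tiers of the decision criteria."""
    n = assessment.count()
    if n <= 1:
        return "monitor"
    if n <= 3:
        return "contingency plans; document model provenance"
    return "active mitigation or explicit board-level risk acceptance"


if __name__ == "__main__":
    example = DependencyAssessment(
        hardware_access=True,
        talent_concentration=False,
        data_sovereignty=True,
        model_supply_chain=True,
        regulatory_arbitrage=False,
    )
    print(example.count(), recommended_action(example))
    # prints: 3 contingency plans; document model provenance
```

Counting flags gives every dimension equal weight, which is what the criteria as written imply; an organization could reasonably weight some dimensions, such as hardware access, more heavily.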

**Counter-Arguments**

_“The decoupling narrative is overstated.”_ Despite aggressive rhetoric, the U.S. and China remain deeply economically intertwined, and AI development depends on global collaboration. Chinese researchers publish in American venues; American companies manufacture in China; academic partnerships persist despite official restrictions. The semiconductor chokepoint is real, but it assumes Taiwan remains accessible to the U.S. and that Chinese domestic capacity never catches up. History suggests that determined nations eventually work around technological blockades. A ten-year lead is not permanent dominance.

_“Hardware chokepoints can be circumvented.”_ The essay emphasizes chip control as the decisive lever, but alternative architectures may emerge. Neuromorphic computing, optical processors, and other approaches could eventually route around GPU dependency. Meanwhile, algorithmic efficiency improvements mean that smaller models on less advanced hardware can increasingly match the performance of frontier systems. Meta’s LLaMA releases demonstrated that open-source models on consumer hardware can approach competitive performance levels. The chokepoint assumes current architectures remain essential.

_“Europe’s regulatory approach may prove strategically sound.”_ The essay treats European regulation as a defensive retreat from production. But Brussels may be playing a longer game. If AI causes significant harms, the EU’s early governance framework positions it to export regulatory standards globally, just as GDPR became the de facto global privacy standard. Being the safest provider of AI services may prove more valuable than being the most capable.

_“The AI race framing itself is the problem.”_ Treating AI development as a zero-sum competition guarantees the worst outcomes the essay warns about. The frame creates pressure for speed over safety, deployment over deliberation, national advantage over global coordination. Perhaps the more important question is not who wins the race but whether the race framing itself is the correct model for a technology that could affect everyone regardless of which nation develops it first.