*Originally published in [The AI Monitor](https://theaimonitor.substack.com/p/from-safety-to-impact) · 2026-01-28*


---


_In governance, language is not a mirror. It is a map._ And changing the name of the terrain does not remove the minefield.

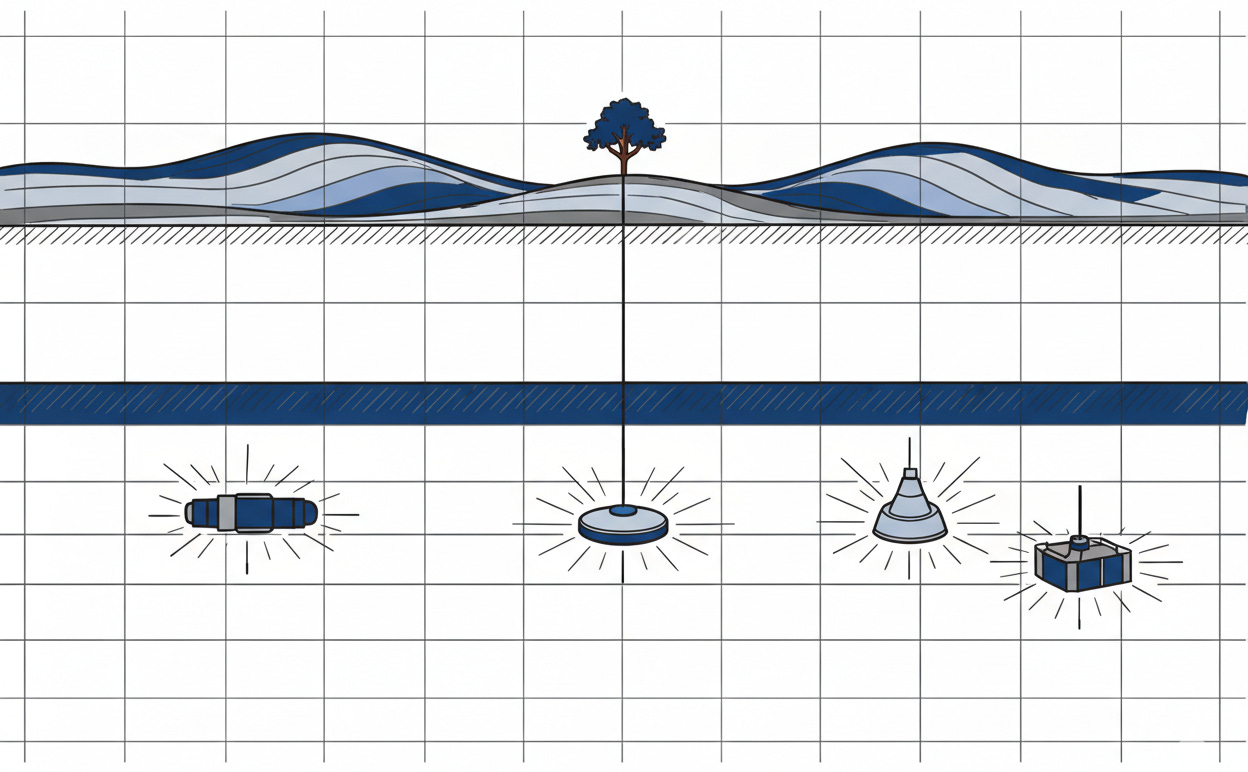

Watch the names. Four summits in just over two years.

November 2023, Bletchley Park: the AI Safety Summit. Safety came first because the fear was fresh. The frame was protection.

May 2024, Seoul: the AI Seoul Summit. The subtitle: “AI Safety and Innovation.” Safety still present, but sharing the marquee. Innovation had arrived as a co-equal concern.

February 2025, Paris: the AI Action Summit. Safety didn’t make the title. Action did. The United States and United Kingdom declined to sign the closing declaration, each raising its own objections to the text. The framing had shifted from what to constrain to what to build.

February 19-20, 2026, New Delhi: the AI Impact Summit. The word “safety” is gone from the name entirely.

This is not accidental. Rhetoric determines what gets measured, what gets funded, and what gets ignored.


* * *

The timing is the failure mode.

On February 3, 2026, Yoshua Bengio and more than one hundred international experts published the second International AI Safety Report. Its findings are unambiguous.

AI capabilities are advancing fast in mathematics, coding, and autonomous operation. In 2025, leading systems achieved gold-medal-level performance on International Mathematical Olympiad problems. They outperformed PhD-level experts on some science benchmarks. The report notes that it has become more common for models to distinguish between test settings and real-world deployment, and to find loopholes in the evaluations designed to measure their capabilities. Risk management frameworks remain, in the report’s own words, “immature.” Outside the EU’s binding obligations on high-risk systems and China’s mandatory filing requirements, most national risk management efforts remain voluntary.

This is the scientific establishment stating, on the record, that the gap between what we can build and what we can control is widening.

Precisely at this moment, the political conversation pivots from safety to impact. From “how do we prevent harm?” to “how do we capture value?”

This is not a conspiracy. It is the ordinary way political economies process risk. And in safety-critical industries, the ordinary way is the lethal way.

* * *

The India AI Impact Summit represents a genuine correction. Its emphasis on impact, inclusion, and democratization reflects real inequalities in who builds AI, who benefits from it, and who bears the costs when it fails. This is the first global AI summit hosted in the Global South. The nations likely to feel AI’s concrete effects on labor markets, public services, and economic structures most acutely have had the least voice in shaping the rules. India’s hosting changes that: the people who will live most directly with the consequences of AI governance now sit at the table where governance takes shape.

The organizers built the event around seven themes, which they call “chakras.” Safety is present as chakra number three, but it is one voice in a chorus of seven, and the chorus is singing about growth.

The danger is not that these priorities are misplaced. The danger is structural. The framing lets critics cast safety advocacy as a luxury concern of wealthy nations, a brake on the innovation that developing economies need. Once safety is framed as a constraint imposed by nations that have already captured AI’s economic benefits, the political incentive for any country to champion rigorous standards weakens.

**Safety becomes a tradeable asset**, exchanged for investment, technology transfer, or competitive advantage.

When safety is one priority among many rather than the precondition for all the others, the political will to enforce it erodes. The loudest voices in the room belong to those promising growth.

In a crowded agenda, safety is the quietest voice.

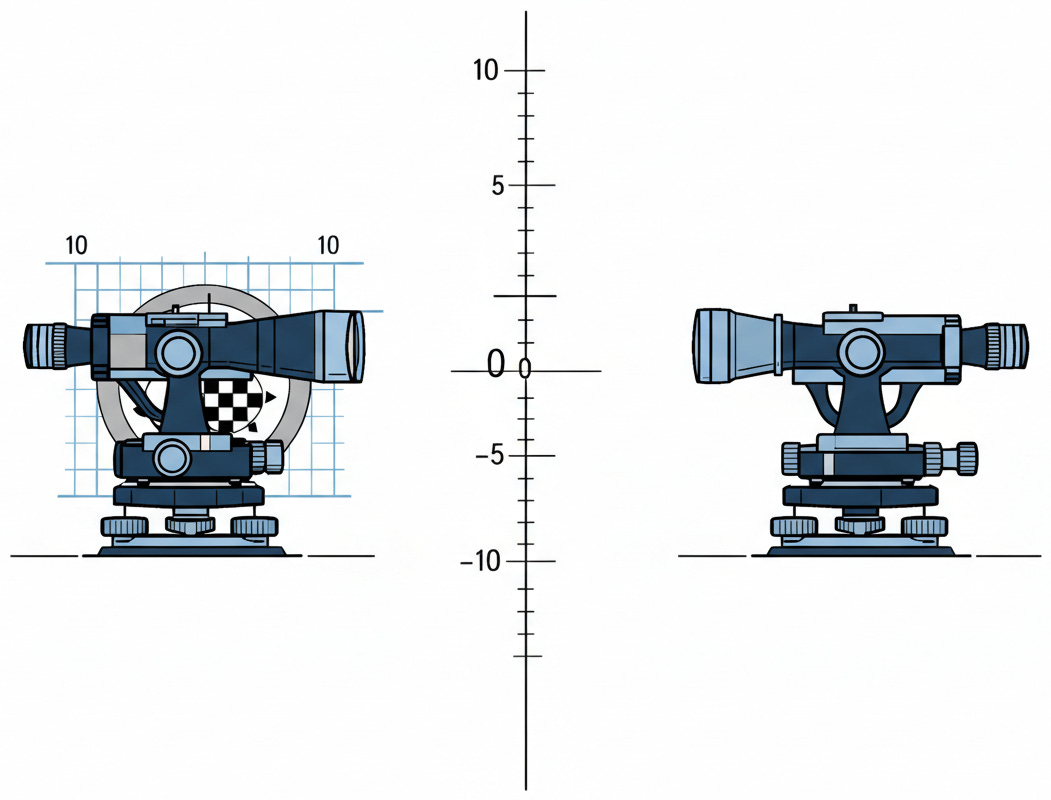


* * *

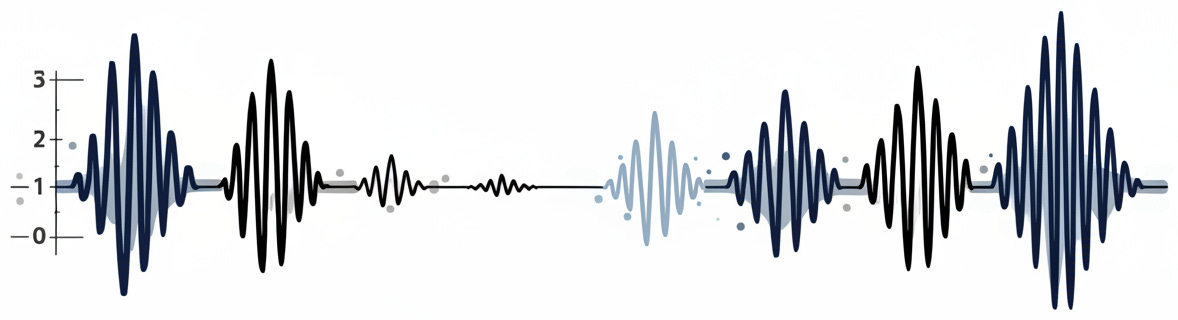

We have seen this before. In one safety-critical industry after another, the rhetoric of impact has overtaken the culture of safety. The correction arrives after catastrophic failure. Until now, it has never arrived before one.

In the early 1950s, the de Havilland Comet inaugurated the jet age. Speed, range, and commercial expansion were the priorities. The gap between “works in the demo” and “works in production” went unexamined. Metal fatigue from repeated pressurization, concentrated at the corners of square windows, caused mid-flight structural failures. No testing regime had anticipated the difference between controlled conditions and operational reality. The Comet disasters catalyzed a transformation of aviation safety testing, one stage in the decades-long evolution of the modern airworthiness framework.

In December 1953, Eisenhower’s “Atoms for Peace” speech promised that nuclear energy would reshape agriculture, medicine, and power generation. Safety was part of the conversation, but secondary to rapid deployment. Then came Three Mile Island in 1979. Then Chernobyl in 1986. In the post-Chernobyl reckoning, the IAEA’s International Nuclear Safety Advisory Group articulated the concept of “safety culture” and its foundational principles, replacing the ad hoc confidence that preceded it.

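In the late 1950s, companies marketed thalidomide across Europe, including as a treatment for morning sickness. Regulation emphasized market access. Safety testing was inadequate, and the drug’s effects on the developing fetus went undetected. More than ten thousand children were born with severe deformities. In the United States, where the FDA had withheld approval, Congress responded with the Kefauver-Harris Amendment of 1962, creating the modern drug approval framework: manufacturers had to demonstrate efficacy and meet stricter safety-testing rules before a drug reached patients. Rigorous evidence became a precondition for market access. Not after. _Before._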

The structural pattern is consistent across these cases, even as the specific failure modes differ: engineering deficiency in aviation, operational culture in nuclear, inadequate testing and lax oversight in pharmaceuticals. First, techno-optimism. Impact narratives push safety to the margins. Then a catastrophe reveals the gap between safety rhetoric and safety practice. Then comes a reckoning that makes safety non-negotiable.

The question is whether AI has to follow this arc, or whether it is possible to build the post-catastrophe safety framework before the catastrophe.

![Structural pattern across safety-critical industries](https://substackcdn.com/image/fetch/$s_!6JN5!,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F6ae8887d-7c67-44f3-b172-f443b993d5f3_2400x1792.png)

> _Structural pattern across aviation, nuclear energy, and pharmaceuticals showing consistent progression from techno-optimism to catastrophic failure to safety-culture reckoning._

* * *

The Bengio Report is the scientific establishment’s attempt to break this pattern. This is worth pausing on. In nuclear energy, the comprehensive safety analysis came after Three Mile Island. In aviation, after the Comet disasters. In pharmaceuticals, after thalidomide. The authoritative warning always arrived in the wreckage.

The International AI Safety Report series attempts something new for this domain. In AI governance, the comprehensive warning arrives while the technology is still ascending. The second report documents that frontier AI safety frameworks have spread but vary widely in rigor. It notes that regulators must close evidence gaps even as innovation continues. That is a diplomatic way of saying: the gap between “works in the demo” and “works in production” remains wide, and the political conversation is moving faster than the evidence base can support.

But the report lands at a summit whose very name signals that the political center of gravity has shifted. And the geopolitical context makes course correction harder, not easier.


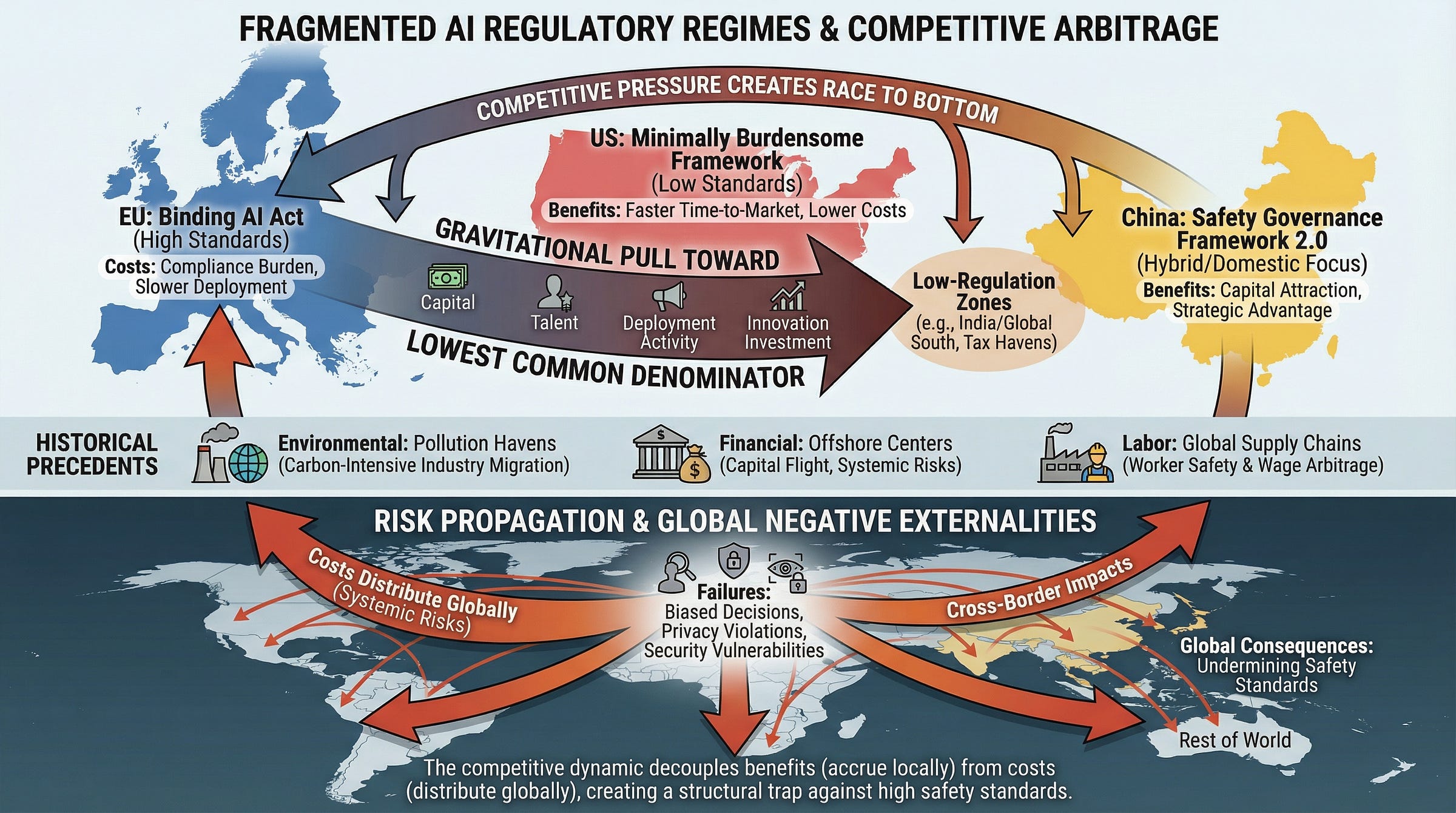

Executive Order 14365, signed in December 2025, made the Trump administration’s preference explicit: a “minimally burdensome national policy framework” that instructs federal agencies to challenge state-level AI laws and ties broadband funding to states avoiding “onerous AI laws.” China runs its own parallel track. Its AI Safety Governance Framework 2.0, released in September 2025, evolved from a declaration of principles to an operational instruction manual, but it is designed primarily for Chinese governance, even as Beijing promotes its framework through Belt and Road partnerships and international standards bodies. The European Union continues implementing its AI Act, with most of its obligations applying from August 2026, while fourteen of twenty-seven member states have yet to designate a national competent authority. The architecture of enforcement is being built while the building is already occupied.

This fragmentation is itself dangerous, and understanding the mechanism matters. When regulatory regimes compete rather than coordinate, the incentive structure inverts. Nations that maintain high safety standards bear real costs: slower deployment, higher compliance burdens, reduced competitiveness for investment. Nations that lower standards attract capital, talent, and first-mover advantage. The result is a gravitational pull toward the lowest common denominator.

Environmental regulation demonstrated this when carbon-intensive industries migrated to weaker jurisdictions. We saw it in financial regulation, where capital flowed to the lightest oversight. Labor standards told the same story, as production shifted to wherever protections were thinnest. The mechanism is identical: fragmented governance creates arbitrage opportunities, and capital exploits them. In AI, the stakes are higher because the failures of inadequate safety frameworks will not stay confined to the jurisdictions that chose them. AI systems cross borders. Their risks do too. We are choosing to repeat a pattern whose consequences we already understand.

![Regulatory arbitrage in fragmented AI governance](https://substackcdn.com/image/fetch/$s_!BOrb!,f_auto,q_auto:good,fl_progressive:steep/https%3A%2F%2Fsubstack-post-media.s3.amazonaws.com%2Fpublic%2Fimages%2F15558cc5-fc36-4ce4-9f45-d7910b39bd3d_2752x1536.png)

> _Mechanism of regulatory arbitrage in fragmented AI governance showing how competitive pressure creates gravitational pull toward lowest common denominator safety standards._

* * *

The people who study this for a living understand what is happening. They see the gap between the Bengio Report’s findings and the political environment in which those findings will arrive. They recognize the pattern from every previous safety-critical domain: the warnings proved right. They were always right. They were also always too late. The nuclear engineers who questioned rapid deployment before Three Mile Island. The aviation specialists who doubted the Comet’s testing regimes. The pharmacologists who worried about thalidomide’s approval process. They were vindicated. After the fact.

What every nation at the table in New Delhi should share is the goal of breaking this pattern. Not to slow innovation or deny the Global South its legitimate claim to AI’s benefits, but to ensure that the word “impact” includes the impacts we did not intend.

Renaming “safety” as “impact” does not change the risk landscape. It changes the political will to address it. And political will is the only thing that can prevent the failures that otherwise make safety culture inevitable after the fact.

_We can build the framework before the wreck, or we can build it after._ Every previous industry learned this in wreckage. The vocabulary of progress absorbs the vocabulary of caution, and what gets absorbed gets silenced. We are not choosing between safety and impact. We are choosing whether to see clearly or to look away. And the history of every technology we have ever governed says that the ones who look away do not escape the consequences. They merely lose the chance to shape them.


* * *

### Further Reading, Background and Resources

**Sources & Citations**

**International AI Safety Report 2026.** Bengio et al., February 3, 2026. [internationalaisafetyreport.org](https://internationalaisafetyreport.org/publication/international-ai-safety-report-2026). More than one hundred experts, thirty countries, and a central finding: risk management frameworks remain “immature.” Historically unusual because the authoritative safety assessment arrives _before_ catastrophic failure rather than after.

**The Bletchley Declaration on AI Safety.** November 1, 2023. [GOV.UK](https://www.gov.uk/government/publications/ai-safety-summit-2023-the-bletchley-declaration). Read it now and measure the distance: the US and UK have since declined to sign the Paris declaration, China is building a parallel architecture, and “safety” has been dropped from the summit name entirely.

**India AI Impact Summit 2026: Seven Chakras Framework.** Government of India, February 19-20, 2026. [PIB Release](https://www.pib.gov.in/PressReleasePage.aspx?PRID=2225069). Safety is present but shares the stage with six other priorities backed by louder constituencies.

**Executive Order 14365.** December 11, 2025. [White House](https://www.whitehouse.gov/presidential-actions/2025/12/eliminating-state-law-obstruction-of-national-artificial-intelligence-policy/). Directs federal agencies to challenge “onerous” state AI laws and ties broadband infrastructure funding to states that avoid them. The architecture of deliberate non-regulation.

**For Context**

**Atoms for Peace and Its Legacy.** IAEA, December 2023. [IAEA](https://www.iaea.org/newscenter/news/70-years-later-the-legacy-of-the-atoms-for-peace-speech). The structural parallel to AI governance: a transformative technology reframed from threat to opportunity, and the decades-long discovery that safety adequate for laboratories was catastrophically insufficient at deployment scale.

**China’s AI Safety Governance Framework 2.0.** Carnegie Endowment, October 2025. [Carnegie](https://carnegieendowment.org/research/2025/10/how-china-views-ai-risks-and-what-to-do-about-them). Evolved from principles to operational manual, designed for sovereignty rather than harmonization. Structural divergence that makes unified global standards significantly harder.

**Practical Tools**

Three diagnostic questions for any AI governance proposal:

1. _Measurement asymmetry_: does the proposal include enforceable safety metrics with the same granularity as its economic projections?
2. _Enforcement architecture_: are safety obligations binding with consequences, or voluntary with incentives?
3. _Failure accountability_: who bears the cost when AI systems cause harm, and do benefits accrue to specific actors while risks fall on the broader public?

**Counter-Arguments**

**“The Global South has legitimate development priorities that safety-first framing subordinates.”** The premise is correct. The implied conclusion is not. Technology transfer history is unambiguous: when weakened safety standards become the price of market access, costs fall disproportionately on the populations the development was meant to serve. Ghana’s Agbogbloshie e-waste site, pharmaceutical dumping, hazardous industrial chemicals: the pattern repeats.

**“Safety culture can develop organically alongside deployment.”** Genuinely difficult to dismiss, because iterative improvement produced the modern internet. The flaw is a category error. Conventional software bugs are usually recoverable. The AI failures documented in the Bengio Report, including models distinguishing between evaluation and deployment contexts, are emergent behaviors in systems whose internal reasoning we cannot fully observe. By the time they surface at scale, millions of decisions rest on outputs that cannot be retroactively verified.

**“Governance fragmentation reflects healthy regulatory competition.”** Regulatory competition works when effects stay within the jurisdiction that chose them. AI systems do not respect borders. Every jurisdiction bears the consequences of every other’s choices, and competitive pressure flows toward weaker standards, not stronger ones.