*Originally published in [The AI Monitor](https://theaimonitor.substack.com/p/applications-of-ai-in-healthcare) · 2024-11-05*

---

We mistake expertise for omniscience. We assume the master sees everything. But human attention is finite. Expertise is not about seeing everything. It is about knowing what you are missing.

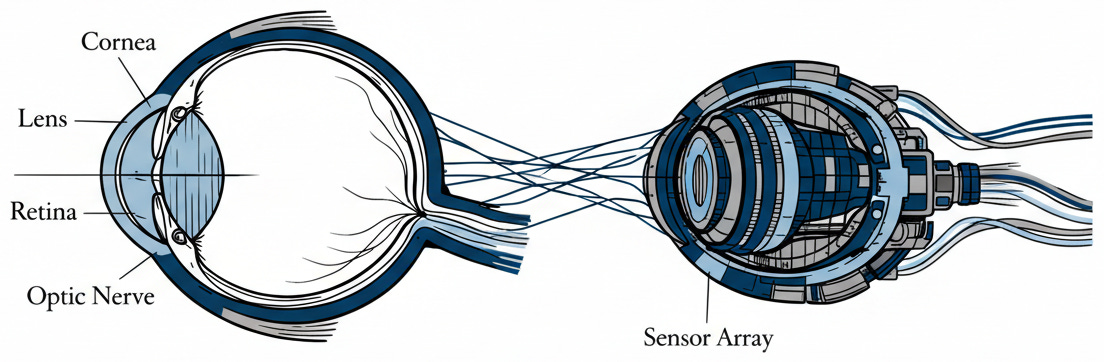

The revolution in medicine is not automation. It is augmentation: building tools that reveal what skilled eyes inevitably overlook.

Consider the pathologist reviewing lymph node slides for breast cancer metastases. Unassisted, she catches 74.5% of cases. With AI, that number climbs to 93.5%. The algorithm scans the slide, flagging regions where cellular patterns suggest malignancy, the spots her tired eyes miss on slide ninety-seven. She remains the decision-maker, but her reach extends. Review time drops by half. Accuracy rises by nearly twenty points.
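The arithmetic behind those figures is worth a moment. What follows is a minimal sketch that uses only the two detection rates quoted above; the function name and output keys are hypothetical and chosen for illustration. It separates the gain in percentage points from the share of previously missed cases that are now caught.

```python
def detection_gain(unassisted_pct: float, assisted_pct: float) -> dict:
    """Compare two detection rates, both given in percent.

    Hypothetical helper for illustration; the inputs are the figures
    quoted in the essay, not data from any study file.
    """
    missed_before = 100.0 - unassisted_pct  # cases missed without assistance
    missed_after = 100.0 - assisted_pct     # cases missed with assistance
    return {
        # absolute improvement, in percentage points
        "gain_points": round(assisted_pct - unassisted_pct, 1),
        "missed_before_pct": round(missed_before, 1),
        "missed_after_pct": round(missed_after, 1),
        # share of the previously missed cases that are now caught
        "relative_drop_in_misses_pct": round(
            100.0 * (missed_before - missed_after) / missed_before, 1
        ),
    }


result = detection_gain(74.5, 93.5)
for key, value in result.items():
    print(f"{key}: {value}")
```

Read this way, the gain is 19 percentage points, and the miss rate falls from 25.5% to 6.5%. Roughly three of every four previously missed cases are caught.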

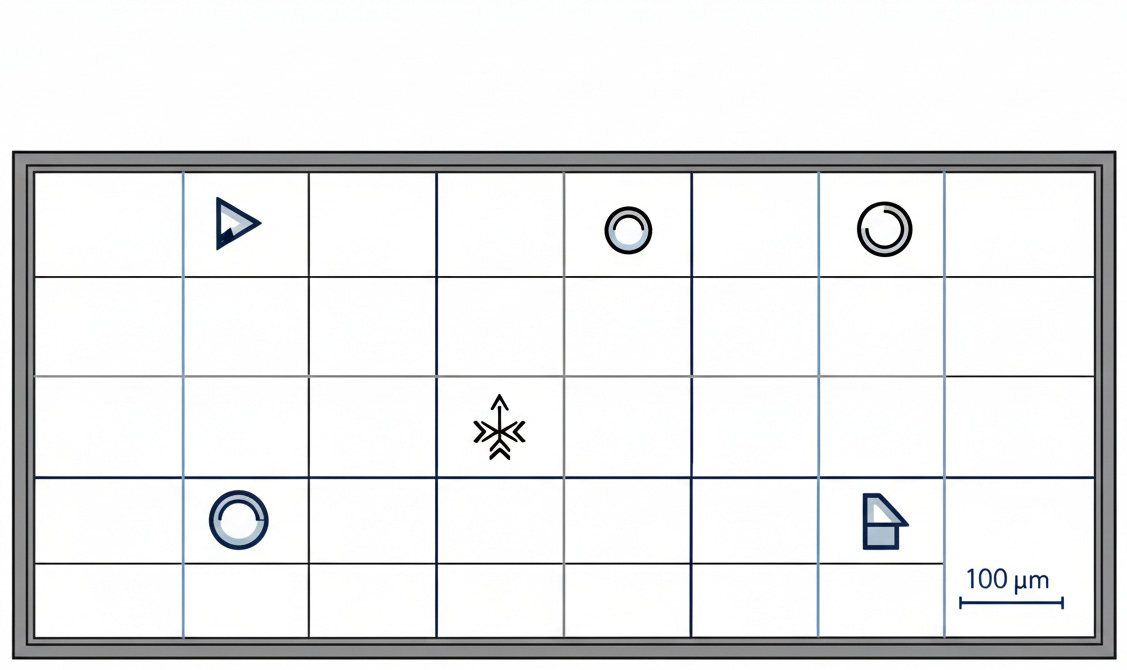

This is not a fluke. It is a structural convergence. Vast quantities of visual data collide with time-pressed experts, creating conditions where a second set of eyes catches what human attention cannot sustain long enough to see. AI tools scanning for lung nodules catch 29% of cases radiologists initially miss. In neurology, assistance cuts MRI reading time by 44%. The FDA has authorized nearly 950 AI-enabled medical devices, more than three-quarters of them in imaging. Where the task is pattern recognition at scale, the machine extends the human limit. Where the task requires context, it fails.

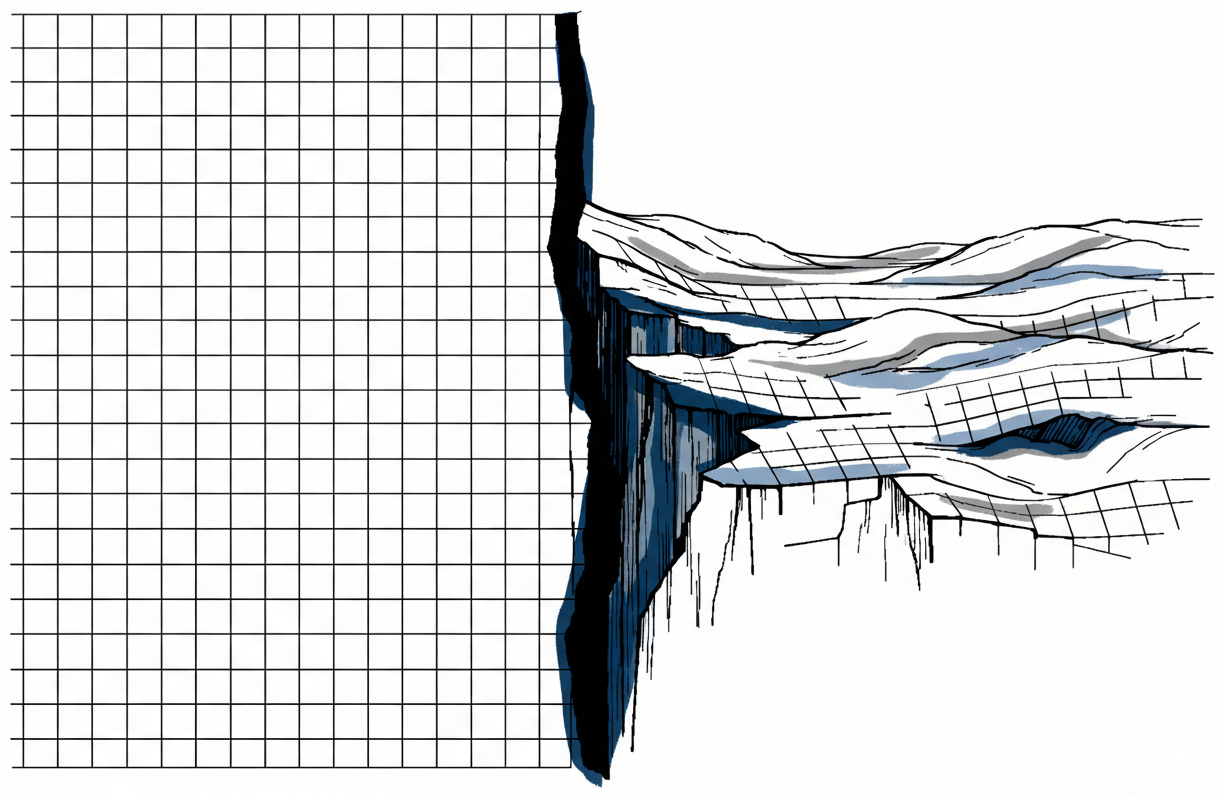

The distinction matters because the opposite approach, automating the judgment itself, fails catastrophically. IBM’s Watson for Oncology promised to read medical literature and recommend treatments. MD Anderson Cancer Center spent five years and $62 million trying to make it work. The system never touched a patient. Watson mistook the menu for the meal.

The failure was structural. Real clinical data is messy. Treatment decisions require context an algorithm cannot access: tolerance for risk, family reality, the subtle cues read in a hesitation. In safety-critical systems, “works in the demo” and “works in production” are separated by a chasm. Watson tried to automate judgment rather than enhance observation. It confused the map with the territory. The project collapsed into the gap between promise and deployment.

Contrast this with systems designed to extend perception. An AI-driven early warning system at Ysbyty Gwynedd in Wales continuously calculated deterioration scores for ward patients. It flagged patterns too faint for a nurse making rounds to detect: a slight uptick in respiratory rate combined with a minor dip in oxygen saturation. Individually, these meant little. Together, they formed a signal that human observation missed in the constant flow of ward activity.

Serious adverse events dropped by 35%. Cardiac arrests fell by 86%. The AI did not treat patients. Nurses and doctors did. But they treated them earlier, armed with alerts that cut through the noise of a busy ward where a hundred small changes compete for attention every hour.
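How can two unremarkable readings add up to an alert? Here is a minimal sketch of an aggregate deterioration score. It is not the logic of the system used at Ysbyty Gwynedd, which the sources here do not describe in detail. The point bands, the `ALERT_THRESHOLD` value, and every name below are assumptions made for illustration, not clinical guidance.

```python
from dataclasses import dataclass


@dataclass
class Vitals:
    respiratory_rate: int  # breaths per minute
    spo2: int              # oxygen saturation, percent


def respiratory_points(rate: int) -> int:
    """Illustrative bands: further from the normal range scores higher."""
    if rate <= 8 or rate >= 25:
        return 3
    if rate >= 21:
        return 2
    if rate <= 11:
        return 1
    return 0


def spo2_points(spo2: int) -> int:
    """Illustrative bands: lower saturation scores higher."""
    if spo2 <= 91:
        return 3
    if spo2 <= 93:
        return 2
    if spo2 <= 95:
        return 1
    return 0


ALERT_THRESHOLD = 3  # hypothetical cut-off for flagging a patient


def deterioration_score(v: Vitals) -> int:
    """Sum the per-sign points into one aggregate score."""
    return respiratory_points(v.respiratory_rate) + spo2_points(v.spo2)


def should_alert(v: Vitals) -> bool:
    return deterioration_score(v) >= ALERT_THRESHOLD


uptick_only = Vitals(respiratory_rate=22, spo2=97)  # slight uptick alone
dip_only = Vitals(respiratory_rate=16, spo2=95)     # minor dip alone
both = Vitals(respiratory_rate=22, spo2=95)         # the two together

for label, v in [("uptick only", uptick_only), ("dip only", dip_only), ("both", both)]:
    print(label, deterioration_score(v), should_alert(v))
```

Under these assumed bands, neither change crosses the threshold on its own. Together they do. The score simply keeps adding while attention is elsewhere.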

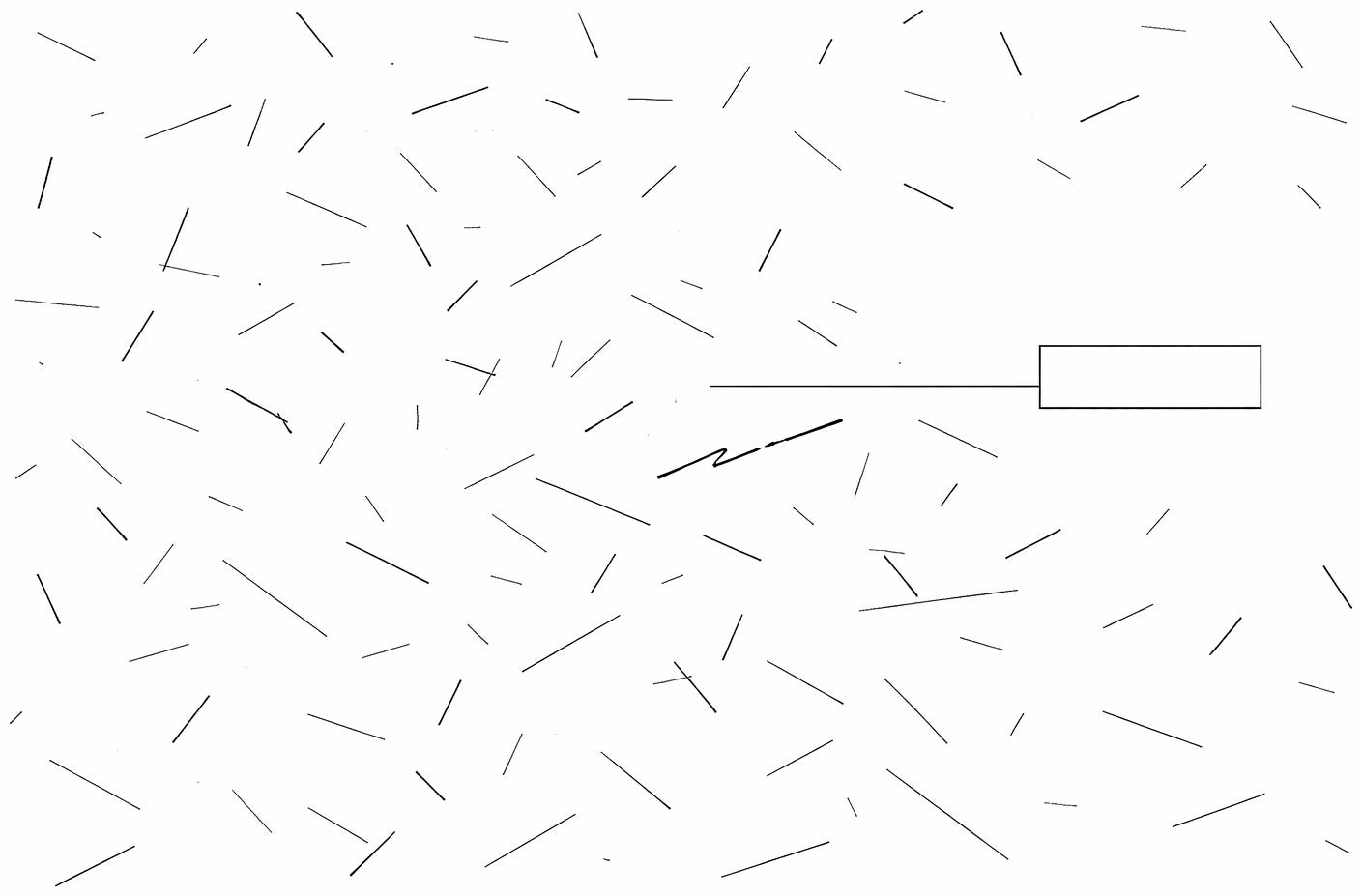

The principle extends beyond diagnosis into discovery. When MIT researchers used deep learning to search 100 million molecules for antibiotics, the AI did not design the drug. It identified a candidate, Halicin, that killed bacteria resistant to every known treatment. Human researchers synthesized it, tested it, and validated it. The machine collapsed years into days. It augmented the search. It did not replace the science.

This pattern appears wherever AI meets high-volume pattern recognition and time-constrained experts. Legal discovery, where a single lawsuit can mean reviewing millions of documents, a task that once consumed armies of junior associates for months. Financial auditing, where anomalies hide in oceans of transactions. Manufacturing quality control, where defects hide in thousands of near-identical parts. Network security monitoring, where attack signatures shift faster than any analyst can track. The dynamic is consistent: systems that try to replace expert judgment founder on complexity they cannot anticipate. Augmentation succeeds because it leaves complexity with the humans who understand it.

The pathologist knows which findings require escalation. The oncologist knows which patients will tolerate aggressive treatment. The nurse knows which vital sign changes matter for this specific patient. These judgments resist automation. They require contextual reasoning earned from years of practice and thousands of encounters. They require reading human beings as carefully as their test results. The observation that precedes judgment is different. Observation is data. Judgment is wisdom.

This distinction points toward a future of more augmentation, not less. Aging populations strain healthcare systems and clinician shortages grow more acute. Diagnostic volumes outpace human capacity. A radiologist can review only so many scans before fatigue degrades accuracy. An AI that pre-screens images extends that physician’s reach without replacing their judgment. So long as demand for imaging continues to outpace supply, an algorithm that helps a radiologist read twice as many scans at higher accuracy does not threaten the job. It makes the radiologist more valuable, because there are never enough radiologists for the scans that need reading. The tools that work treat human expertise as the scarce resource and machine analysis as its multiplier.

The wrong question asks whether AI will replace doctors. The right question asks what doctors miss that AI could catch.

The answer reframes expertise in an age of machine perception. The best diagnosticians will not be those who see the most, but those who know their limits and build the instruments to see beyond them. Value is created at the junction of human judgment and extended vision, where accumulated wisdom meets tireless observation, where intuition earned over decades meets pattern recognition that never tires.

The future of medical expertise is not knowing everything. It is knowing what to build to catch what you miss. The best doctors will not be those who never err. They will be those who construct systems to find their errors before harm is done.

The second set of eyes reveals the purpose of the first. Judgment, not omniscience. Wisdom, not observation.

We build these systems not to outsource our humanity, but to extend its reach. The map is not the territory. But with the right instruments, we can finally read the terrain.

We stop pretending we can see it all alone. We start building the eyes that catch what we miss.

* * *

### Further Reading, Background and Resources

**Sources & Citations**

[Dissecting Racial Bias in an Algorithm Used to Manage the Health of Populations](https://www.science.org/doi/10.1126/science.aax2342) (Science, October 2019)

The study that changed how we talk about algorithmic fairness in healthcare. Obermeyer and colleagues traced systematic underestimation of Black patients’ health needs to a single design choice: using healthcare spending as a proxy for illness. They did not just identify bias; they demonstrated how correcting it could reduce disparities by 84%.

[A Deep Learning Approach to Antibiotic Discovery](https://www.sciencedirect.com/science/article/pii/S0092867420301021) (Cell, February 2020)

AI drug discovery at its best: humans set the objective, machines compress the search. Stokes et al. screened 100 million molecules in three days and found a compound effective against bacteria that had resisted every known antibiotic. The AI found the needle; researchers still had to verify it was actually a needle.

[The Failed Promise of IBM Watson](https://academic.oup.com/jnci/article/109/5/djx113/3847623) (Journal of the National Cancer Institute, May 2017)

The autopsy of Watson for Oncology at MD Anderson. Five years, $62 million, zero patients treated. Required reading for anyone tempted to skip from “promising demo” to “clinical deployment.”

**For Context**

[AlphaFold: A Solution to a 50-Year-Old Grand Challenge in Biology](https://deepmind.google/discover/blog/alphafold-a-solution-to-a-50-year-old-grand-challenge-in-biology/) (DeepMind, November 2020)

Understanding protein folding helps contextualize what “AI success” looks like: a well-defined problem with clear evaluation criteria where machine perception genuinely exceeds human capability.

[WHO Ethics and Governance of Artificial Intelligence for Health](https://www.who.int/publications/i/item/9789240029200) (World Health Organization, June 2021)

You will not read all 165 pages. Nobody does. But the six guiding principles (pages 8-35) give you the vocabulary policymakers and hospital administrators actually use when evaluating AI tools.

**Counter-Arguments**

_Augmentation May Be Transitional, Not Permanent_

Every augmentation success trains the next generation of autonomous systems. Pathologists reviewing AI-flagged regions generate labeled data that improves the next model. The human-in-the-loop is not just a safety feature; it is a training mechanism.

_Selection Bias Distorts the Evidence_

The examples in this essay are cases that worked. We do not hear about the dozens of medical AI startups that quietly failed because their augmentation tools did not improve outcomes. Watson gets mentioned because its failure was spectacular enough to become news.

_Augmentation Tools May Deepen Inequality_

Extended vision costs money. AI-assisted pathology requires infrastructure that small hospitals cannot afford. The radiologist reading twice as many scans with AI assistance works at an academic medical center, not a community hospital serving uninsured patients.