*Originally published in [The AI Monitor](https://theaimonitor.substack.com/p/ai-governance-models-that-actually) · 2025-06-17*

---

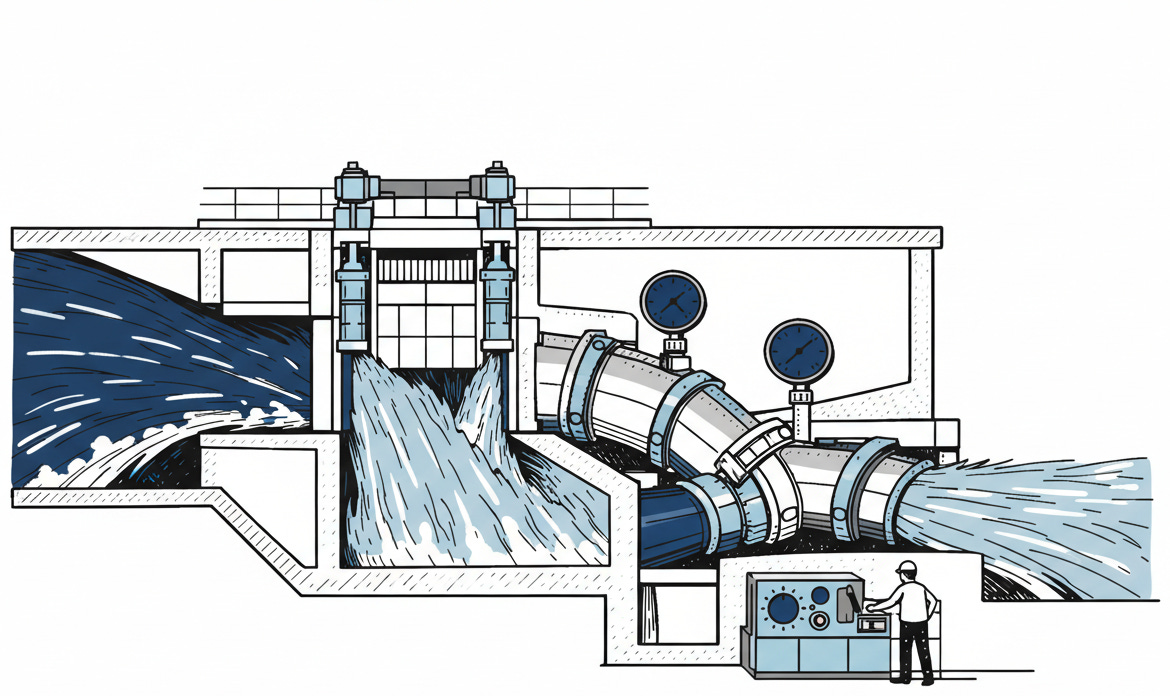

We assume that innovation requires chaos. That governance is the friction that slows us down. This is a dangerous misunderstanding. In safety-critical systems, speed is a function of control, not freedom. You do not make a car faster by removing the brakes; you make it faster by ensuring the brakes work well enough to trust the engine. The question is no longer whether we can build powerful intelligence. It is whether we can build the structures required to survive it.

## The Invisible Revolution

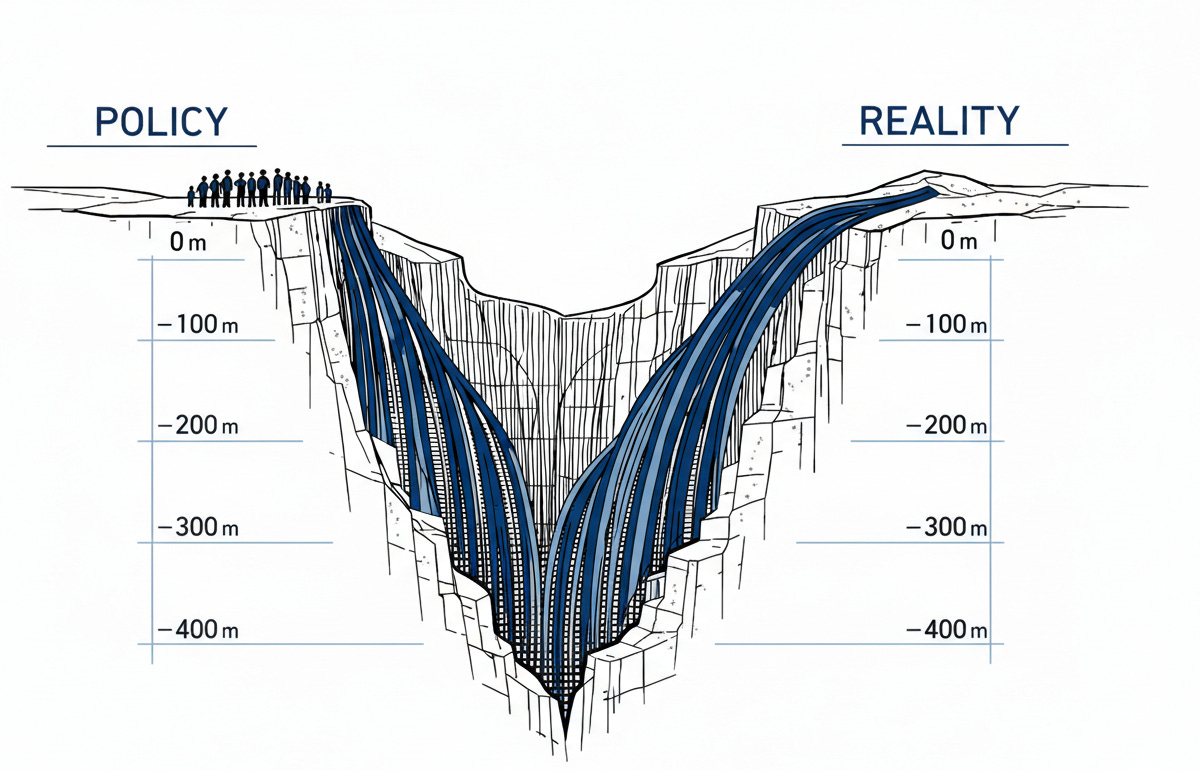

The gap between corporate policy and operational reality is no longer a gap. It is a canyon.

The statistics are precise, but the pattern is universal. Microsoft’s 2024 Work Trend Index found that 75% of knowledge workers already use AI at work, and 78% of those users bring their own tools. Workers are not waiting for permission. They are pasting proprietary code into public chatbots and uploading confidential strategies to consumer tools because the tools work. They are not being malicious; they are being productive. They are trading abstract security risks for immediate efficiency gains. Prohibition has already failed. You cannot forbid a capability that is already embedded in the workflow.

This is not a prediction. A study examining 176,000 AI prompts found that 8.5% contained sensitive data. Employees are not bypassing security to be reckless; they are bypassing it to work.

## The Proof of Performance

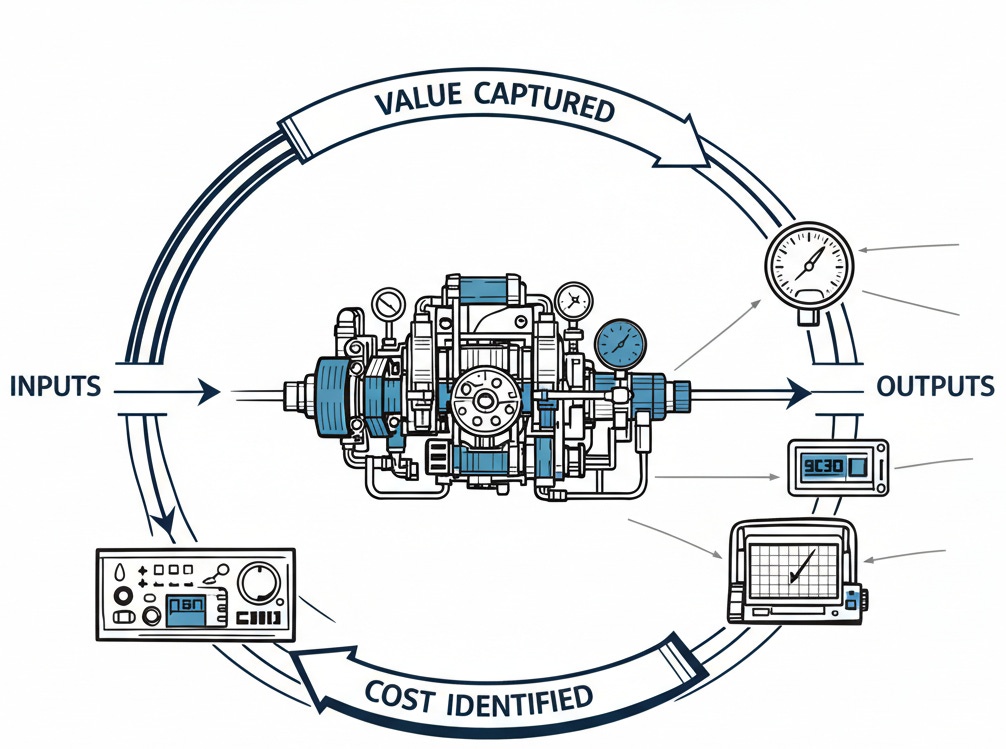

Panic is the standard response. It is also expensive and useless. A few organizations discovered something counter-intuitive: oversight is not a tax. It is a performance multiplier.

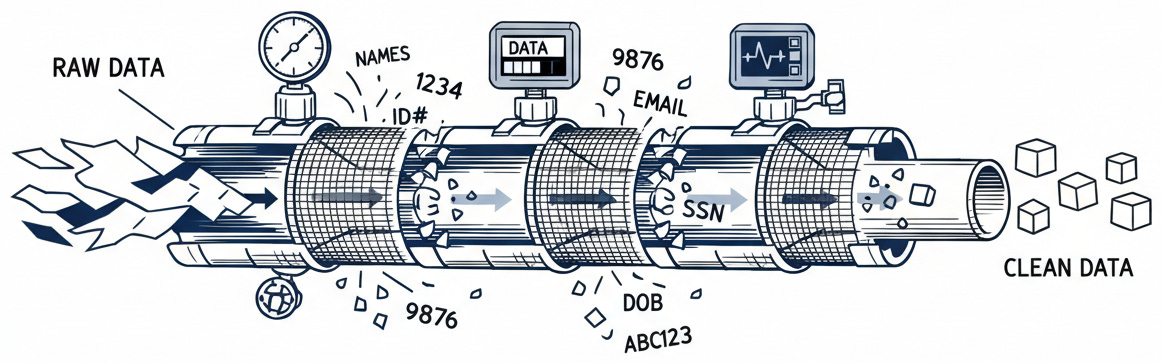

Wells Fargo did not stumble into this efficiency; they engineered it. Burdened by a history of regulatory scrutiny, they could not afford Silicon Valley’s “move fast and break things” philosophy. They built an assistant that handles 245 million interactions annually with zero privacy breaches, not by asking employees to be careful but by making care invisible. The external model simply never sees sensitive data. Speech is transcribed locally, and the text is scrubbed before anything leaves the bank’s own systems. Safety lives in the pipeline, not the policy document.
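To make “safety lives in the pipeline” concrete, here is a minimal sketch of a filter that sits in front of and behind a model call. It is not Wells Fargo’s implementation. The regular expressions, the `Vault` class, and `fake_external_model` are all hypothetical stand-ins, and a real deployment would rely on a vetted PII detection service rather than three patterns.

```python
import re
from dataclasses import dataclass, field

# Hypothetical patterns for illustration only; real systems need far
# broader and better-tested detection than a few regular expressions.
PII_PATTERNS = {
    "SSN": re.compile(r"\b\d{3}-\d{2}-\d{4}\b"),
    "CARD": re.compile(r"\b\d{4}(?:[ -]?\d{4}){3}\b"),
    "EMAIL": re.compile(r"\b[\w.+-]+@[\w-]+(?:\.[\w-]+)+"),
}


@dataclass
class Vault:
    """Keeps the placeholder-to-value mapping inside the trusted boundary."""
    values: dict[str, str] = field(default_factory=dict)

    def tokenize(self, kind: str, raw: str) -> str:
        token = f"<{kind}_{len(self.values) + 1}>"
        self.values[token] = raw
        return token


def scrub(text: str, vault: Vault) -> str:
    """Replace every detected sensitive value with an opaque placeholder."""
    for kind, pattern in PII_PATTERNS.items():
        text = pattern.sub(lambda m, k=kind: vault.tokenize(k, m.group()), text)
    return text


def fake_external_model(prompt: str) -> str:
    """Stand-in for an external model; it only ever receives scrubbed text."""
    return f"intent=card_dispute; echo={prompt}"


def handle_request(user_text: str) -> tuple[str, Vault]:
    vault = Vault()
    safe_prompt = scrub(user_text, vault)   # filter in front of the model
    reply = fake_external_model(safe_prompt)
    safe_reply = scrub(reply, Vault())      # filter behind the model
    return safe_reply, vault


if __name__ == "__main__":
    reply, vault = handle_request(
        "My card 4111 1111 1111 1111 was charged twice, email jane@example.com"
    )
    print(reply)
    print(vault.values)
```

The design point is that the user never has to decide what is sensitive. The mapping from placeholder to real value stays in the vault, inside the trusted boundary, and the model works only with tokens.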

JPMorgan proved that measurement drives value. Their $17 billion technology investment came with a requirement: every AI initiative must prove its worth through centralized stewardship. The result was $1.5 billion in verified savings. When volatility hit the market, their AI-equipped advisors retrieved information 95% faster than competitors relying on manual processes. Measurement creates advantage. The enterprises capturing real ROI are not the ones with the most capabilities. They are the ones with the tightest feedback loops.

## The Regulatory Catalyst

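These results were impressive. Then February 2, 2025, made them unavoidable. The EU AI Act’s first prohibitions took effect that day, and the penalties behind them, enforceable from August 2, 2025, can reach €35 million or 7% of global annual turnover, whichever is higher. Compliance is now survival.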

But you cannot retrofit governance. It is not a patch to download on a Friday. Companies that scattered AI deployments across departments are now realizing that the cost of catching up is higher than the cost of doing it right. The market for governance frameworks is exploding, but purchasing tools is not a strategy. You cannot automate a process you do not understand.

## The Channel, Not the Dam

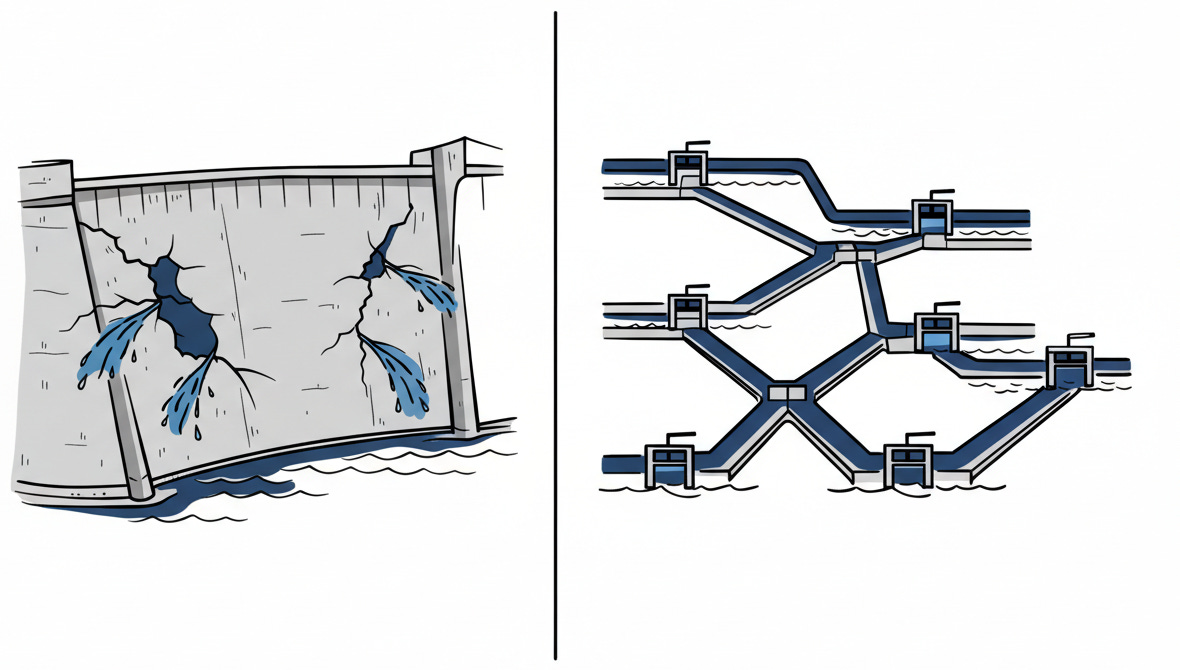

Compliance alone is not strategy. The most successful leaders learned a harder truth: you cannot fight the current. You channel it.

Healthcare institutions and financial firms alike learned that prohibition fails. When major banks blocked access to ChatGPT, employees simply used personal devices. The security risk increased; the productivity gains vanished. The solution was not a higher dam but a smarter channel.

Leading firms now offer internal alternatives that feel as smooth as the public systems. Monitoring runs continuously behind the scenes. Documentation generates automatically. The user experiences only the clean interface. Behind it, oversight functions as an accelerator rather than a brake.

## The Five Shifts

Transformation follows a sequence. You cannot build the roof before the foundation.

It starts with visibility. You cannot govern what you cannot see. Smart organizations implement discovery instruments without punishment, creating amnesty periods that reveal the actual landscape. Employees will confess to using unsanctioned tools if you assure them it is not a firing offense. Once the landscape is visible, you can build automated guardrails. Wells Fargo’s architecture succeeds because users do not have to think about security. Security through invisible design scales; security through constant vigilance fails.

Structure requires context. Transparency comes through training that focuses on reasoning rather than rules. When people understand the failure modes, they stop creating them. Finally, measurement drives discipline. JPMorgan logs every prompt and every outcome. Without that data, AI is expensive theater. With it, you know exactly what is working and what is costing you a fortune. We are not building for today’s use case. We are building for the volatility of the next decade.
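What a tight feedback loop might look like in code: the sketch below wraps any model callable so that every prompt and outcome is recorded without the user doing anything, then summarizes results per use case. It assumes nothing about JPMorgan’s actual systems. `AuditedModel`, `AuditRecord`, and the `accepted` outcome flag are hypothetical names chosen for illustration.

```python
import json
import time
from collections import defaultdict
from dataclasses import asdict, dataclass
from typing import Callable


@dataclass
class AuditRecord:
    use_case: str
    prompt: str
    response: str
    latency_s: float
    accepted: bool = False  # hypothetical outcome signal: did the user keep the answer?


class AuditedModel:
    """Wraps a model callable and records every interaction it serves."""

    def __init__(self, model: Callable[[str], str]):
        self._model = model
        self.records: list[AuditRecord] = []

    def ask(self, use_case: str, prompt: str) -> AuditRecord:
        start = time.perf_counter()
        response = self._model(prompt)
        record = AuditRecord(use_case, prompt, response, time.perf_counter() - start)
        self.records.append(record)
        return record

    def export_jsonl(self) -> str:
        """One JSON object per line, a common shape for audit trails."""
        return "\n".join(json.dumps(asdict(r)) for r in self.records)

    def acceptance_by_use_case(self) -> dict[str, float]:
        """Share of accepted answers per use case."""
        totals: dict[str, list[int]] = defaultdict(lambda: [0, 0])
        for r in self.records:
            totals[r.use_case][0] += int(r.accepted)
            totals[r.use_case][1] += 1
        return {name: hits / count for name, (hits, count) in totals.items()}


if __name__ == "__main__":
    model = AuditedModel(lambda prompt: prompt.upper())  # toy model
    first = model.ask("research", "summarize the filing")
    first.accepted = True
    model.ask("research", "compare two funds")
    model.ask("drafting", "write a client note")
    print(model.acceptance_by_use_case())
    print(model.export_jsonl())
```

An acceptance rate is only one possible outcome measure. The point is that whatever signal you choose gets captured at the same moment as the prompt, so the data for deciding what to keep funding already exists when the question is asked.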

## The Stakes

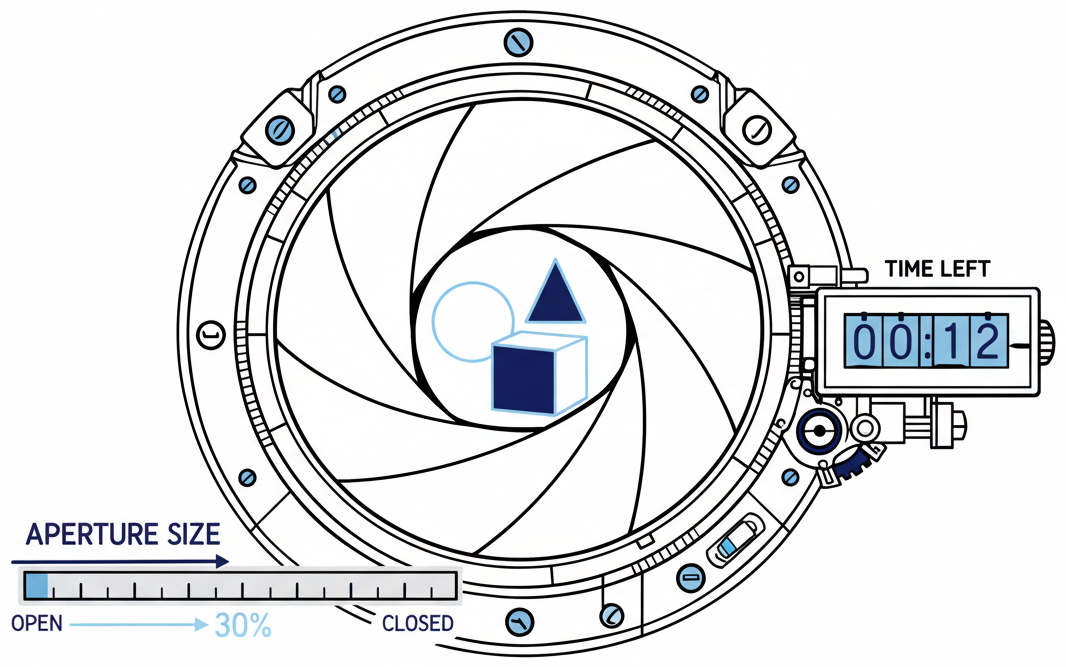

The window to implement this is closing.

Only 1% of organizations describe their AI deployments as mature. The other 99% are still experimenting. The opportunity for establishing advantage is measured in quarters, not years. Wells Fargo processes a quarter-billion interactions with zero data leaks. JPMorgan extracts $1.5 billion in value through centralized discipline. These are not case studies. They are blueprints.

Somewhere in our organizations, an employee is pasting something sensitive into a public AI system right now. The question is not whether we can stop them. It is whether we have built the channels to direct that innovation safely, or whether we are still hoping that warnings in employee handbooks will save us.

The distinction between control and command is vanishing. We are no longer the operators of these tools; we are the architects of their environment. The companies that thrive will not be the ones with the most advanced models. They will be the ones that accept that flow is inevitable and build the channels to direct it. The capabilities are irrelevant. The structures we build around them are everything.

* * *

### Further Reading, Background and Resources

## Sources & Citations

**Microsoft Work Trend Index 2024** \- [AI at Work Is Here. Now Comes the Hard Part](https://www.microsoft.com/en-us/worklab/work-trend-index/ai-at-work-is-here-now-comes-the-hard-part) (May 2024)

The definitive survey on shadow AI. Microsoft and LinkedIn surveyed 31,000 knowledge workers across 31 markets, revealing the 75% adoption and 78% BYOAI figures that anchor this essay’s urgency argument. Worth reading for the methodology alone: Edelman Data & Intelligence conducted the fieldwork between February and March 2024, giving these numbers unusual rigor for industry research.

**VentureBeat** \- [Wells Fargo’s AI assistant just crossed 245 million interactions](https://venturebeat.com/ai/wells-fargos-ai-assistant-just-crossed-245-million-interactions-with-zero-humans-in-the-loop-and-zero-pii-to-the-llm) (April 2025)

The technical architecture matters here. CIO Chintan Mehta explains how Wells Fargo’s privacy-first pipeline actually works: speech transcribed locally, text scrubbed and tokenized internally, only intent extraction sent to the external model. The “filters in front and behind” quote captures the governance philosophy better than any whitepaper could.

**Reuters** \- [JPMorgan says AI helped boost sales, add clients in market turmoil](https://www.reuters.com/business/finance/jpmorgan-says-ai-helped-boost-sales-add-clients-market-turmoul-2025-05-05/) (May 2025)

Straight from earnings calls, not marketing materials. The $1.5 billion in verified savings and “95% faster information retrieval” figures come from executives speaking to investors, a setting where exaggeration carries legal consequences. The context of market turmoil makes the governance success more credible, not less.

**European Parliament** \- [EU AI Act: First regulation on artificial intelligence](https://www.europarl.europa.eu/topics/en/article/20230601STO93804/eu-ai-act-first-regulation-on-artificial-intelligence)

Primary source for the regulatory framework. Note the implementation timeline: prohibitions took effect February 2, 2025, but penalty enforcement begins August 2, 2025. Jones Day’s [legal analysis](https://www.jonesday.com/en/insights/2025/02/eu-ai-act-first-rules-take-effect-on-prohibited-ai-systems) provides a useful interpretation of what “prohibited practices” covers.

## For Context

**McKinsey** \- [The state of AI: How organizations are rewiring to capture value](https://www.mckinsey.com/capabilities/quantumblack/our-insights/the-state-of-ai-how-organizations-are-rewiring-to-capture-value) (March 2025)

The jaw-dropping gap: 92% planning increased AI investment, but only 1% reporting mature implementations. That “1% mature” figure refers to organizations where gen AI is “fundamentally changing how work is done and driving substantial business outcomes.” Survey of 1,491 participants across 101 nations. This gap defines the governance challenge.

**Grand View Research** \- [AI Governance Market Report](https://www.grandviewresearch.com/industry-analysis/ai-governance-market-report) (2024)

Market projections vary wildly, from $1.4 billion to $7 billion by 2030, depending on the research firm. The consistent signal: explosive growth from a $227 million base in 2024. The variance itself tells a story about how nascent this market remains.

**Stanford HAI** \- [Wells Fargo joins Stanford HAI Corporate Affiliate Program](https://hai.stanford.edu/news/wells-fargo-joins-stanford-hai-corporate-affiliate-program) (2024)

The partnership that produced training for 4,000+ employees across multiple cohorts. The program delivers a structured AI ethics and governance curriculum developed by Stanford faculty, combining self-paced modules with live workshops. It demonstrates what serious governance investment looks like in practice: academic rigor meets enterprise scale through sustained institutional collaboration, not one-off vendor purchases.

## Practical Tools

**Governance Vendor Evaluation Criteria**

When assessing AI governance platforms, prioritize these capabilities: (1) real-time monitoring of model outputs across deployment contexts, (2) audit trail generation that satisfies regulatory discovery requirements, (3) integration depth with existing security and compliance tooling, (4) federated access controls that balance central oversight with business unit autonomy.
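One way to turn these four criteria into a comparable number is a simple weighted score. The sketch below is an illustration, not a recommended methodology. The weights and the 1-to-5 rating scale are assumptions, since the criteria above are listed without weights, and each organization would set its own.

```python
# Hypothetical weights: the four criteria come from the text above,
# but the relative importance assigned here is an assumption.
WEIGHTS = {
    "realtime_monitoring": 0.30,
    "audit_trail": 0.30,
    "integration_depth": 0.20,
    "federated_access": 0.20,
}


def score_vendor(ratings: dict[str, int]) -> float:
    """Return a weighted score from 1.0 to 5.0 given a 1-5 rating per criterion."""
    missing = WEIGHTS.keys() - ratings.keys()
    if missing:
        raise ValueError(f"missing ratings: {sorted(missing)}")
    for name in WEIGHTS:
        if not 1 <= ratings[name] <= 5:
            raise ValueError(f"rating for {name} must be between 1 and 5")
    return round(sum(WEIGHTS[name] * ratings[name] for name in WEIGHTS), 2)


if __name__ == "__main__":
    vendor_a = {
        "realtime_monitoring": 5,
        "audit_trail": 4,
        "integration_depth": 3,
        "federated_access": 2,
    }
    print(score_vendor(vendor_a))
```

Refusing to score a vendor with a missing rating is deliberate: a gap in the evaluation should surface as an error, not as a silently lower number.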

**EU AI Act Compliance Timeline**

* February 2, 2025: Prohibited practices take effect

* August 2, 2025: Penalty enforcement begins

* August 2, 2026: High-risk AI system requirements apply

* August 2, 2027: Full compliance required for all in-scope systems

Organizations operating in or serving EU markets should map current AI use cases against the prohibited and high-risk categories now, not later.
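The timeline above is easy to encode so that a compliance dashboard can answer “what applies to us today, and what is next?” The following sketch uses only the four dates listed above. The function names are hypothetical, and the labels are this essay’s shorthand, not legal text.

```python
from datetime import date
from typing import Optional

# Dates and labels copied from the timeline above.
MILESTONES = [
    (date(2025, 2, 2), "Prohibited practices take effect"),
    (date(2025, 8, 2), "Penalty enforcement begins"),
    (date(2026, 8, 2), "High-risk AI system requirements apply"),
    (date(2027, 8, 2), "Full compliance required for all in-scope systems"),
]


def obligations_in_force(today: date) -> list[str]:
    """Milestones whose start date has already passed."""
    return [label for start, label in MILESTONES if start <= today]


def next_milestone(today: date) -> Optional[tuple[int, str]]:
    """Days remaining until the next milestone, or None if all have passed."""
    for start, label in MILESTONES:
        if start > today:
            return (start - today).days, label
    return None


if __name__ == "__main__":
    today = date.today()
    print("In force:", obligations_in_force(today))
    print("Next:", next_milestone(today))
```

Mapping each internal AI use case to the earliest milestone that touches it is the natural next step, but that mapping depends on legal classification and is outside what a date table can decide.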

## Counter-Arguments

**“Governance slows innovation”**

The strongest version: governance frameworks add friction at precisely the moment when competitive advantage depends on speed. JPMorgan’s 450+ use cases didn’t deploy themselves; each required evaluation, approval, and monitoring. The counterpoint isn’t that governance doesn’t slow things down (it does), but that ungoverned AI creates different, slower problems: remediation of failures, regulatory response, trust repair. Wells Fargo’s “weeks rather than months” deployment timeline suggests the friction can be engineered into acceleration, but only with significant upfront investment in processes and infrastructure.

**“The market will self-correct”**

The libertarian case: bad AI governance will produce bad outcomes, bad outcomes will produce reputational damage, and reputational damage will produce better governance, all without regulatory intervention. This argument has historical merit in some domains. The counterpoint: AI failures often harm parties who weren’t the buyers (loan applicants, job candidates, content consumers), breaking the feedback loop that makes market self-correction work. The EU AI Act exists precisely because European regulators concluded that market incentives alone wouldn’t protect affected populations. Whether you agree depends partly on your priors about regulatory competence versus market efficiency.

**“By the time governance is implemented, the technology has moved on”**

The pace-of-change objection: model capabilities double annually while governance frameworks take 18-24 months to develop and deploy. By the time your AI policy is approved, GPT-6 has rendered it obsolete. The counterpoint: effective governance is principle-based, not technology-specific. Wells Fargo’s “filters in front and behind” architecture works regardless of which model sits in the middle. The organizations failing at governance are those writing rules for specific tools rather than building adaptive systems. The EU AI Act explicitly takes a risk-based approach precisely because regulators understood that capability-specific rules would be instantly outdated.

**“We’re different: these frameworks don’t apply to us”**

The uniqueness objection: our industry has special requirements, our company culture is different, our risk profile is unusual. General frameworks miss the nuance. The counterpoint: every organization believes this, and almost none are actually unique in the ways that matter for governance. Financial services, healthcare, and government all claimed special status, and all are now racing to implement substantially similar governance architectures. The question isn’t whether your context is different (it is), but whether that difference changes the fundamental requirements: oversight, auditability, human accountability, data protection. It rarely does.